Multi-vendor data management Schlumberger's ProSource

Shell is in the throes of (yet another) global reorganization. This sets out to re-engineer the way the company does its business with a focus on cross-border optimization through portfolio management. A new standard software environment from Schlumberger both reflects and underpins the new globalization strategy.

SIS

Shell has awarded Schlumberger Information Solutions (SIS) a multi-year, multi-product software master agreement whereby SIS’s E&P application software will form an important part of the Shell Global Standard Software Portfolio—a common set of software tools approved for use by Shell’s affiliates worldwide.

Ching

Shell EP Technology director Paul Ching said, “SIS solutions will help Shell achieve global standardization, especially in data management and static modeling. Combined with other vendor tools and our own proprietary software they will ensure that our technology capability remains at the forefront of the industry”.

Toma

SIS president Ihab Toma added, “This agreement lays a common foundation for SIS to provide its leading-edge software technology, including the ‘Living Model’, our open 3D model-centric seismic-to-simulation workflow, to Shell and its E&P business affiliates worldwide. In addition, it provides the opportunity for cooperative developments to meet Shell’s long-term technology needs.”

.NET

The agreement includes information management, geoscience, reservoir simulation, well and production engineering, and economics solutions. The deal allows Shell to integrate its proprietary tools through Schlumberger’s infrastructure OpenSpirit, and software dev kits for GeoFrame and the emerging ‘Project Ocean’. This is a brand-new framework based on Microsoft’s .NET technology.

LBP

SIS economics and financial planning solutions, the ‘Living Business Plan’, will form the basis for Shell’s worldwide capital allocation program and allow for secure Web-based collection of regional investment plans, their global rollup and optimization of key business measures.

See our report in this issue from the Schlumberger Miami Forum for more on Shell’s portfolio management and on Project Ocean.

Norwegian Scandpower has spun off its Petroleum Technology unit to venture capital fund Energivekst AS. Scandpower Petroleum Technology (SPT) management is participating in the acquisition and will become part-owners of the company.

Olga 2000

The game plan is to leverage SPT’s market-leading OLGA 2000 transient, multiphase flow modeling package with accelerated penetration in existing and new markets. Plans are also afoot to broaden SPT’s range of products and services while retaining the focus on flow modeling.

92 employees

SPT has 92 employees, with head office in Oslo and sales offices in Houston, London, Perth, Dubai, Milan, and Hamburg. SPT turnover has grown from NOK 35 million in 1999 to NOK 89 million in 2002.

Mepo

Other SPT software includes reservoir management (Ipos), history matching (Mepo) and a postprocessing tool for Eclipse (ECLpost). Most recently SPT has developed a simulator for underbalanced drilling, powered by OLGA 2000.

If you like coffee and are of an obsessive nature I strongly recommend that you read no further. My new hobby is espresso and I don’t mean popping into Starbucks occasionally. I mean using a vast range of appliances at home in a never ending quest for the holy grail—the ‘perfect shot’. After my recent trip to the Denver SPE ACTE—where optimization is all the rage—it struck me that that was exactly what I was doing in my search for coffee nirvana.

Green beans

To give you an idea of the extent of my malady, let me walk you through the process. First, get your beans—‘variable’ number one. These have to be green, un-roasted as bought from obscure boutiques on the net, selecting a variety, blend and origin. Next roast ’em—another variable, coupled to the first. The degree of roast must be tuned both to the type of coffee and to the desired result.

Hard grind

When the roast has cooled, the grind setting comes into play. Again, this is a variable that needs incessant tweaking—to match upstream (beans/roast) choices and downstream—desired results. A few more hurdles before the tasting—the tamp of the coffee in the espresso brew head—and the timing of the pour. Some really serious home baristas add a PID controller to allow for super-fine brew temperature regulation.

Start-over

You can then savor your shot—or more likely throw it away if you are a beginner—and start over, tweaking one variable or another in the never ending search for perfection. As you can imagine with so many variables to play with, it is frequently hard to know which ones to tweak to fix a particular deficiency. After a shot or two it can be hard to remember what you tweaked last time.

ACTE

Sitting through a demo of a modeling tool at the SPE ACTE I was reminded of my coffee quest. Reservoir modeling, like espresso making, is an optimization process where multiple variables and scenarios are adjusted to achieve a desired result.

Forms, forms

What struck me at the SPE ACTE, as the nth data entry form popped up and the mth menu dropped down, was an undue emphasis on visualizing model results. Most of the input assumptions are obscured to the subsequent users of the model. Maybe there is room for better visualization and management of input tweaks. This would facilitate ‘assumption-mining’ rather than data mining, although tools such as those on display from SpotFire would seem well suited to this exercise.

Virtual hyperspace

We have a ‘knowledge management’ issue here. By making all input accessible in some virtual hyperspace, model evaluators would get used to the ‘shapes’ that tried and tested parameter selections represent—and could quickly spot anomalous and unlikely choices. Perhaps future managers will massage the input with haptic devices—or maybe I had too much coffee this morning. Whatever.

Aspen

After Denver we were invited to attend the AspenTech user group meeting right here in Paris. More on this—and on the SPE later. But this meeting tied up a few loose ends in our quest for illumination in simulation and optimization—the big buzz at the SPE. There is a headlong dash underway in the industry to implement the ‘e-field’—with technology transfer underway from refining to the upstream and an inevitable culture clash.

Culture clash

The first thing we had to grasp is the huge difference between process modeling at the refinery—and modeling the reservoir. Refiners model processes they understand. Any discrepancy between the model and the facts can be fixed by looking at some of the plethoric measurements of the process itself.

Poor relation

Reservoir modeling is the poor relation of process modeling. Here parameters are tweaked so that the model fits a limited data set. ‘History matching’ is widely used to establish the model and here I bring you an interesting aside from a book review that appeared recently in EOS*. Reviewing Hugh Gauch’s book—Scientific Method in Practice**, Gerard Fryer of the University of Hawaii writes that Gauch ‘correctly argues that a model cannot be judged from its performance in predicting the data that were used to fit it in the first place’.

Objective?

That pretty well destroys most ‘history matching’ in one fell swoop. But according to Fryer, there are ways to judge model performance objectively—‘Statistical efficiency, Akaike Information Criterion and Schwarz’s Bayesian Criterion’ to name-drop but three. I’m not sure how widely these objective criteria are applied to oilfield models. Suddenly though, with sim opt, culture clash, data mining and statistical certainty (?) all looming on the horizon, reservoir management looks as though it is undergoing something of a renaissance. Like I said—more on these fascinating developments next month.
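For readers who, like me, needed to look these up, here is a minimal sketch of how two of the criteria Fryer lists are commonly computed for a least-squares fit. It assumes Gaussian errors, so that the log-likelihood can be replaced by a term in the residual sum of squares. The function name and the misfit numbers are invented for illustration and come from neither Fryer's review nor Gauch's book.

```python
import math


def aic_bic(residuals, n_params):
    """Return (AIC, BIC) for a least-squares fit, assuming Gaussian errors.

    residuals: observed minus modeled values (e.g. a history-match misfit).
    n_params:  number of parameters that were tuned to obtain the fit.
    """
    n = len(residuals)
    rss = sum(r * r for r in residuals)
    # For Gaussian errors, -2 ln(likelihood) equals n ln(RSS / n) up to a
    # constant that cancels when models are compared on the same data.
    neg2_loglik = n * math.log(rss / n)
    aic = neg2_loglik + 2 * n_params            # Akaike penalty
    bic = neg2_loglik + n_params * math.log(n)  # Schwarz penalty
    return aic, bic


# Hypothetical misfits for two models matched to the same ten observations.
simple_fit = [0.5, -0.3, 0.2, -0.4, 0.1, 0.3, -0.2, 0.4, -0.1, 0.2]
tuned_fit = [0.4, -0.3, 0.2, -0.3, 0.1, 0.3, -0.2, 0.3, -0.1, 0.2]

print("2 parameters:", aic_bic(simple_fit, 2))
print("6 parameters:", aic_bic(tuned_fit, 6))
```

Lower scores are better. Both criteria reward a tighter fit but charge for every extra tuned parameter, which is how they guard against a model that merely reproduces the data it was fitted to.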

PID?

By the way, some of you may be wondering what a ‘PID controller’ is. My researchers tell me that it is a ‘Proportional, Integral, Derivative’ device. Not sure what that means—but they are all over refineries!
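For the curious, here is a rough sketch of what such a controller computes, in the textbook discrete form. The class name, the gains and the 93-degree brew temperature are invented for illustration. A real espresso or refinery controller would add output limits and integral anti-windup.

```python
class PID:
    """Textbook discrete PID controller (illustrative sketch only)."""

    def __init__(self, kp, ki, kd, setpoint):
        self.kp = kp              # proportional gain
        self.ki = ki              # integral gain
        self.kd = kd              # derivative gain
        self.setpoint = setpoint  # target value, e.g. brew temperature
        self._integral = 0.0
        self._prev_error = None

    def update(self, measurement, dt):
        """Return the control output for one time step of length dt."""
        error = self.setpoint - measurement
        # Integral term: accumulated error, removes steady-state offset.
        self._integral += error * dt
        # Derivative term: rate of change of the error, damps overshoot.
        if self._prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self._prev_error) / dt
        self._prev_error = error
        return (self.kp * error
                + self.ki * self._integral
                + self.kd * derivative)


# Hypothetical gains and readings: boiler at 90, then 92, target 93.
controller = PID(kp=2.0, ki=0.5, kd=0.1, setpoint=93.0)
for reading in (90.0, 92.0):
    print(reading, controller.update(reading, dt=1.0))
```

The output would drive the heating element: the further and the longer the temperature sits below target, the harder the controller pushes.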

*EOS Transactions of the AGU Vol 84, N° 36 September 2003.

**Cambridge University Press. ISBN 0-521-01708-4.

Oil ITJ—what is HP’s new energy business unit up to in oil and gas?

Ferling—HP’s worldwide energy industry unit was created after the HP/Compaq merger as a division of our manufacturing business. We have major corporate accounts with Shell, ExxonMobil, Schlumberger and others—also through channel partners.

Oil ITJ—What does HP do for its oil and gas clients?

Ferling—In the oil business, technology change is the name of the game particularly with the advent of Linux and Windows in high performance computing. We partnered with Schlumberger on the port of Eclipse to Itanium 2 and 64-bit Linux – with a massive hike in compute power. We also helped build BP’s Houston-located supercomputer – which is 3 times faster than the old machine with 7.5 teraflops of compute power and 8 terabytes of memory. We also work with Paradigm, Roxar and Petrel on certification.

Oil ITJ—Is that a big part of your activity, certifying software for your hardware?

Ferling—HP understands compilers! There was a considerable technology transfer from HP to Intel during the development of the Itanium 2. But our involvement is also at a high-level—particularly with the new ‘adaptive computing’ strategy.

Oil ITJ—What’s adaptive computing?

Ferling—We looked at the way changes in our clients’ business interacted with their IT. We found that it takes seven times longer to effect IT changes than it does to change the business. So an agile IT infrastructure can make a difference. Today a business change may mean buying a new server and then integrating it into the existing infrastructure. We want to get away from this equation. HP’s agile infrastructure separates a business process layer from an application layer and an infrastructure layer. A process change should trigger a change in IT.

Oil ITJ—Have you deployed this technology yet?

Ferling—We already have two real world implementations. The universal data center (UDC) virtualizes all IT network and storage infrastructure and services. Our Intelligent software allows for drag and drop configuration of such virtualized services for resource allocation. You can add new users, email accounts or define resources.

Oil ITJ—What about ‘agility’ in oil and gas?

Ferling—We are in discussion with oil and gas clients on agile infrastructure. They are also very interested by our joint venture with Microsoft – the collaborative business infrastructure (CBI.NET). We see CBI.NET getting traction in environments such as the digital oilfield of the future (DOFF)—supporting real time drilling operations and closed loop production. CBI.NET leverages Microsoft’s .Net architecture and BizTalk to integrate various data sources into a joint view. SCADA sources are mapped to XML. One gas producer client used this environment to reconcile volume differences across multiple production accounting systems.

Oil ITJ—Sounds like Tibco?

Ferling—Yes it is. The project began five years ago in HP’s own manufacturing as the value collaboration network. HP’s consultants and systems integrators have been working on using the CBI.NET infrastructure for supply chain integration.

Oil ITJ—Which brings us to the subject of e-commerce – where is it working?

Ferling—E-commerce is very important for oil and gas traders and for retail gas station operations – where there is a real supply chain. Service stations represent a low margin business at best – in some countries where there is a fixed price – this can be a negative margin business. Many companies are now looking to make their money on the convenience store end of the business. HP has been talking to marketing managers about appropriate technology for gas stations. This has led to our E-tropolis product for retail.

Oil ITJ—So you are moving in on SAP & Accenture!

Ferling [laughs]—Not exactly. SAP is a very big partner of ours—we work closely with them. There is no major overlap—on the contrary, I’d say that it’s hard to find a closer partnership. In systems integration there are always lots of partnerships—even though HP has no problem taking on big deals itself. Usually the different parties contribute different skill sets—this varies from deal to deal and from country to country. HP tries to understand who is providing the business value. For instance, we developed an Enterprise Portal for a gas station chain’s convenience stores. Here we had to evaluate partners for B2B, B2C etc.

Oil ITJ—We know what Schlumberger does, what Landmark does but what exactly is HP’s oil & gas value proposition—isn’t this all a bit diffuse?

Ferling—Not at all! Our core is the adaptive enterprise—agility, cost savings, help desks. We support big companies with tens of thousands of PCs. At this size of deployment, worldwide support can only be provided by a worldwide company. We manage the whole client environment. In fact, along with every contract proposal we do due diligence and tell the client how much can be saved. HP is 130,000 people – with around 65,000 in services – the third largest IT service company in the world; the largest in Microsoft-based enterprise computing and in mission critical.

Oil ITJ—What synergies have accrued from the merger?

Ferling—We have made serious savings and now we all use each other’s technologies. Combined, HP is now the second largest IT company in the world. This gets a lot of pull from corporate accounts. Incidentally, during the merger some serious pre-merger planning meant that we had integration from day one. For instance there were immediately 200,000 mail addresses up and running. People integration takes longer because of culture and local environments. But in the present oil and gas division I don’t even know which company many of the folks came from.

Paradigm Geotechnology (formerly Paradigm Geophysical) has purchased four IBM clusters for its seismic imaging activity. The four ‘Blue Gold’ supercomputers are located in Paradigm’s Houston, UK, Russian and Indian offices. IBM’s global support was instrumental in Paradigm’s choice. Each unit comprises ‘several hundred’ IBM BladeCenter nodes running Linux on 3.06 GHz Xeon processors.

Barr

Paradigm president and COO Elie Barr said, “Our ‘Focus’ seismic processing software, GeoDepth velocity modeling and depth imaging system, and Earth Domain Imaging migrations are extremely compute-intensive. The IBM BladeCenter system enables us to complete our projects in a timely manner.”

De Groot-Bril (dGB) is to open up the source code for its d-Tect seismic processing and pattern recognition software. Under a new license, OpendTect source code and compiled versions can be used free of charge for R&D purposes; commercial use of the software and derived products will be subject to a ‘modest end-user maintenance and support fee’. Universities, software vendors, private consultants and E&P companies can use OpendTect to build commercial or not-for-profit seismic interpretation modules through an easy-to-use plug-in design.

Plug-in

The first commercial plug-ins vendor is – guess who – dGB itself! d-Tect has been repackaged as three OpendTect plug-ins for dip-steering, neural networks and data access. dGB will maintain and extend the OpendTect environment and will continue to develop and market additional commercial plug-ins including a seismic interpretation module. dGB’s ‘GDI’ quantitative interpretation tool will be ported to the new environment. OpendTect will be released via the internet ‘without license managing restrictions’ next month.

UK-based EarthModels Ltd. is to offer its Chronos family of reservoir modeling programs via the Petris Winds ASP service. Chronos ‘fills the gap between full-blown geocellular modeling and pencil-and-paper’. The tool was designed for quick-look evaluation and ranking of subsurface options such as step-out or infill well locations, and for prospect appraisal. EarthModels will initially offer its Chronos 3D geological reservoir modeling program based on a ‘gridless geostatistical realization’. ChronoSeis - a model-based 4D seismic analysis tool – will be offered at a later date.

Gawith

EarthModels’ MD David Gawith said, “Our alliance with Petris will help us reach a wider market. Chronos was designed with Internet-based teamwork in mind and is ideally suited to ASP. These Web-based applications will help oil & gas producers reduce their internal support requirements and extend the life of existing hardware.”

Landmark has just released Drilling Desktop (DD), its new integrated platform for well planning and drilling operations. DD supports well design, real-time data access and 3D visualization in a shared data management environment.

Lane

Landmark CEO Andy Lane said, “Our focus is on the challenges of long-reach horizontal wells, deep-water drilling and small reservoirs. Solutions like Drilling Desktop will enable operators to drill safer and better-engineered wellbores.”

Engineer’s Desktop

The platform is said to optimize drilling engineering workflows, to reduce cycle-time and increase data integrity. Drilling Desktop is a component of the Engineer’s Desktop, a common project management system that combines drilling/production data and analytical tools. The full offering will be available in December 2003.

Roth

Murray Roth, Landmark’s VP marketing added, “This new integration platform links engineering software and drilling operations—making for efficient well design, increased production and reduced costs.” DD also supports drill string and casing run analysis workflows and provides a level of integration hitherto only available to geoscientists.

China Petroleum and Chemical Corporation (Sinopec) has selected Cisco Systems for a major upgrade of its network. The solution is based on Cisco 7600 and 3600 series routers. Sinopec will deploy other Cisco services and applications, including H.323 multimedia conferencing, virtual private networking and IP telephony.

Broadband

The network upgrade will enable the integration of multiple applications and connect hundreds of Sinopec subsidiaries. The broadband network will centralize Sinopec’s ERP systems and automate office administration and data analysis.

Cheung

Cisco VP Fredy Cheung said, “To help our customers we share our experience gained with their international peers in the oil and chemical industry. Early Cisco involvement can improve an organization’s business model and competitive strength.”

ESRI’s ArcIMS web-based map server is proving a popular means of serving GIS data over the web. But the vanilla ArcIMS client has several shortcomings in a production environment. Exprodat’s explosively named ‘NitroView’ is set to fix these issues.

Souped-up

NitroView is a souped-up version of ESRI’s ArcIMS HTML viewer which supports interactive map layout, scaled hardcopy and a spatial toolkit. NitroView also talks to ArcView 3.x and 8.x data which can be viewed along with IMS data.

Branding

NitroView can be branded with a corporate logo and marginalia customized to corporate standards. Exprodat claims the new product provides a ‘functional GIS solution for all users—reducing the need for desktop GIS deployment’.

Speaking at the London Petrophysical Society last month, Petrolink’s Operations Manager Jon Curtis described a real-world deployment of the emerging WITSML data standard.

PowerStream

Petrolink’s PowerStream supports communication of real-time well data from the rig to corporate intranets via Petrolink’s secure servers. PowerStream leverages the WITSML log data standard – an update of the WITS (Wellsite Information Transfer Specification) which has been in use for several years.

Neutral

Petrolink’s PowerStream Server is based on the published WITSML schemas and covers the majority of data acquired at the wellsite. PowerStream operates as a neutral interface between rig-based acquisition companies and automates transfer of data between different networks.

WellLogML

Curtis showed how other XML-based formats such as WellLogML and LogGraphicsML can be leveraged to plot WITS, WITSML and other data formats. Output formats can be tuned to a variety of uses – including daily reports, well schemas, geological plots and log curves.

Some like to pontificate on the ageing workforce and the decline in upstream student intake. ChevronTexaco Corp. (CTC) is doing something about it. Hot on the heels of setting up a ‘smart field’ R&D center at the University of Southern California (see Oil ITJ Vol. 8 n° 9), the company is now funding a new ‘Center of Research Excellence in Earth Science’ at the Colorado School of Mines. The center’s co-executive directors will be John Hebberger, research manager at CTC and CSM distinguished scientist Chuck Kluth.

Subsurface

The center will develop advanced technologies to improve interpretation of subsurface geology through computer modeling. R&D will center on integrated technologies targeted to deepwater exploration. CTC employees will participate in the program and provide real-world geological data from oil and gas fields from around the world.

World-wide

The CRE will support a strong educational component drawing top graduate students from around the world. The program at CSM will also train existing CTC employees in innovative petroleum exploration techniques and strategies.

Fluid flow

The center is actually the third CTC-funded R&D unit. In December 2001, the company established a Center of Research Excellence in production fluid flow at the University of Tulsa where research focuses on emulsions and multiphase flow, dispersions and heavy-oil chemistry.

Peter Goode—the (very) new boss of the post-Sema divestment Schlumberger Information Solutions is unequivocal—the New SIS is going to ‘revolutionize’ E&P core operations and processes by leveraging Information Technology. This is necessary because of a squeeze from demand-side growth and supply-side constraints such as competition for capital, regulations, demographics, security and transparency. Ihab Toma, now VP of sales and consulting, is ready to fulfill clients’ needs in workflow, capital planning and real-time operations. SIS’ IT unit has a revenue forecast of over $900 million for 2003. The restructuring and rebrand of SIS, which began two years ago, is bearing fruit with InfoStream-based workflows, ‘Formula One’ visualization and the Petrel ‘Living Model’.

Drilling ‘blind’

Toma forecasts a huge increase in the number of reservoirs benefiting from numerical simulation thanks to economical, PC-based simulation. A similar opportunity exists in real-time directional drilling—90% of which is still carried out ‘blind’. Continuing the dynamic theme, Toma underlined the importance of the recent deal with Aspentech and the Living Business Plan which crosses the divide between technology and finance. Toma also unveiled Project Ocean—a brand new integration framework for pretty well all SIS applications which will be a ‘state-of-the-art’ Microsoft .Net development.

ExxonMobil

ExxonMobil Upstream Technical Computing VP Steve Comstock’s ‘proposition’ is that standard systems create more enterprise value than multiple systems customized for each asset. By sharing databases, centers of expertise and best practices, Exxon assures transferable learnings and maximum integration. Getting ‘common’ is better than getting ‘best’. ‘We want petrophysicists around the world to use one tool—not ten as before’. ‘Common’ has saved ExxonMobil around $100 million (around 30%) on its annual spend on technology solutions (hardware, software and data management). 50% of this saving has been in the cost of data management. Today ‘G&G folks do G&G—not data management’. But the relationship between SIS and ExxonMobil has not always been a bed of roses. Comstock had kicked-off his address with a heartfelt plea to SIS to ‘fix GeoFrame 4’—a sentiment seemingly shared by other SIS clients. But in his summing-up, Comstock was conciliatory—‘We’ll fix it together!’

Pioneer

Tom Halbouty described Pioneer’s ‘PioneerNet’ Portal as facilitating interaction between a worldwide workforce and as providing efficiency through improved information access. The system is built on DecisionPoint and the Plumtree Portal. Schlumberger was selected because Pioneer ‘did not want to have to educate a general solution provider in upstream domain knowledge’. The portal was developed in three months—covering production, authorization for expenditure (AFE) reporting, application launching and score cards. Challenges included getting users to migrate from email to the portal. Keeping content fresh and complying with records retention policy were also critical.

Tessier

Carole Tessier provided a close-up of the PioneerNet with data roll-up from Landmark’s TOW/CS, FieldView and Aries. Forecast vs. actual differences are highlighted in a cut-down version of Schlumberger’s OilField Manager. Alerts can be emailed to field workers on status change. AFE tracking has brought ‘tears of joy’ to workers’ eyes! Pioneer also uses one of the first components of Schlumberger’s Project Ocean—the Results DB—to track acquisition and divestment opportunities. Tessier announced a new ‘E&P Portal Consortium’—led by Pioneer, Occidental and 8 other companies.

Shell

According to Sven Kramer, Shell International E&P Portfolio Manager, attention focused on portfolio management when the 1998 drilling program ‘overspent and under-produced’. A program was initiated to implement ‘cost leadership’ by comparing asset performance. Plots of cumulated project value against cumulative expenditure—the ‘unconstrained creaming curve’—are used to rank opportunities. The analysis showed that some commitment wells can be great ‘destroyers of value’. All Shell units now use the same global standards for data and evaluation. Data is input in standard form to a capital allocation database. Analysis is performed with CapIT—a Shell-tailored edition of Merak. Reporting uses Business Objects. The system is migrating towards the Schlumberger Living Business Plan (LBP) and a central global database.
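The creaming curve idea can be sketched in a few lines. What follows is an illustration under stated assumptions, not Shell's method or anything from CapIT or Merak: opportunities are ranked by value per unit of expenditure (our assumption; the talk did not specify the ranking metric) and then cumulated. Project names and figures are hypothetical.

```python
from itertools import accumulate


def creaming_curve(projects):
    """Rank projects and return the points of a creaming curve.

    projects: iterable of (name, value, cost) tuples, with cost > 0.
    Returns a list of (name, cumulative_cost, cumulative_value), with
    projects ordered by value-to-cost ratio, best first (assumed metric).
    """
    ranked = sorted(projects, key=lambda p: p[1] / p[2], reverse=True)
    cum_cost = accumulate(p[2] for p in ranked)
    cum_value = accumulate(p[1] for p in ranked)
    return [(p[0], c, v) for p, c, v in zip(ranked, cum_cost, cum_value)]


# Hypothetical portfolio: (name, value, expenditure) in arbitrary units.
portfolio = [
    ("commitment well", -20, 40),
    ("infill program", 100, 50),
    ("step-out well", 30, 30),
]

for name, cost, value in creaming_curve(portfolio):
    print(f"{name:16s} cumulative cost {cost:4d}  cumulative value {value:4d}")
```

In this made-up portfolio the curve climbs over the first two projects and then turns down when the commitment well is added, which is the ‘destroyer of value’ effect described above.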

Total

Philippe Baldy traced Total’s two mega mergers—first with Fina, then with Elf—and their impact on data management. Logs were all in LogDB, but with many redundancies. Finder was used for well log reference data. Topographic data was spread over five different systems. A Finder-based navigation/topography fusion project is underway. Moving topography into Finder is a 13 man-year project. Migrating physical asset data will take another 11 man-years. Total now has around a terabyte of data in LogDB; is this a record? Total found that data matching took around 50% of its data management effort and noted that it is hard to eliminate redundancies completely. Total’s vision is to let subsidiaries have responsibility and management of data. Head office manages ‘orphan’ data, key corporate data and sets standards.

Decision Point

Bill Baski asked ‘why choose SIS’ portal solution Decision Point?’ The answer is because of its E&P-oriented workflows and ‘multiple integrated’ domain databases. SIS has a few ‘competitors’ in upstream portals. There are the ‘big four’ systems integrators (Accenture etc.)—but these ‘lack domain expertise’. So you could try internal development—except ‘you don’t have the head count or best practices’. Baski was dismissive of a hypothetical challenge from ‘Bill and Ted’s Excellent Java Shoppe’ but more concerned about ‘confusion’—the ‘real obstacle’ to portal deployment.

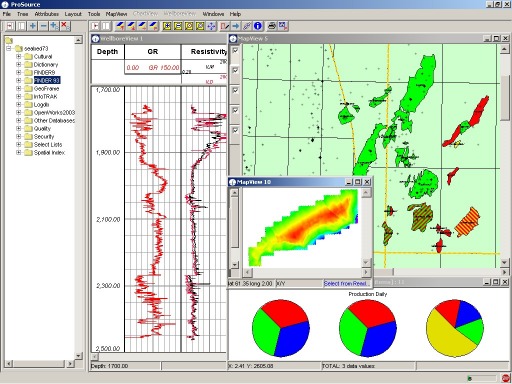

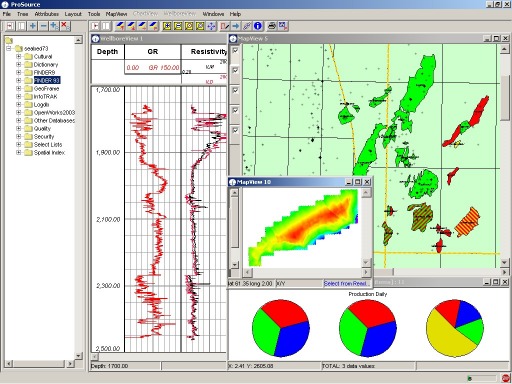

ProSource

Nicholas Lillios introduced ProSource as ‘data management for professional data managers’. ProSource offers a single interface to Finder, GeoFrame, OpenWorks, SeisDB, LogDB, GWIS, InfoTrack, SeisManager and AssetDB. ProSource maps and aggregates information and provides QC, charting options, plotting, data loading and transfer. Written in Java, ProSource is platform independent. ProSource allows for browsing and editing of data in source repositories (i.e. you can change data in, say, a GeoFrame project from ProSource). A demo showed a rather laborious edit of a checkshot survey bust. ProSource sessions can be ‘unplugged’ for offline work—with edits committed on re-connection. Surprisingly, ProSource does not log/track its edits.

eSearch

Steve Darnell (Iron Mountain) and Trey Broussard (SIS) described their new corporate partnership which is to combine eSearch V1.0 with AssetDB V3.1 into eSearch V2.0. eSearch will provide physical and electronic document management with full text search. eSearch development will now be done by Iron Mountain in Java, with a Schlumberger-developed E&P data structure. The tool offers profile-based security with Oracle row-level locking, multiple internal and external storage locations and a published API. A ‘Google-like’ search works across all locations.

InfoTrack

InfoTrack, the ‘Results Database’ stores project milestones and final interpretations—along with interpretation quality and ‘context’. Results are stored in a ‘vendor-neutral, version-neutral’ format. Other components of the new SIS data architecture are the ProSource front-end (see above) and the Integration Framework—a.k.a. the ‘Federator’. The InfoTrack data transfer module (DTM) applies business rules to ensure consistent transfer with automated post processing and logging. A demo involving a DexaNet connection to Stavanger and Houston involved a lot of waiting around for the network and staring at text boxes. Data management is not yet truly sexy!

RPM AI Well Planner

RPM applies what is best described as artificial intelligence to the well planning process, and is also the first showcase for Schlumberger’s Project Ocean—see above. RPM computes well trajectories from a 3D mechanical earth model. Inputs are pore pressure, fracture gradient and rock strength. The program computes multiple casing design scenarios, cement programs, borehole assemblies, bits etc. RPM is stand-alone currently—next year it will run off a brand new ‘Ocean’ database.

Petrel

Petrel continues to impress with spectacular new functionality in seismic visualization and 2D/3D interpretation. Fancy grid editing, contouring and scale maps and montage suggest Petrel has made it to mainstream interpretation. New tools for attribute extraction, geobody extraction and connectivity analysis combine into a veritable tour de force.

Future

SIS has been assailed with queries as to the future of Finder. Dwight Smith spelled it out—‘folks should understand that Finder is not going away and is to remain a key element in SIS’ data strategy’. ProSource and DecisionPoint will ultimately replace Finder’s data management tools—with data access going through the Integration layer. SmartMap will be turned off in two to three years’ time but there will be other SDE-based tools. Finder and GeoFrame data models will be harmonized.

Visualization

3D ‘stand-alone’ visualization remains a hard sell—it’s easier to bundle visualization technology with a product—as in Petrel. SIS’ visualization tactics have been to acquire VoxelVision, to partner with Hue AS (innovative rendering and smooth interaction), OpenInventor and HP/SGI for hardware. Inside Reality offers full immersion and remote collaboration.

This is an edited version of a 10 page, illustrated report from the SIS Miami Forum produced as part of The Data Room’s Technology Watch Reporting Service. For info on this service please email tw@oilit.com.

Adrian Smith is to manage Hampson-Russell’s new Middle East Office in Dubai.

Bill Via has been named VP of Outsourcing and Training Services for Subsurface Consultants & Associates.

IHS Energy has named Bob Lockwood as MD of its restructured global consulting division.

Wood-Mackenzie has appointed Ann-Louise Hittle as Head of Macro Oils in its Boston office.

A2D Technologies has appointed Peter O’Shea VP of International Business Development.

Energy Graphics has released a new version of its Intellex Report E&P data browser and reporting tool. More from energygraphics.com.

International Datashare Corp. and Divestco have completed their merger into the new Divestco.com Inc. company.

Baker Hughes is to record $106 million after-tax charges in the third quarter of 2003 relating to its 30% minority interest in WesternGeco – mostly related to impairment of the multi-client seismic library.

Landmark is to deploy its PetroBank solution in a new National Data Bank for the Kazakhstan Ministry of Energy and Mineral Resources.

Neuralog has announced NeuraView, an entry-level visualization, editing and printing solution for digital logs and large image files.

Liv Mæland presented Statoil’s strategy for management of international data—advocating a single data instance and global accessibility. For successful remobilization of usable data, project storage is critical. “Remobilizing a project can not be based on luck, private contacts or investigative skills.” Statoil demonstrated real-time collaboration on the offshore Ireland Cong well between the Aberdeen data center, Dublin operations and a London OpenWorks database.

Shell

Kolbjørn Skjæveland described Shell’s global reorganization with standard processes, systems and tools deployed across five regions. A redesign of Shell’s European organization is underway based on ‘cross-border optimization, portfolio management and exploiting scale and standardization’. Shell’s E&P information management vision includes a single set of applications and a unique data environment with central data stores served from Amsterdam. Approved applications include OpenWorks, Petrel and Shell in-house tools 123DI and Logic. The data environment leverages PetroBank, OpenWorks and an integration framework based on Schlumberger’s ProSource, PointSource and the new data transfer manager.

ConocoPhillips

Lars Gåseby showed how ConocoPhillips’ Norwegian Onshore Drilling Center (ODC) has moved a large chunk of its real-time drilling decision making onshore. The ODC was used throughout 2002 on Ekofisk drilling operations. By supporting remote decision-making, the ODC enhances safety and operational ‘focus’ while reducing offshore administration, travel and costs. The ODC supports well planning, real-time drilling and reservoir navigation. A fiber link offers very high bandwidth between the ODC and Ekofisk and a 150 Mbit radio link hooks in satellite platforms. Data feeds include audio, video conferencing, rig cameras, smartboard and real-time data.

Petrodata

Current PetroBank projects include a migration to Linux, NetApp Filer integration, a dev kit and management of reference data. The handover of Diskos operations to Schlumberger next year will mark the end of Petrodata’s 8 year involvement in the project. Gunnar Sjøgren traced the project’s history and achievements since 1995. All legacy projects are finished and data reporting procedures are being followed. A web based front end is now operational. PetroBank now holds over 16,000 wells and 65 Terabytes of seismic data. PetroBank is now ‘what we wanted it to be when we started’. New data is being loaded and it has ‘never been easier to get access to the data’ although PetroBank is not yet a tool for the G&G end user.

Syncrude, the world’s largest producer of non-conventional crude oil, is to deploy Plumtree’s Corporate Portal, Collaboration Server and Search Server to support its 5,000 employees, partners and contractors. Syncrude has developed 150 web applications to streamline processes, enhance employee self-service and provide executives with a real-time dashboard of production performance and the cost of Syncrude’s 10,000 daily transactions.

Kiosk

Syncrude reports that the portal has made back-end systems more accessible and easier to use, improving ROI on existing investments and reducing training costs. Syncrude also avoided ‘thousands of dollars’ in anticipated hardware costs by providing kiosk and remote access to the Web portal for plant and field workers rather than personal computers.

Daugela

Darcy Daugela, web services team leader with Syncrude said, “With Plumtree, we have been able to bring together the information that workers need, in a way that makes it quick to find and easy to use. Plumtree has been very popular with IT as well as employees for improving productivity, while reducing the cost of supporting multiple independent systems.”

ExxonMobil hosted the joint industry Geomodeling SBED Phase II steering committee last month. SBED is a process-oriented, stochastic modeling tool. Phase II sets out to incorporate reservoir scale heterogeneity by developing models which honor geologic rules. Shell, Statoil, and ExxonMobil showed how they are using SBED in their upstream workflows.

GeoStudio

Geomodeling demoed a beta release of GeoStudio including 3 deep-water modules. This software allows asset teams to assess the geological risk and its effect on permeability and porosity distribution.

Nordahl

Kjetil Nordahl (Norwegian University of Science and Technology) showed how a realistic near-wellbore model of a heterogeneous reservoir interval can be used to estimate the ‘true’ distribution of petrophysical properties. The technique links petrophysics and sedimentology and highlights errors associated with traditional averaging techniques. More from geomodeling.com.

IHS Energy has just released the 2003 edition of its World Petroleum Trends (WPT) report on E&P activity. Despite reductions in exploration, reserves and new discoveries have outpaced consumption.

Stark

IHS VP Pete Stark summed up the long-term view, “Although new oil discoveries have not come close to replacing demand, the cumulative effect of discoveries plus reserves revisions has more than kept pace with liquids consumption during the past decade.” As of 2003, confirmed liquid resources ‘discovered’ by the end of 1992 were put at 416 billion barrels, i.e. 26% higher than estimated at the time. Likewise, gas resources discovered by the end of 1992 were put at 2,300 TCF, a 36% upward revision.
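The revision percentages imply the size of the earlier estimates. What follows is a minimal sketch of that back-calculation, assuming each quoted percentage is a simple ratio of the current figure to the original one. The function name is illustrative and does not come from the IHS report.

```python
def original_estimate(current: float, upward_revision_pct: float) -> float:
    """Back out an earlier estimate from the current figure and its % upward revision."""
    return current / (1.0 + upward_revision_pct / 100.0)


# Figures quoted in the text; the division is our own illustration.
liquids_1992 = original_estimate(416.0, 26.0)   # billion barrels
gas_1992 = original_estimate(2300.0, 36.0)      # TCF

print(f"Implied 1992 liquids estimate: {liquids_1992:.0f} billion barrels")
print(f"Implied 1992 gas estimate: {gas_1992:.0f} TCF")
```

On these assumptions the earlier estimates come out at roughly 330 billion barrels of liquids and 1,690 TCF of gas.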

Discoveries down

New hydrocarbon discoveries made in 2002 for the world outside North America amount to almost 6.6 billion barrels of liquids and 30 trillion cubic feet (TCF) of gas. 2002 marked the second year in a row when new gas discoveries failed to replace production. Two giant discoveries (over 500 million boe) were made in 2002: Kikeh, an oil discovery offshore Sabah in east Malaysia, and Tomoporo 9, an oil and gas discovery in Venezuela. Eight other discoveries are likely to exceed 200 million boe in India, Venezuela, Brazil, Bolivia, Nigeria and Vietnam.

What’s left?

IHS Energy estimates that, in 2002, remaining global reserves of liquids and gas stood at 1,153 billion barrels and 6,662 TCF, respectively. Reserves to production ratios (R/P) for liquids and gas stood at 43 years and 68 years, respectively. World Petroleum Trends is available for purchase from IHS Energy; contact sales@ihsenergy.com.
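Since R/P is simply remaining reserves divided by annual production, the quoted reserves and ratios imply an annual production rate. The sketch below is our own arithmetic on the published figures, not a number taken from the IHS report, and the function name is hypothetical.

```python
def implied_annual_production(reserves: float, rp_years: float) -> float:
    """R/P = reserves / annual production, so production = reserves / (R/P)."""
    return reserves / rp_years


# Reserves and R/P ratios as quoted in the text.
liquids_per_year = implied_annual_production(1153.0, 43.0)  # billion barrels per year
gas_per_year = implied_annual_production(6662.0, 68.0)      # TCF per year

print(f"Implied liquids production: {liquids_per_year:.1f} billion barrels/year")
print(f"Implied gas production: {gas_per_year:.1f} TCF/year")
```

This works out to roughly 27 billion barrels of liquids and 98 TCF of gas per year.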

IFP

The French Petroleum Institute has also released its annual report on the state of the oil service industry. Worldwide E&P spend increased by 13% in 2001 to a record $120 billion but declined by around 4% in 2002. The IFP expects a new record for worldwide expenditure for 2003—around $125 billion. The increase is due to sustained high oil prices, a better world political outlook and a relatively strong demand. The number of seismic crews operating worldwide continues to decline. The seismic market is still suffering from overcapacity and the main contractors all saw a decline in turnover.

Decline

The IFP estimates worldwide expenditure on geophysical services and equipment at around $4.5 billion in 2002, a decline of 8%. The decline will likely be smaller in 2003 (about 2%, to $4.4 billion) but the outlook is bleak for 2004. Note that seismic acquisition and processing make up around 90% of geophysical expenditure. The IFP also notes the arrival of very competitive Chinese seismic crews which are changing the game for western geophysical companies. Contractors are all implementing restructuring programs with WesternGeco shedding 1,700 employees, Veritas 110, PGS (currently in Chapter 11) 250 and CGG 300. The IFP attributes market share as follows: WesternGeco 32%, CGG and PGS 15% each, Veritas 10%, Input/Output, TGS Nopec and Seitel 3% each. Drilling likewise hit a record in 2001 with a $22 billion spend (up 33%) but this was down 11% to $20 billion in 2002. The IFP study is available (in French) on www.ifp.fr.

Fugro unit Robertson Research International has acquired UK-based SeiScan GeoData (previously the trading division of Petroscan Ltd.). SeiScan specialises in the conversion of hardcopy legacy E&P datasets (specifically seismic, well logs and maps) into modern digital formats, delivering enhanced data as interpretation ready output.

€ 1 million

The company employs seven staff and has a turnover of around € 1 million. Key employees have all agreed to continue with the company. SeiScan brings more than 15 years of software development supporting its regular seismic and well data conditioning services, and the acquisition extends Robertson’s service lines in the management of oil company physical assets. SeiScan will be integrated into Robertson’s Data Solutions group and will continue to trade as an internal division of Robertson Research.

Drilling equipment supplier Weatherford International has selected Riversand Technologies’ Product Content Management (PCM) solution to link suppliers, Weatherford’s internal business units and customers to streamline the management of content aggregation, enrichment and syndication.

Green

Weatherford CIO Steve Green said, “One of our biggest challenges is delivering product information to customers where and when they need it. The Riversand solution will offer on-demand product and pricing information in one central system.”

Automation

Houston-based Riversand’s PCM solution automates and streamlines product information flow and related business processes. These include cost-oriented, supplier-facing interactions and revenue-oriented, customer-facing activity.

Narahari

Riversand president Sashidhar Narahari added, “Weatherford’s product content contributors and subscribers can now manage content at the touch of a button. Syndicating product information on demand will make it easier and more efficient for Weatherford’s customers to do business with them.”

Pipeline data management specialist GeoFields Inc. has announced an upgraded version of its Overland Spread (OLS) Model for pipeline operators. OLS Model version 3.5 enhances the analysis of the impact of potential spills on High Consequence Areas (HCA).

Stream velocity

OLS Model features an improved data set for modeling stream velocity, product spill rates and whether a release could impact an HCA. A conservative ‘worst-case’ approach makes use of rates based on peak stream discharge using 90th percentile statistics.
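As an illustration of the ‘90th percentile’ idea, the sketch below picks a conservative design discharge from a series of annual peak discharges. This is not GeoFields’ implementation: the data values, units and the function name `percentile_90` are hypothetical, and the interpolation method (Python’s ‘inclusive’ quantiles) is an assumption.

```python
from statistics import quantiles


def percentile_90(peak_discharges: list[float]) -> float:
    """90th percentile of peak discharges, using linear interpolation."""
    # quantiles(..., n=10) returns the nine decile cut points;
    # the last cut point is the 90th percentile.
    return quantiles(peak_discharges, n=10, method="inclusive")[-1]


# Hypothetical annual peak stream discharges, in cubic metres per second.
peaks = [12.0, 15.5, 9.8, 22.1, 18.4, 30.2, 14.9, 25.6, 11.3, 19.7, 27.5]

design_discharge = percentile_90(peaks)
print(f"90th percentile peak discharge: {design_discharge:.1f} m3/s")
```

Using a high percentile rather than the mean biases the modeled stream velocity upward, which matches the conservative ‘worst-case’ intent described above.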

Park

GeoFields director Nick Park said, “Customer feedback and the continuing upgrade of our datasets led to improved methods for addressing velocity measurements. With this upgrade, our clients are assured of the best methodology available for determining potential HCA impacts.”

Experts

GeoFields has consulted extensively with leading experts in the fields of surface water transport and engineering hydrology to establish the most valid and reliable methods for modeling hydraulic product transport.

The American Petroleum Institute (API) has responded publicly to the US Minerals Management Service’s (MMS) request for comments on its ‘OCS Connect’ e-government initiative. The API ‘strongly supports’ OCS Connect which will help industry/MMS interactions migrate from paper reporting to an online environment.

Funding

The API requested that the OCS Connect initiative be ‘adequately funded’ to ensure that project goals are achieved. Industry has already invested hundreds of millions of dollars in e-business and it behooves the MMS to make the necessary investments for efficient interactions with industry.

Inconsistent

The API further requested that the MMS evaluate which data types are really required from industry, adding that much of the information currently requested is ‘inconsistent’ and ‘nice to have’ rather than mission-critical to the MMS.

AMEC Engineering has awarded UK-based Tektonisk a £1.2 million contract for the provision of supply chain gathering, validation and handover software and services as part of AMEC’s activity on the $230 million Sakhalin 2 project.

ShareCat

Tektonisk’s ShareCat ProjectArena leverages information from the ShareCat Internet content repository to link information from manufacturers to plant engineering requirements. The software supports communication between project teams and vendors and assures a consolidated source of quality electronic documentation and information.

Mitchell

AMEC information manager Chris Mitchell said, “A complex and geographically dispersed set of equipment vendors, coupled with aggressive schedules led us to consider alternative ways to solve the problems of equipment information management. Also, the client demanded high quality information for efficient asset operation.”

Pestille

Tektonisk marketing director Paul Pestille added, “This is the first award of a major outsourced supply chain information management solution in the oil industry”.

JW Sewall has been quick to leverage the draft release of the ESRI ArcGIS Pipeline Data Model with the release of a Pipeline Data Management Extension (PDME) for ArcGIS. PDME combines the core functionality of ArcGIS with the industry-specific capabilities of Sewall’s pipeline analysis and reporting software.

Geodatabase

The PDME interface gives users the tools to view, analyze, edit, and develop reports from pipeline spatial and attribute data stored in a single-source repository or geodatabase. The software uses familiar, pipeline-specific semantics and nomenclature for database queries and other basic functions.

Centralized

PDME establishes rules and relationships that allow operators to store pipeline spatial data (imagery, graphics, and vectors) and attribute data together in a centralized geodatabase rather than in separate linked systems.

Applications

PDME provides an interface to ArcGIS and to Sewall’s high-end pipeline applications covering alignment sheet generation, class location analysis, and MAOP and high consequence area (HCA) calculation.

Internet SCADA specialist M2M Data Corp. has just released a new satellite-based ‘economy’ system. The new SCADA system combines narrow bandwidth satellite communications with M2M’s compact iAdaptor communications gateway, extending the reach of SCADA monitoring and control into formerly untapped US markets.

Wallace

Donald Wallace, M2M’s COO said, “By bundling key technologies with our turnkey service, SCADA-based remote monitoring and control is now attainable and cost-effective for a large industry segment that had previously been excluded from remote equipment- and device-tracking.”

European

M2M has extended its global reach by tapping into both the European satellite infrastructure and its own telecommunications and data facilities in the US. Use of open standards and the Internet allowed M2M Data Corporation to seamlessly tie together its geographically dispersed infrastructure for the new SCADA system.

Applications

Some of these newly targeted applications include remote meters and pumps, tank batteries, fleet management, HVAC rooftop units, compressors, security systems, traffic lights, weather stations and cell towers, to name a few. M2M was founded in 1997 and is now owned by Electricité de France and four US-based venture capital companies.

Digital Oilfield Inc. has added imaging to its OpenInvoice e-invoicing package, letting operators integrate manually-submitted invoices into the OpenInvoice workflow engine. Invoices are scanned and subsequently join the electronic processing, routing and approval workflows.

Field tickets

Paper invoices and field tickets are scanned and routed to departments or individuals. After coding and approval, invoice information is uploaded to the operating company’s financial system for settlement. Digital Oilfield claims that OpenInvoice is the most widely used e-invoicing technology in oil and gas.

Quorum Business Solutions has released an enhanced version of its Quorum Land package. Quorum Land manages upstream land and leases and is used by 20 Fortune 500 oil and gas producers and operators.

User group

The new release (version 3.0) was developed in collaboration with the Quorum Land User Group. A new tool allows users to import, validate and upload land data acquired through data purchase or corporate acquisitions.

Weidman

Quorum president Paul Weidman said, “Under the ongoing direction of the Quorum Land User Group, our land management software continues to maintain its position as the most comprehensive integrated solution for land management in the energy industry.” Founded in 1998, Quorum now has over 130 staff operating out of offices in Houston, Dallas and Calgary.

Schlumberger is to sell the bulk of its Sema IT consulting unit to Atos Origin in a deal worth approximately $1.5 billion. Schlumberger acquired Sema early in 2001 for $5 billion (see Oil ITJ Vol. 6 N° 2). Schlumberger will retain a number of non-oil and gas specific Sema businesses which are scheduled for divestiture or IPO.

Gould

Additionally, Schlumberger will retain the activity of IT Services for the oil and gas industry. Schlumberger chairman and CEO Andrew Gould said, “Key amongst the techniques required to enhance hydrocarbon recovery will be the information technologies that enable real-time reservoir description, monitoring and management. Schlumberger has the fundamental IT knowledge needed and will address these opportunities through the expanded SIS unit that is part of Schlumberger Oilfield Services. The activities of this unit include technical consulting, information management and Exploration & Production (E&P) software augmented by E&P business process optimization and secure worldwide connectivity from sub-surface to desktop.”

Goode

Subsequent to the acquisition, Peter Goode has been named president of Schlumberger Information Solutions, replacing Ihab Toma who is now heading up the reconstructed consultancy business.

Sweetener

The deal was sweetened with the award to Atos of a substantial, multi-year outsourcing contract for the management of Schlumberger’s IT.

IHS Energy has acquired Luna Innovations’ iMonitoring unit for an undisclosed sum. Luna iMonitoring has developed a line of solar-powered, wireless sensing devices that will allow IHS Energy to augment its popular Web-based well-data collection system with the remote monitoring technology needed to make automation affordable for oil and gas operators of all sizes.

McCrory

IHS president Mike McCrory said, “Luna’s innovations will help us rewrite the economics of well automation, extending the benefits of daily well performance monitoring to the vast majority of onshore oil and gas wells.”

Ferris

iMonitoring CEO Ken Ferris added, “We have been field testing and fine-tuning this offering, tailoring it to the needs of the oil and gas field operator. By setting our sights on the economics of the average well, we’ve been able to significantly lower the costs of both installation and ongoing monitoring.”

FieldDirect

IHS is integrating iMonitoring’s intelligent metering devices with its FieldDirect web-based data collection service. Field-testing of these technologies will continue as iMonitoring’s staff joins the IHS Energy team. FieldDirect brings ‘e-field’ type operations to stripper well operators. Engineers and managers can monitor wells and plan field operations from the office.

In a new study, Wood Mackenzie seeks answers to the question “Do upstream acquisitions add value and reserves?” The study, ‘Value Creation through Acquisitions’, analyzed 170 international deals done by 25 companies.

Black

WoodMac director David Black explained, “The 25 companies in the study group spent over $140 billion on international acquisitions between 1996 and 2002, equivalent to around $180 billion in today’s money. Overall these deals resulted in a total value creation of $23 billion net of the acquisition expenditure.” Black commented, “In absolute terms, the companies with the biggest acquisition spending, such as BP, Total and ExxonMobil, have seen the largest value creation, much of it from the mega mergers that the industry has seen in recent years. Occidental is the top performer from the integrated and E&P company peer group, with BG, Talisman and Marathon also performing well.”

Marathon

Marathon and Talisman have managed to achieve a rate of return of nearly 20% on their acquisition expenditure. Another four companies have made a return on their acquisition expenditure of around 14% nominal or better.

Flunked

Nine companies flunked the WoodMac test, having acquired assets which are now worth less than their cost.

Oil price

Most interestingly, the study found that, taking all the deals together, a rising oil price accounted for around $25 billion of value creation in NPV terms. In other words, without the impact of the oil price there would have been little or no value created from these deals in aggregate. The full study is available from www.woodmac.com.
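The ‘little or no value’ conclusion can be checked with simple arithmetic on the figures quoted above. This is a rough sketch, assuming the $23 billion of net value creation and the $25 billion oil price effect are measured on a comparable basis. The variable names and the value-to-spend ratio are our own illustration, not part of the Wood Mackenzie study.

```python
# Figures quoted in the article, in $ billion.
spend_today = 180.0       # acquisition spend restated in today's money
value_created = 23.0      # total value creation, net of acquisition expenditure
oil_price_effect = 25.0   # value creation attributed to the rising oil price

# Value creation left over once the oil price effect is stripped out.
value_ex_oil_price = value_created - oil_price_effect

# Net value created per dollar of acquisition spend (illustrative ratio only).
value_ratio = value_created / spend_today

print(f"Value creation excluding oil price: {value_ex_oil_price:+.0f} $ billion")
print(f"Net value created as share of spend: {value_ratio:.1%}")
```

On these numbers the deals come out around $2 billion negative in aggregate once the oil price is removed, consistent with the study’s reading.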