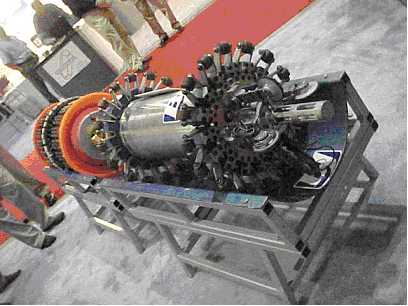

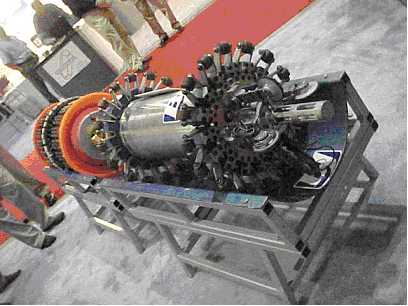

BJ Services Vectra Magnetic Flux Pig

We gave Petrel ‘star of the show’ status at last year’s SPE ACTE and it appears that Schlumberger’s acquisitions department agreed! Schlumberger Information Solutions (SIS) has just announced that it is in the process of acquiring Technoguide’s software business—which comprises what is now known as Petrel Workflow Tools.

Grimnes

Jan Grimnes, Technoguide president and CEO said of the deal, “Through this combined offering, a much wider range of our clients’ personnel can build a model in a day and make updates in minutes. This takes reservoir modeling mainstream. Our clients can also rely on the support and consulting capability of the global SIS organization.”

Toma

SIS president Ihab Toma added “By complementing the SIS leadership position in seismic and simulation with the modeling and workflow management strengths brought by Technoguide, we will provide our clients with the ability to have rapidly updateable and dependable models on which to base their business decisions—the cornerstone of real-time reservoir management.”

Living Model

Toma continues, “Being able to continuously update the model brings the reservoir to life for fast, dynamic and complete reservoir management. We call this the ‘Living Model.’ Users of the Living Model have the unique capability to span the breadth of reservoir characterization—from seismic to simulation—and dive into the details when it counts. The ability to model rapidly, as well as perform risk and uncertainty evaluation, allows users at all levels to build dependable models and instantly update decisions with the latest information throughout the life of the asset.”

Competition?

Before the deal, Oil IT Journal learned of Petrel’s intent to continue its move into the interpretation suite space—with enhanced seismic and geological interpretation capabilities. Petrel was shaping up as a head-on competitor for SIS’ GeoFrame-based product line.

Comment

Not long ago oil companies were bent on ‘software rationalization’ for cost savings and efficiencies. Now, SIS has at least three tools for seismic interpretation alone—making for a seeming embarrassment of software riches for its clients!

Houston-based Ovation Data Services, Inc. (Ovation) is to purchase a majority interest in DPTS Limited (DPTS) of Orpington, UK. Both Ovation and DPTS provide data transcription services and digital asset management and storage systems.

Servos

Ovation president and CEO Gary Servos said, “The acquisition strengthens our European presence in the exploration and production industry and will enable us to expand into new markets. High quality customer service has always been our priority—with DPTS, we strengthen our ability to provide quality services utilizing state-of-the-art technologies as well as maintaining and supporting the plethora of older technologies.”

Rationalization

The deal brings a degree of rationalization to the difficult seismic transcription and tape storage markets. The DPTS name will likely be kept on—reflecting good brand recognition in the UK. DPTS was bought out by its management last year—see Oil IT Journal Vol. 6 N° 12.

Traipsing around the tradeshows can bring some serendipitous insights to those who withstand the partying. This month’s tale is a foretaste of our comprehensive reporting from the twin conventions (Society of Petroleum Engineers (SPE) and Society of Exploration Geophysicists (SEG)) which we will bring you in next month’s Oil IT Journal. But just to lift the veil a little I’d like to relate a moment of puzzlement I experienced while listening to the very grand presentation given in San Antonio by Schlumberger and Intel on a new joint development destined to revolutionize reservoir simulation.

IT stack

My incomprehension came from the fact that Schlumberger is an application software vendor and Intel is a chip manufacturer. They come from opposite ends of the IT stack—and I could not see what was in it for Intel (whose business model is to sell chips by the container-load) or for that matter, Schlumberger, whose flagship Eclipse application used to be so device independent that it would run on anything from a Cray to a toaster—at least that is how I remember the marketing material circa 1985.

Hoo-ha

The focus of all the marketing hoo-ha was of course the Linux cluster, and before you jump to the conclusion that Intel will be filling the containers with chips destined for cluster-based reservoir simulators, let me disabuse you. The particular nature of reservoir simulation means that a ‘cluster’ today comprises 16 CPU’s. A 32 CPU cluster will be available ‘real soon now’.

Eclipse

I pressed the folks on the Eclipse booth as to what exactly would be the program at the new Schlumberger-Intel research establishment—based next door to the Schlumberger ECL unit in Abingdon, UK. I asked what language Eclipse is written in. I was bowled-over to learn that Eclipse is written in that oldest of programming languages, Fortran! This was something of an epiphany for me in two ways. First I understood that the collaboration was not focused on redesigning the Intel microprocessor (that would really be the tail wagging the dog) but on tuning Intel’s Linux Fortran compiler. Second, it explained why the marketing department just had to steer clear of the real subject. What marketing person could possibly admit in today’s Java and object-oriented world that their software is developed in Fortran!

SEG

I left the SPE and headed on to the SEG armed with my little discovery but still wondering what all the Linux fuss was about. In Salt Lake City I ran into yet more Linux propaganda—from Landmark, Paradigm, Magic Earth (running on a one CPU ‘cluster’), WesternGeco—and listened to amazing tales of total cost of ownership and of performance. Seemingly virtually any upstream software runs 10 times faster on Linux than on Unix (but I thought Linux was Unix). Indeed the one growth part of the geophysics business is the 19” rack full of Intel (or AMD)-based PC’s. Geophysical processing is truly amenable to clustering and some mind-boggling CPU counts—up to 10,000 at one location—were mentioned. But I digress.

Future computing

At the SEG’s afternoon ‘The Leading Edge’ Forum on Future computing, industry experts pontificated on where geophysical IT was heading in the next few years. Again, we’ll bring you our report next month—but for the purposes of this editorial, I must confess that after a couple of hours of listening to stuff about Moore’s ‘law,’ Linux clusters and Java I was moved to intervene. “What about the algorithms?” I blurted! This was met with more entreaties to use Java and C++. “What about Fortran?” I spluttered! This seemed to catch the experts on the hop. I concluded that the “F” word is generally considered inadmissible in good company. ‘Object-oriented, Java’ are the things to talk about—but generally, you are on safer ground if you stick with the whiz-bang of TeraFlops and MegaPixels rather than programming paradigms. This I find puzzling since it has been reported elsewhere that progress in computing, for instance in sorting algorithms or code cracking, comes almost equally from speeding-up the hardware and improving the algorithm.

Comp.lang.fortran

When I got back home I decided to do some more research on Fortran in action. I quizzed the Usenet newsgroup comp.lang.fortran (about as active a community as sci.geo.petroleum incidentally) and would like to hereby express my greatest thanks for their cooperation. I first learned that contrary to the received view, Fortran is not a ‘legacy’ language—but a performant, widely-used tool for writing numerical applications—such as reservoir simulation, seismic processing, meteorology, engineering, oceanography and nuclear reactor safety. Fortran remains popular because of its built-in support for multi-dimensional arrays and its portability (could this be why so many apps have popped up on Linux recently?). But most of all, Fortran’s success is due to its accessibility. Engineers and scientists have enough intellectual challenges in their core business without having to wrestle with such elementary features as arrays.

Crunch-crunch

A closing thought—just how popular is Fortran? My guess/contention is that Fortran code probably represents a majority of world-wide CPU clock cycles. At least those that are, as it were, computed in anger—we might have to exclude the zillions of near-idle PC’s, waiting for the odd mouse click. In terms of real number crunching, climate forecasting code that uses over a million CPU hours in one run takes some beating. So for that matter does a 10,000 CPU seismic processing cluster running round the clock.

The outsourcing bandwagon is gathering speed as companies realize that it’s often more cost-effective to cede control of certain functions to a specialist third party, letting them concentrate on their core competencies. Tom Peters’ business mantra that “you’re a fool if you own it” now reverberates in boardrooms the world over. Over the past half decade, in manufacturing facilities, management skills or software, the market for ‘renting’ business elements has grown into a multi-billion dollar industry. A move to outsourcing frees up internal resources to focus on winning and retaining business, provides more flexibility and offers a variable cost base. Companies get greater access to a wider resource base and more skill sets, not to mention the economies of scale derived from effective outsourcing.

Utopia?

In business utopia, this would ensure we all live profitably ever after. But things don’t work out like that. Any partnership depends on compatibility of needs, culture, cost and other variables. What might be right for one may not be for others. The key is to strike an effective balance. What makes a successful relationship? To investigate this, let’s take a look at two models—the ‘contract model’ and the ‘risk-reward’ model.

Contract model

A typical contract model for IT outsourcing involves a fixed-base charge for a defined scope. Objectives are set at the outset and the supplier is expected to deliver on time and to budget. As the market is continually evolving, the initial scope is likely to change. Any changes throughout the term of the contract are handled on a fixed unit charge basis. But this creates adversity and limits flexibility. It doesn’t leave much room for maneuver. Incentives may even encourage the supplier to increase costs and usually fail to improve services or evolve technology. This model can work, but is most likely to be used for ‘commodity’ services.

Commitment

But there is something lacking in the contract model. Outsourcing relationships should be more than just a contract. In a truly two-way relationship, there is an unspoken commitment to work through any hard or tricky issues that arise. A partnership based on trust produces win-win behavior and provides mutual benefits through the alignment of business objectives. An outsourcing relationship must be flexible in order to handle changes as the businesses evolve.

Risk-reward

An alternative outsourcing arrangement involves setting mutually agreed targets and incentives to encourage teams or individuals to exceed their personal objectives. Good performance is rewarded and both teams are continuously motivated. An open book, or risk-reward agreement, where there is transparency in what both parties are doing, is far more likely to succeed than one where some elements are kept behind closed doors. In fixed-price contracts all savings become profit to the contractor. However, in target-based risk-reward, the savings and benefits are shared.

How it works

An open, cost-based contract providing a basic set of services is agreed upon and a target cost with a base fee is estimated for the activity involved. As an incentive, share ratios are then negotiated for over-run and under-run of the cost target. Clients can also offer to pay additional incentive fees to encourage certain behaviors - for example, delivery in line with agreed SLAs, service and technology innovation, customer satisfaction, co-operation with other suppliers, benchmarked performance against market metrics and seamless delivery during transition.
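The target-cost arithmetic described above can be sketched in a few lines. This is only an illustration under stated assumptions: the class and function names, the 50/50 share ratios and all the figures are hypothetical, and real contracts will define over-run and under-run sharing in their own terms.

```python
from dataclasses import dataclass


@dataclass
class RiskRewardTerms:
    """Hypothetical contract terms; names and structure are illustrative only."""
    target_cost: float     # agreed target cost for the period
    base_fee: float        # supplier's fee if actual cost equals the target
    underrun_share: float  # supplier's share (0..1) of savings below target
    overrun_share: float   # supplier's share (0..1) of costs above target


def settle(terms: RiskRewardTerms, actual_cost: float,
           incentive_fees: float = 0.0) -> dict:
    """Compute the supplier's fee and the client's total payment for one period."""
    # Positive variance is an under-run (saving), negative is an over-run.
    variance = terms.target_cost - actual_cost
    if variance >= 0:
        adjustment = terms.underrun_share * variance
    else:
        adjustment = terms.overrun_share * variance  # negative: fee is reduced
    supplier_fee = terms.base_fee + adjustment + incentive_fees
    # Open book: the client reimburses actual cost and pays the adjusted fee.
    return {"supplier_fee": supplier_fee,
            "client_payment": actual_cost + supplier_fee}


if __name__ == "__main__":
    terms = RiskRewardTerms(target_cost=1000.0, base_fee=100.0,
                            underrun_share=0.5, overrun_share=0.5)
    print(settle(terms, 900.0))   # under-run: saving is split between the parties
    print(settle(terms, 1100.0))  # over-run: supplier absorbs half of the excess
```

With these assumed numbers, a 100-unit under-run raises the supplier's fee by 50 and lowers the client's total bill by 50 relative to an on-target year, which is the shared-savings behavior the risk-reward model aims for.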

Penalties

Often a certain amount of money is deducted from the overall sum for every day that a project runs past its target date, although this type of contract doesn’t rely on penalties. In fact, neither party wants to enforce or incur them; that would be missing the point. They are there purely as insurance, so any company that feels unable to hit targets should either negotiate harder or decline the contract.

Targets

For this model to work, it is important that annual target prices are set. For example, the target for Year 1 is based on the actual cost history and current budget information provided by the client and verified in due diligence. This approach allows a much faster start to service provision than a typical contract.

Open book

The partnership model is based on open book accounting. This means the client is free to audit the account records and there are no secrets between the two parties. This is essential to success, and ensures that trust can be won by proving yourself in a totally transparent manner. The outsourcing scenario will also include meeting SLAs, customer satisfaction surveys, IT and business management reviews, overall quality, innovation, co-operation and risk.

ROI

Incentive-based (not just penalty-based) contracts of this sort are designed to minimize risk and maximize results. Good communications and sound working relationships are key. Knowing where both parties stand provides a solid basis from which to move onwards and upwards. In an unstable economic environment, this is as near to guaranteed performance and return on investment as you’re likely to get.

Taxonomy

Glenn Mansfield described Flare Consultant’s work for Shell Expro. As teams collaborate and work sequentially on data, Shell has found a need to ‘pass the baton’ effectively from one workgroup to another. Shell is defining best practices which are shaped by, and embedded in company policy, before being made accessible to all. A spin-off from this project is a new upstream taxonomy—implemented in conformance with the Dublin Core Metadata XML. This has been developed to associate concepts—so that a search for ‘PVT’ will bring up a list of related terminology for further search. The results of the Shell project were presented during two recent workshops hosted by POSC.
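To make the ‘related terminology’ idea concrete, here is a minimal sketch of a term-association lookup. It is not the Shell or Flare implementation: the function names and the sample term pairs are hypothetical, chosen only to show how a search term can be expanded into associated concepts.

```python
from collections import defaultdict


def build_index(pairs):
    """Build a symmetric term-association index from (term, related_term) pairs."""
    index = defaultdict(set)
    for a, b in pairs:
        index[a.lower()].add(b)
        index[b.lower()].add(a)
    return index


def related_terms(index, term):
    """Return the terms associated with `term`, in sorted order."""
    return sorted(index.get(term.lower(), set()))


# Hypothetical associations, for illustration only.
PAIRS = [
    ("PVT", "bubble point"),
    ("PVT", "formation volume factor"),
    ("bubble point", "saturation pressure"),
]

if __name__ == "__main__":
    index = build_index(PAIRS)
    print(related_terms(index, "PVT"))
```

Storing the associations symmetrically means a user who starts from ‘bubble point’ is led back to ‘PVT’ as well as onward to narrower terms, which suits the ‘further search’ workflow described above.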

Global Information Links

Mike Craig (N. America Web Champion with ChevronTexaco (CT)) described how CT has standardized on an HP desktop and bought 32,000 units for world-wide deployment. All desktops are standardized so that a worker has the same software in Kazakhstan or in New Orleans. Users can add on and self-manage specific tools—all of which are tested by CT before acceptance. An estimated $50 million in support costs has been saved. The number of applications supported is down from around 5,000 to 1,200. Remote access is provided by NetGIL, a CT developed interface using Citrix MetaFrame. Smart card security is under development.

Real-time learning

Steve Abernathy is director of Human Resources with Halliburton Energy Services Group. Halliburton has a huge turnover in personnel. In 1998, 4000 were laid off while in 2000, 6000 were hired! With a deep offshore rig costing $300k per day, ‘on the job’ training is no longer an option so Halliburton has been developing simulators for tasks like coiled tubing drilling, and sand wash. Another project is ‘Chunk-IT’—described as 24x7 learning using a ‘blended model’ of instructor-led work, virtual work and e-learning. Halliburton’s 28,000 online courses have saved $4 million on travel expenses alone. Thousands of hours of productivity have been ‘handed back to the organization’.

The e-station

Michael Williamson (IBM) described this pilot done for Shell’s US retail arm. The e-station connects Shell’s gas stations to a central IT site over VSA Hughes satellites for data exchange and credit card transactions. The e-station pilot tracks electricity use—spotting faulty air conditioning, or open fridge doors, and lights that go on too soon or off too late. Key technologies include IBM’s WebSphere, Silicon Energy’s Real Time database and application server, Echelon’s LonWorks Energy Management package, a wireless system from Graviton and OSGI standard networks.

The Living Business Plan

Scott Reid (Schlumberger – Merak) described a project carried out for Unocal. Unocal used to perform volumetrics and reserve estimates using spreadsheets, linked to a diverse software portfolio and involving a lot of error-prone re-keying of data. Schlumberger Information Solutions stepped in with a workflow called the Living Business Plan (LBP). The LBP is a “complete foundation for the alignment of corporate goals throughout the organization.” For Shell, the LBP has refined the business planning process in SIEP, Oman, Nigeria and in the USA. Tools used include SAP and Merak’s risk portfolio management. Service offerings include customized workflows; infrastructure comes from LiveQuest and eMarketPlace.

Refinery IT

Nirmal Dutta (ExxonMobil (EM)) described how real time knowledge of what is happening at both ends of a pipeline is key to EM’s Cerro Negro heavy crude project in Venezuela. Heavy crude (8° API) is diluted with naphtha and piped 320 km to a syncrude plant. EM’s Integrated Refinery Information System (IRIS) instrumentation accepts data from digital and analog sensors and offers voice and data integration. An ICIMS-compliant document management system and a CMMS-compliant maintenance system were linked to ERP from JD Edwards. Operating costs were lowered significantly as data was only entered once—and flowed from control system into applications.

AI for text searching

Michael Massey (Pliant Technologies) presented Pliant’s SourceWare which applies Artificial Intelligence to text search and reconstruction (on poor quality scanned forms). The technique is variously described as AI, fuzzy logic, an ‘inference engine’ and ‘beyond taxonomy’. Searching a dataset of 12 million medical abstracts for ‘kidney’ brought hits on ‘renal’ and other misspelt versions like ‘kideney.’
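The ‘kidney’ example combines two distinct behaviors: tolerance of misspellings and awareness of synonyms. The sketch below shows one simple way to get both, using Python’s standard `difflib` for approximate string matching plus a small synonym table. It makes no claim to reflect how SourceWare works; the synonym table, the function name and the 0.8 similarity cutoff are all assumptions made for illustration.

```python
import difflib

# Hypothetical synonym table; a real system would draw on a thesaurus.
SYNONYMS = {"kidney": {"renal"}}


def matches(query, text, cutoff=0.8):
    """Return True if any word in `text` is close to `query` or to a synonym."""
    targets = sorted({query.lower()} | SYNONYMS.get(query.lower(), set()))
    for raw in text.split():
        word = raw.strip(".,;:()").lower()
        # get_close_matches ranks candidates by SequenceMatcher similarity
        # and drops any that score below `cutoff`.
        if difflib.get_close_matches(word, targets, n=1, cutoff=cutoff):
            return True
    return False


if __name__ == "__main__":
    for abstract in ("Chronic kideney disease",
                     "Acute renal failure",
                     "Fractured femur"):
        print(abstract, "->", matches("kidney", abstract))
```

Spelling similarity alone would never connect ‘kidney’ to ‘renal’, which is why the synonym lookup is kept separate from the fuzzy comparison.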

LINUX Seismic Processing

Jeff Davis (Amerada Hess) described how cost cutting has hurt Hess’ IT budget. The company still likes to do its own seismic processing – leveraging the talents of two PhD seismic specialists. Hess’ legacy code – now 18 years old – has been re-compiled to run on new Linux clusters. Paralogic helped out with Linux cluster-based IT. Generally, to survive cost cutting, Hess’ high performance computing team has been reduced to ‘skunk work’ – using old computers that were lying around – or buying second hand machines on e-Bay!

E-Business Security

Will Morse (Anadarko Petroleum) listed ways of hardening systems to make them resistant to attack by hackers. Mostly this entails keeping security patches up to date, turning off services you don’t use and proper management of passwords. Morse preaches in favor of defense in depth, “A firewall is just the start!” and advocates running each task (mail, FTP, etc.) on a separate, dedicated machine. A comprehensive solution to security is required but ‘folks are in denial.’ Morse cited IDC security officer John Gantz who observed “Despite 9/11, corporations see security as an IT function, not a business issue.” Education of managers and users as to the risks and remedies is the key. Everyone should be prepared—not for ‘if,’ but ‘when’ you are hacked!

This article is a shortened version of a five page report on the SRI Conference available as part of The Data Room’s Technology Watch Service. For more info on this, email tw@oilit.com.

Landmark Graphics Corp. has just released PowerView for SeisWorks, an integrated visualization and analysis tool that enables asset teams to view 4D seismic, multiple 3D seismic surveys and seismic attributes concurrently. The PowerView visualization environment enables SeisWorks users to interpret time-lapse 3-D seismic and multi-attribute data more efficiently.

Lane

Andy Lane, Landmark’s president and CEO said, “Today’s seismic interpreters are constantly challenged to improve productivity in the face of continuously mounting volumes of seismic data, a growing variety of data types and increasingly complex reservoirs. Landmark continues to create innovative technologies like SeisWorks PowerView to help our customers meet these challenges.”

Concurrent

Landmark’s PowerView is built on the traditional map-viewing paradigm. Concurrent and interactively linked views of multiple data sets and attribute types enhance interpreter productivity and accuracy. Interpreters can view multiple reservoir images simultaneously, revealing geologic relationships not discovered in conventional interpretation workflows.

Key Energy Services, Inc. (KES), described as ‘the world’s largest onshore, rig-based well service company,’ is to deploy software from Siebel Systems, Inc. and MDSI in its field operations. KES has selected Siebel’s eOil and Gas 7 and Mobile Data Solutions Inc. (MDSI) to consolidate its back office systems and workflow processes from 150 locations into a centralized solution. KES CIO John Hood said, “Using the advanced Web architecture of Siebel Field Service 7 along with MDSI’s Ideligo, we can provide service personnel with the real-time support they need to deliver rapid, high-quality customer service.”

Backer

Simon Backer, senior VP wireless services at MDSI said, “Our Ideligo solution delivers proven, certified integration and offers a highly scalable application to better meet our customers’ enhanced field service requirement.”

Anadarko Petroleum has contracted Landmark to host an integrated suite of seismic and geological software applications, utilities and subsurface data for a deep-water Gulf of Mexico project. This project represents one of the first times that asset teams will work together in real-time from different locations while working on the same data sets and applications hosted in a central site.

Emme

Jim Emme, Anadarko VP said, “The Gulf of Mexico is an important exploration area for Anadarko and we're continually searching for the best tools and technology that will help us unlock new discoveries. This new technology illustrates how we hope to dramatically improve the way we work together with our partners and share data across the industry in order to overcome the challenges of exploration.”

Accenture

Landmark and Accenture have cooperated on the real time asset management center—which was demonstrated in spectacular style at the San Antonio SPE ACTE this month.

Halliburton unit Landmark Graphics has announced the release of OpenWire – its new technology for moving real-time drilling data such as MWD/LWD, into OpenWorks. OpenWire uses the embryonic Wellsite Information Transfer Standard Markup Language (WITSML) to offer an open interface to data from any drilling service provider.

NPSi

Landmark acquired its WITSML technology from Houston-based NPSi. NPSi was contracted by BP, Statoil, Shell and other companies to evolve the original binary WITS standard into a modern XML format. XML promises greater portability, and flexibility in data transfer.

Statoil

Statoil senior system consultant Frode Lande said, “Statoil has been participating as a partner and test site for OpenWire during the entire development phase, and we find it to be a very useful tool. Seamless import of real time rig-site data into OpenWorks makes OpenWire an important component of the drilling work process. Because OpenWire is built on WITSML, it is a future-oriented tool and can be used against WITSML servers from any vendor—an important consideration given the multiplicity of service providers at the rig site.”

POSC

Custodianship of the new WITSML standard was handed over to the Petrotechnical Open Software Corporation (POSC) at the POSC fall member meeting in London this month.

The GITA GIS for Oil & Gas conference, held in Houston was well attended with nearly 600 registered and some 50 exhibitors. Two things are changing the way the US pipeline industry manages its data. Regulatory pressure is mandating pipeline inspection programs. These are designed initially to locate ‘high consequence areas’ (HCA) where there is a risk of significant environmental damage in the event of a spill. Operators are then expected to assess pipeline integrity using a variety of measurements—such as those available from modern ‘intelligent pigging systems.’ Ultimately, the locations of HCAs and the pipeline integrity will be used to design and implement a pipeline maintenance program.

GIS—the killer app!

The pipeline industry is a natural for geographic information systems. The traditional representation of a pipeline is the ‘alignment sheet’—a drafted document showing the route of the pipe on a map along with cultural information and engineering data. The alignment sheet has proved very amenable to computerization and many new programs are leveraging geographical information systems to the full. The computerized alignment sheet shows how GIS can truly qualify as the pipeline industry’s “killer app.”

Pipeline Integrity

Ed Wiegele (MJ Harden Associates) reports that, “Folks in the field are crying out for GIS-based information.” The basic idea is to be able to collect measurements into a central database and re-use it for different aspects of the business. Data should flow seamlessly in and out of the facilities data model to users. The alignment sheet of the past allowed a ‘canned’ view of 6 miles of information at a time. Now all the data is in the database. Ultimately, it will be possible to automate much if not all Department of Transportation (DOT) annual reporting. “Unfortunately there is more than one data model.”

Risk Analysis

Jerry Rau (CMS Energy) defined risk for the pipeline industry as the product of the probability of failure occurring and the consequence of such failure. Data visibility underpins the operator’s risk mitigation strategy. CMS uses ‘dynamic segmentation’ of pipes—risk is computed continually along the pipeline—a technique which is considered more representative than calculations performed on fixed-length segments. Bruce Nelson (Montana-Dakota Utilities Co.) outlined current and future regulatory pressures which are impacting operators. The Office of Pipeline Safety (OPS) is about to extend its pipeline integrity management program requirements to gas transmission lines. Geographical Information Systems will play a key role in managing such programs. MDU’s GIS extends the PODS data model with an ESRI GeoDatabase developed by James W. Sewall Co. The new gas rule “will make the liquids rule look like a child’s story book.” Along with traditional in-line inspections and high tech intelligent pigging, Nelson advocates interviews with field employees who have lived and worked on the pipeline for years.
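As a rough illustration of risk as probability times consequence combined with dynamic segmentation, the sketch below splits a line wherever either attribute changes and computes risk for each resulting segment. It is not CMS Energy’s method: the layer format (start, end, value), the function names and the sample figures are assumptions, and both layers are assumed to cover the same stationing range without gaps.

```python
def value_at(layer, station):
    """Look up the attribute value covering `station` in a list of (start, end, value)."""
    for start, end, value in layer:
        if start <= station < end:
            return value
    raise ValueError(f"station {station} is not covered by the layer")


def dynamic_segments(prob_layer, cons_layer):
    """Segment the line at every attribute change and compute risk = P x C."""
    # The union of all breakpoints defines the dynamic segments.
    breaks = sorted({p for s, e, _ in prob_layer + cons_layer for p in (s, e)})
    segments = []
    for start, end in zip(breaks, breaks[1:]):
        mid = (start + end) / 2  # attributes are constant within a segment
        risk = value_at(prob_layer, mid) * value_at(cons_layer, mid)
        segments.append((start, end, risk))
    return segments


# Hypothetical layers: stationing in miles, annual failure probability,
# and consequence in arbitrary cost units.
PROBABILITY = [(0, 4, 0.01), (4, 10, 0.02)]
CONSEQUENCE = [(0, 6, 100.0), (6, 10, 500.0)]

if __name__ == "__main__":
    for start, end, risk in dynamic_segments(PROBABILITY, CONSEQUENCE):
        print(f"{start}-{end}: risk {risk:.2f}")
```

Because segment boundaries follow the data rather than a fixed length, a short high-consequence stretch is not averaged away inside a longer, mostly benign segment.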

Handhelds in field

Ed Wiegele (MJ Harden) had a full house for the popular subject of handheld computing in the field. Wiegele noted that ‘fixing’ data after the fact is expensive, so it is preferable to give tools to field workers to let them perform quality data capture on the job. A subset of the ISAT database is copied to the handheld to ensure that feature types, code lists and symbologies etc. are recorded consistently. Data volumes are ‘no problem’ - 1 GB of storage for a Compaq iPAQ costing around $400.

Pipeline Safety

Mike Israni of the Office of Pipeline Safety outlined the current legislative framework. Initially, the requirement is to determine the location of high consequence areas (HCA). Subsequently an integrity management program will be developed to identify and evaluate threats. The next step is to select assessment technologies, perform risk assessment and finally, to undertake continuous evaluation and integrity management. Part of the OPS’ effort has been to revitalize the National Pipeline Mapping System. This used to be a voluntary system but will become mandatory under the new legislation. A facet of the NPMS is the Pipeline Integrity Management Mapping Application—a web-based tool available to operators, federal, state and local government.

Risk Assessment

John Beets (MJ Harden) showed how tornado plots and dynamic segmentation are used to characterize risk. The MJH risk assessment model is due to John Kiefner & Associates and is a prioritization tool to optimize spending on safety. Beets estimates that 80-90% of risk assessment effort is spent on data collection.

Sheet-less

Ron Brush (New Century Software) believes there are two entrenched camps when it comes to the merits of the traditional alignment sheet (AS). On the one hand there is the ‘we don’t need it at all,’ GIS brigade – while others cannot do without it. There are ‘no sitters on the fence’. The AS is reliable and accessible—but update, distribution and search are problematical. Brush’s thesis is that as new technologies are deployed—offering increased connectivity to the field worker, the move to databased, AS-less systems is inevitable. Technologies to watch include wireless, Trimble’s GeoExplorer, WinCE, GPS and WAAS (the Wide Area Augmentation System for aircraft navigation), offering metric accuracy. Other technologies which may facilitate an AS-less world include e-paper such as Xerox’ Gyricon and E-Ink. Brush polled the audience to see how many believed they would be alignment-sheetless in five years’ time: 24 believed they would be able to go without the AS, but 54 thought not.

EU Perspective

Edgar Sweet (Intergraph) offered a European perspective. The EU Gas Market changed following the 1998 Natural Gas Directive. This set the framework for free movement of natural gas, safety of supply, licensing for supply, transmission, storage and distribution. The directive intended to liberalize the EU gas market – an ambitious program for 4 years. There has been a lot of merger and acquisition activity as companies jockey for position in the newly deregulated market. This impacts the IT industry and argues in favor of enterprise-wide IT architectures, integrated applications, web and geospatial enablement. “GIS is an expectation”. A PwC study found that GIS was important in competitive differentiation, in facilitating outsourcing and supporting compliance.

Spill modeling

If there was any doubt as to the ‘killer app’ status of GIS in the pipeline industry it was dispelled by Nick Park’s (GeoFields) presentation on spill modeling. GeoFields uses a digital terrain model – obtained from maps, stereo photos, satellite data or LIDAR. Release volumes are computed as a function of terrain – with pooling in valleys, surplus volumes and pressure check valves. Hydrology data can be input or computed from elevation data. The results are plotted on a base map with population and geographical features using ArcScene and ArcInfo. By tracing spills downstream, high consequence areas at some distance from the pipe can be localized.
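The downstream-tracing step can be illustrated with a steepest-descent walk over a gridded elevation model. This is a deliberately simplified sketch and not GeoFields’ algorithm: it ignores release volume, pooling capacity, valves and hydrology, and the grid, function name and HCA cell are invented for the example.

```python
def trace_downhill(dem, start):
    """Follow the steepest descent from `start` (row, col) until a local low is reached."""
    rows, cols = len(dem), len(dem[0])
    r, c = start
    path = [(r, c)]
    while True:
        best = (dem[r][c], r, c)
        # Examine the eight neighbouring cells and keep the lowest one.
        for dr in (-1, 0, 1):
            for dc in (-1, 0, 1):
                nr, nc = r + dr, c + dc
                if (dr or dc) and 0 <= nr < rows and 0 <= nc < cols:
                    if dem[nr][nc] < best[0]:
                        best = (dem[nr][nc], nr, nc)
        if (best[1], best[2]) == (r, c):
            return path  # no lower neighbour: the spill would pool here
        r, c = best[1], best[2]
        path.append((r, c))


# Hypothetical 3x3 elevation grid (arbitrary units) and one HCA cell.
DEM = [
    [9, 8, 7],
    [8, 6, 5],
    [7, 4, 3],
]
HCA_CELLS = {(2, 2)}

if __name__ == "__main__":
    path = trace_downhill(DEM, (0, 0))
    print(path)
    print("reaches HCA:", any(cell in HCA_CELLS for cell in path))
```

Even this toy version shows why a release point some distance from a high consequence area can still threaten it: the flow path, not straight-line proximity to the pipe, decides which cells are affected.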

GIS middleware

German startup Ms.GIS’ CORE Pipeline GIS was used on the Baku-Tbilisi-Ceyhan (BTC) pipeline. Ms.GIS is a kind of GIS middleware federating spatial data from disparate systems, using the OGIS standards. Ms.GIS interfaces with ESRI, MicroStation and Bentley—as well as with data in Microsoft Access or Excel etc. Ms.GIS claims to be ‘much faster’ than Oracle Spatial. Hitachi Software’s AnyGIS is also GIS middleware and it too leverages the OpenGIS ‘simple feature’ specification. AnyGIS V 2.5 was released this month and features an AutoCAD Map front end to data in other systems.

Small World – GE

GE’s SmallWorld for GIS-based asset management offers links to Oracle and SAP. A line sheet generation application has been developed in cooperation with West Coast Energy. Currently the data model is ISAT, but GE is working on PODS implementation which will be de-normalized for performance. SmallWorld also works with the OpenGIS specification. An OGIS compliant WebServices development is underway.

James W. Sewall Co.

The James W. Sewall Co. (JWS) has collaborated with ESRI on GIS software for maximum allowable operating pressure (MAOP) and HCA analysis using alignment sheets generated from the PODS database. JWS’ software includes ASGPipeline (an alignment sheet generator and pipeline data management module), Class Locator and the MAOP calculator. Sewall released a PODS geodatabase two months ago but claims ‘database independence’ for its software. A JWS spokesperson told Oil IT Journal that “PODS is a natural progression of database technology.”
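
For readers unfamiliar with the calculation, the sketch below shows how class location feeds into a pressure limit. It is not Sewall's implementation. It assumes the US steel pipe design formula P = (2St/D) × F × E × T with design factors by class location as set out in 49 CFR 192.105 and 192.111. The function name and the example pipe are hypothetical, and in practice MAOP is the lowest of several limits, of which design pressure is only one.

```python
# Design factor F by class location (assumed from 49 CFR 192.111).
DESIGN_FACTOR = {1: 0.72, 2: 0.60, 3: 0.50, 4: 0.40}


def design_pressure_psi(
    smys_psi: float,
    wall_in: float,
    od_in: float,
    class_location: int,
    joint_factor: float = 1.0,
    temp_factor: float = 1.0,
) -> float:
    """Design pressure P = (2 * S * t / D) * F * E * T for steel pipe, in psi."""
    if wall_in <= 0 or od_in <= 0:
        raise ValueError("wall thickness and diameter must be positive")
    f = DESIGN_FACTOR[class_location]
    return (2.0 * smys_psi * wall_in / od_in) * f * joint_factor * temp_factor


# Hypothetical pipe: 24 in. outside diameter, 0.375 in. wall, 52,000 psi yield strength.
rural = design_pressure_psi(52_000, 0.375, 24.0, class_location=1)
suburban = design_pressure_psi(52_000, 0.375, 24.0, class_location=3)
```

The same pipe is rated lower once development along the route moves it into a higher class location, which is why class location and MAOP analysis go hand in hand.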

ProActive Group

ProActive’s Rapid Emergency Response System (R-ERS) determines which agencies and individuals are affected by a potential disaster. Written with ESRI MapObjects, R-ERS automates the production of emergency response reports and synopses from an HSE database. ProActive clients include ExxonMobil, PanCanadian, Conoco Canada and Marathon.

Map Frame

Map Frame’s FieldSmart software solves the synchronization problem for field operators by letting them take all their data into the field. FieldSmart compresses a 40GB database down to 500MB. An intuitive interface on a ruggedized handheld device uses gesture-based input: a scrawled ‘C’ for center, ‘Z’ for zoom, and so on. The software makes data capture simple and fast for field engineers.

Visualization

Mark Hurd Corp.’s software provides impressive fly-through of a 3D terrain model with draped bitmap imagery. Leica Geosystems unit Erdas Imagine provides general 3D visualization software which has been used by BP for pipeline route planning in the HIVE visionarium.

This article is a shortened version of a 12 page illustrated report on the GITA GIS in Oil and Gas Conference available as part of The Data Room’s Technology Watch Service. For more info on this, email tw@oilit.com.

Databases are an essential tool for geoscience research and are being created and populated in many diverse industry sectors, universities and geological surveys. While such databases are valuable tools, they are not in general exploited to the full because the scientific language standards implemented across different databases are rarely compatible. The dictionaries, thesauri or lexicons used are normally only developed to satisfy local needs. Inter-organizational and international communication is rarely considered.

Intrusive

It would seem desirable to develop and support inter-organizational and international communication which should provide significant rewards and savings both for researchers and in the commercial world. However, the various proposals that have been developed within a range of sectors have had limited uptake. In general, organizations put most of their effort into designing and populating databases and building applications. Proposals from external bodies for standards are generally perceived as intrusive and are rarely implemented.

Cost savings

Nevertheless there are logically common dictionaries that are repeatedly reinvented and repopulated across many different database systems. For example many geoscience systems contain dictionaries describing rock type, lithostratigraphy, fossil names, mineral names, chemical elements etc. The process of constantly reinventing such dictionaries prevents the sharing and exchange of information. It also significantly increases the costs of system design when such key elements have to be recreated and repopulated.

Symposium

At the 32nd International Geological Congress (IGC) meeting (ITALIA 2003) that will be held in Florence, Italy, next August, a Topical Symposium is being organized to discuss these issues. The objective is to bring together project managers, database managers and strategic planners from industry, universities, and geological survey organizations to share information on the status of dictionaries, standards and technologies used for geoscience data management and delivery. The symposium also sets out to initiate collaborative projects to review existing systems, identify best practices and promote the use of preferred, publicly available dictionaries.

Named after a 14,000 ft. peak in the Rockies, ‘Antero’ is the latest release of Veritas’ RC(2) reservoir modeling package. The new release adds faulted frameworks, streamline modeling and platform independence to RC(2).

Bashore

Bill Bashore, VP software and technology development with Veritas believes that, “Homogeneous reservoirs are on the decline. Future hydrocarbon reserves will come from increasingly complex geologies. To better understand these challenging reservoirs, asset teams need software tools that are both sophisticated and user-friendly.”

Radical

Antero has involved a radical code redesign, making it more intuitive, reliable and easy to use—while substantially improving the performance of its modeling algorithms. In one test, where the previous release took one minute to simulate a million-cell model, the new code took four seconds. Another innovation claimed is the ability to quickly construct complex faulted frameworks within the reservoir modeling application. Bashore added, “Handling faults correctly — not breaking up the edges into stair step grid blocks or warping cell geometries—has been a technical challenge for all vendors. But we have finally solved the problem.”

Wilson

John Wilson, president of Veritas Exploration Services added, “RC(2) spent a decade building a global reputation for excellence in stochastic reservoir modeling. The Antero release offers mainstream users more vigorous solutions, sharper subsurface images, surprising ease-of-use and platform independence. With this software customers will dramatically improve their knowledge of complex, faulted reservoirs, and maximize production while minimizing costs.”

ChevronTexaco and Schlumberger have signed a multi-year technology development agreement to build a ‘next-generation’ reservoir management solution. The focus is on leveraging real-time oilfield sensor data from wells and facilities to improve oilfield management.

Puckett

ChevronTexaco E&P Co. president Mark Puckett said, “Our objective is to bring recent advances in information technology and production system modeling together in a valuable solution. This project will enable access to field information from real-time sensors enabling collaboration between field and office personnel for faster and better decisions on reservoir performance.”

Petrobras has chosen Landmark’s suite of drilling data management and drilling engineering software products and support services for use by its major drilling departments. Petrobras will be using the tools for planning, executing and analyzing its well construction processes.

Lane

Landmark president and CEO Andy Lane said, “The software will support Petrobras’ goal of improving efficiency and performance. We look forward to expanding our already well-established working relationship with Petrobras in the area of advanced drilling technologies and services.” Some 140 Petrobras engineers have received training in Landmark tools including Compass, WellPlan and the R2003 release of DIMS(TM). Petrobras has also contracted with Landmark for technical support and consulting services.

Santos-Rocha

Luiz Alberto Santos Rocha, Corporate Technology Group, Petrobras said, “Petrobras’ decision to shift to Landmark’s drilling software goes hand in hand with our corporate goal of achieving excellence in drilling. Landmark will help Petrobras maintain its position as one of the top operators in deep and ultra deep waters. Through this strategic partnership, we hope to significantly reduce well construction costs and improve well productivity.”

Measurement while drilling is currently hampered by the technology used to transmit information from the drill bit to the surface. The traditional technique of pressure pulsing the mud column is limited to a few bits per second, or maybe a few seconds per bit! All that may change if new technology sponsored by the US Department of Energy and co-developed by Novatek and Grant Prideco wins commercial acceptance. Using a special pipe string with an embedded electrical connection, IntelliPipe offers around a megabit of bandwidth while drilling. The signal passes from one string to another via an induction loop embedded in the joint.
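
A back-of-the-envelope calculation shows the scale of the difference. The sketch below takes ‘a few bits per second’ as 4 bit/s and ‘around a megabit’ as 1 Mbit/s. Both figures, along with the 8 KB payload and the function name, are illustrative assumptions rather than numbers from the developers.

```python
def transmit_seconds(payload_bits: float, rate_bits_per_s: float) -> float:
    """Time to send a payload at a given data rate, ignoring protocol overhead."""
    if rate_bits_per_s <= 0:
        raise ValueError("data rate must be positive")
    return payload_bits / rate_bits_per_s


MUD_PULSE_BPS = 4.0            # assumed value for 'a few bits per second'
WIRED_PIPE_BPS = 1_000_000.0   # assumed value for 'around a megabit'

payload_bits = 8 * 1024 * 8    # hypothetical 8 KB block of downhole data

mud_pulse_s = transmit_seconds(payload_bits, MUD_PULSE_BPS)
wired_pipe_s = transmit_seconds(payload_bits, WIRED_PIPE_BPS)

print(f"mud pulse:  {mud_pulse_s / 3600:.1f} hours")
print(f"wired pipe: {wired_pipe_s * 1000:.1f} milliseconds")
```

Under these assumptions a block of data that would occupy the mud column for several hours arrives at the surface in a fraction of a second.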

Smith

At the SPE ACTE in San Antonio earlier this month, Mike Smith, Assistant Secretary for Fossil Energy proudly presented the new technology to the world. Smith recalled an earlier DOE funded development 25 years ago, when one of the agency’s partnerships with industry had produced a major breakthrough in drilling technology—mud pulse telemetry! A development qualified by Smith as “one of the crowning achievements of the joint government—industry petroleum research program.”

Major advance

Smith believes that IntelliPipe will be the next major advancement in downhole telemetry, allowing for data rates that support real time decisions to be made at the surface and executed at the drill bit. The ‘dumb’ drill pipe is now the backbone of a downhole digital network. Oil IT Journal quizzed some industry folks at the SPE. There is genuine interest in the new technology, but concern over price. Like a Rolls-Royce—if you need to ask how much IntelliPipe costs, you probably can’t afford it!

As revealed in last month’s Oil IT Journal, MJ Harden Associates has rolled out the first ArcGIS Pipeline Data Model. The joint development with ESRI was hailed as ‘a first step toward broad industry consensus on a common conceptual data model for the pipeline industry.’ The new data model leverages the Integrated Spatial Analysis Techniques (ISAT) pipeline data model – originally developed by the Gas Research Institute and now under MJ Harden’s custody.

Kicked-up dust!

But the new data model has kicked up some dust in the pipeline community. Readers of Oil IT Journal will be aware that there is more than one ‘industry standard’ data model. Indeed, at the GITA Houston conference, Montana-Dakota Utilities (MDU) announced the joint development of guess what—an ArcGIS pipeline data model based on the Pipeline Open Data Standard (PODS) data model. Oil IT Journal quizzed the PODS folks at GITA and learned of their surprise at the ESRI announcement. PODS are concerned that any ‘standard’ GIS-enabled model should have more input from the community at large and should be vendor independent. PODS claims to be “seeking consensus and vendor neutrality.” ESRI’s Andrew Zolnai issued a statement addressing the “confusion around the Pipeline Geodatabase schema.” The ISAT release was “done in an orderly manner, if more rapidly than expected, and with absence of malice.”

EU can play too!

Don’t worry though, there’s no reason why the industry should stop at two ‘standard’ pipeline data models. There’s already a third under development—funded by the good old European taxpayer! Check out the latest ‘Industry Standard’ Pipeline Data Management on ispdm.org!

Quorum Business Solutions Inc. has released a new version of its Quorum Land application, described as a ‘cost-effective and flexible’ solution for managing land information. Quorum Land, deployed by a number of leading E&P companies, integrates lease, contract, fee property and easement land data within a single application.

GIS

The new version incorporates a geographical information system (GIS) front-end and allows browser-based access to the data. The system offers reporting with technology from Business Objects and links to financial applications, including SAP and ORACLE, to streamline business processes and reduce manual activities such as filing, copying, faxing and mailing.

Moore

David Moore, Senior Landman with Occidental said, “The Quorum Land solution has significantly reduced the time required by our company to manage data input, research documents, share information and generate internal and external reports.”

Weidman

Quorum president Paul Weidman said, “Quorum Land was created with direct user participation and those users continue to actively work with us to ensure that the product remains a compelling solution for professional land managers.”

Standards

Quorum recently joined the National Association of Lease and Title Analysts (NALTA) and is taking part in NALTA’s land data transfer standardization efforts. More from qbsol.com.

Calgary-based Datalog reports good initial take-up of WellHub, the centralized, internet-based well data hub it introduced earlier this year.

Hammons

Lane Hammons, operations geologist with Spinnaker Exploration, said, “WellHub has simplified the distribution of wellsite data, streamlined operations, and saved time and money for Spinnaker.”

WITS

The WITS-enabled WellHub integrates drilling data with a communications link to the wellsite including confidential instant messaging and e-mail. A user-friendly interface provides for the automatic distribution of files to joint venture partners.

Real-time

Datalog’s WellWizard supports real-time viewing of drilling operations giving offsite personnel secure access to the same data as seen at the wellsite.

LWD

WellWizard integrates data from different sources such as logging while drilling (LWD), geological data and drilling data into a complete historical and real-time view of the well.

Scandpower is embarking on a new project—code named OPUS—to integrate Statoil’s PeTra multi-phase modeling tool with Scandpower’s flagship OLGA 2000 simulator.

Snøhvit

Multiphase technology and flow assurance are key to many offshore developments where satellite fields are tied-in to existing infrastructure. Hydrocarbons from subsea wellheads are piped to floating production facilities via extensive flowline and riser systems. In Statoil’s Snøhvit development, multiphase streams from remote gas and condensate fields will be transferred 200 km to onshore processing plants for subsequent processing and export.

Joint industry project

Statoil, Norsk Hydro and Shell cooperated on the PEtroleum TRAnsport (PeTra) project, a new-generation multiphase simulator. The project has reached maturity and Scandpower has been chosen by Statoil to undertake the commercialization of the new simulator worldwide.

C++

The new simulator will integrate improved physical understanding of multiphase flow, advanced modeling techniques based on C++, a new graphical user interface, updated process models and thorough verification against both experimental and field data. OLGA is licensed to most of the major oil companies and is used for conceptual studies, detailed design, and operational support for ‘the majority of all new oil and gas field developments’.

Schlumberger

Schlumberger chairman and CEO Euan Baird is to retire next February. He will be succeeded by Andrew Gould, who is currently president and COO. Gould joined Schlumberger from Ernst & Young in 1975.

EDS

T-Surf—the developer of the GoCad modeling software—is changing its name to Earth Decision Sciences. The name change reflects the expanded scope of the company’s application portfolio from subsurface modeling into decision support.

Scitech

Energy Scitech Ltd. has appointed Neil Dunlop to its main board as sales and marketing director. Dunlop was previously with Landmark Graphics.

Microsoft

Microsoft has hired Marisé Mikulis as Energy industry manager and senior strategist for enterprise solutions in the energy industry. Mikulis was previously with the now defunct Upstreaminfo.com.

CGG

CGG has donated its Geocluster seismic processing software to Scotland’s Heriot Watt University. Similar gifts have been made recently to US, French and Norwegian institutions. Geocluster comprises some 300 processing modules for depth imaging, converted waves, time-lapse seismic, stratigraphic inversion, fractures and reservoir characterization.

Baker Hughes unit Baker Atlas has signed a letter of intent with CGG to purchase its borehole seismic data acquisition business and to form a venture with CGG for the processing and interpretation of borehole seismic data. Independent borehole seismic data acquisition services have proved a hard sell in the past because of the stranglehold the wireline company has over wellsite logistics, instrument conveyance and general tendering.

Special studies

Although the heyday of VSP is behind us, downhole seismic is seeing renewed interest in special studies such as anisotropy analysis, crosswell tomography and AVO calibration.

Multi-level

CGG’s portfolio of downhole seismic instrumentation includes the SST 500 high frequency multi level tool and the MSR-600 slim-hole receivers. BA’s borehole seismic toolkit includes the Multi Level Receiver (MLR). Baker Atlas will roll CGG’s borehole seismic acquisition capability into its existing product portfolio.

Processing

CGG and Baker Atlas expect the new joint venture in borehole seismic data processing and interpretation to offer an improvement over what is currently available. The intent is to provide ‘boutique-style’ processing and to develop new end-user focused applications. The new venture’s primary processing centers will be located within CGG’s existing processing facilities in London and in Houston and will be supported by Baker Atlas’ worldwide network of local geoscience centers.

Statoil has acquired three new Reality Centers from SGI. The high end visualization units will be used in Statoil’s regional offices. The company had previously acquired two units.

Real-time drilling

One innovative use for the visualization tools will be to provide real-time three-dimensional imagery of offshore drilling. On-shore personnel in the Reality Center will be able to monitor and control operations.

Onyx 3400

The installations will be powered by SGI Onyx 3400 graphical supercomputers, each with 16 CPUs, 32GB of memory, two InfiniteReality3 graphics subsystems and a two-channel flat display. SGI reports that 120 out of its 575 SGI Reality Center facilities around the world are installed at oil and gas companies.

SAP’s annual European conference SAPPHIRE took place in Lisbon last month and included several oil and gas case histories. According to SAP, its software is deployed in some 70% of all integrated oil and gas companies worldwide and there are over three hundred SAP employees dedicated to the oil and gas business solution portfolio.

myEni

Marco Casati presented Eni’s SAP-powered enterprise portal. MyEni, a joint Eni/SAP development, is designed to give simple and secure access to and management of information, services and applications. Eni’s existing intranet solutions have been rationalized into the new workspace which offers business applications and interaction between employee and company. Other functions include a customizable area ‘MyPages,’ a knowledge sharing function and news feeds. There are currently around 5,000 users of myEni – next year the system will be extended to 50,000 users worldwide.

Shell

Frans Liefhebber described Shell’s work on Transactional eProcurement. Shell’s eProcurement connects buyers’ and sellers’ ERP systems for purchase order creation, change, approval, receipt confirmation and invoice matching.

Trade Ranger

The industry-supported e-business hub Trade-Ranger is at the heart of a variety of Shell’s eProcurement test configurations. 1100 users are registered with Trade-Ranger, with some 460 actively trading. A single data warehouse has been established with $23bn of aggregate historical spend. Nevertheless Trade-Ranger faces challenges such as an immature marketplace, competition from other public exchanges such as OFS Portal, catalogue content issues and different stakeholder business cases.

Surgutneftegaz

Rinat Gimranov described JSC Surgutneftegaz’s SAP-based lifecycle technical and financial asset management of pipelines, buildings and oilfield equipment. The Russian super major, with 85,000 employees, needed close integration of legal, contractual, technical, and financial asset accounting. The solution integrates an ESRI ArcView GIS map interface with asset information in SAP. Assets managed include roads, buildings and equipment specifications, financials and scanned documents such as leasehold. The GIS records the history of construction and allows access to technical and financial data throughout construction projects, which are managed in SAP Project Systems.

Marathon

Speaking at the US SAPPHIRE in Florida, earlier this year, Marathon Oil’s Gary Newbury outlined its major new production revenue accounting (PRA) system. Marathon’s production revenue accounting system links tools from Tobin, Landmark and SAP together to integrate production data with its financial systems for its US domestic properties. These comprise 8,500 operated well completions, 2,100 delivery networks, 30,000 royalty owners and 20,000 joint venture partners.

Renaissance

SAP’s new production revenue accounting package is interfaced with land and contract management software (Tobin’s Domain) and Landmark’s Oilfield Workstation (TOW). Newbury believes that such a game-changing implementation requires a systems integrator with a track record (PricewaterhouseCoopers was chosen) and great communication of the project’s scope and impact to management and employees. Marathon’s Renaissance project went live in January 2002 – in a big bang switch over. This was made possible by lots of testing of software, many dry-run conversions and substantial education of all concerned.

Startup Luna iMonitoring has developed a range of hardware sensors for oilfield operations to lower the cost of remote monitoring and control. The new products leverage lightweight communication paradigms such as WiFi spread-spectrum radio and economical low bandwidth satellite links. IHS Energy Group is collaborating with Luna to incorporate the new sensors into its web-based data collection service Field-Direct. A 50% cost reduction relative to traditional SCADA automation methods is claimed.

McCrory

IHS Energy Group president Mike McCrory observes that, “Only 20 percent of active wells throughout the world are currently monitored or automated. Important well information is not being captured and consequently, operators may not be optimizing well performance. Today, engineers have to manage more fields and more wells, so that providing a cost-effective and efficient means to manage these wells is significant. The advent of inexpensive microprocessors and sensors will certainly pay dividends in the oil field. Our customers will know instantly when a well is experiencing problems, and will be able to tune their pumping systems to optimize performance and minimize downtime, which will enhance the bottom line much more quickly.”

Ferris

Luna CEO Ken Ferris said, “IHS’ web-based, field data collection service is the most innovative in the industry and we are excited to be helping take that service and the oil and gas industry to the next wave of automation innovation.” More on Luna in next month’s Oil IT Journal report from the 2002 SPE ACTE.