From text to GIS and back again (courtesy MetaCarta)

Mexican state oil company Pemex has awarded Schlumberger a $60-million, two-year contract for the provision of information management (IM) solutions to all of its upstream business units. The deal includes lifecycle information management services from Schlumberger’s Information Solutions unit and change management expertise from Sema.

Figueroa

Pemex’s Jose Luis Figueroa commented, “This contract will give Pemex’s upstream professionals access to petrotechnical, financial, production and operations data, allowing for better decisions and rapid analysis of new opportunities.” The project involves the capturing, digitizing, scanning and indexing of E&P information and associated physical assets, such as cores, fluid samples and thin sections. These will be consolidated into a ‘virtual repository’ deploying a ‘common data model’ that follows ‘well-defined standards using international best practices and a common set of procedures countrywide’.

Secure

Schlumberger will leverage its InfoStream family of customized information lifecycle solutions. Data types supported include post stack seismic, well log, wellbore, production, and interpreted subsurface information. SchlumbergerSema will provide consulting services—particularly centered on its ‘five-step’ business transformation methodology. Schlumberger local manager Jose Magela Bernardes added “The combination of consulting and systems integration expertise will help Pemex maximize the value of its data assets. The success of this type of project is highly dependent on the team’s ability to address all change management aspects from technical to people issues to enhance efficiency and enable more accurate decisions in a timely manner, while maintaining the integrity and value of data.”

UK-based GTS-Geotech has signed an agreement with Schlumberger Information Solutions (SIS) to deliver information management solutions to the upstream in the U.K. and Ireland. Privately owned GTS-Geotech supplies application support, data loading and conversion services. Darren Biggs, head of data management with GTS said, “The new relationship will enable the provision of high quality, cost effective solutions with an extended range of services and a single point of contact. Both existing and future customers will benefit.”

IM Solutions

Schlumberger Information Solutions will provide its information management solutions and delivery services and will team with Geotech to offer integrated technical data management services. These include data loading, transcription and project conversion. Under the terms of the agreement, the two organizations will continue to deliver services independently or combine their resources when it would best meet customers’ needs.

A frequent plea one hears at tradeshows and in the press is for more spending on research and development (R&D). R&D, like motherhood and apple pie, is assumed to be an unequivocal good—the more you spend, the better your industry and economy will perform. Such entreaties are usually met with enthusiastic acquiescence, but are rarely followed by any action. This is probably because it is easier to ‘entreat’ than to find the funds. Even if you have found some money, how do you decide where to allocate it?

Politicos

The truth is that R&D projects may or may not be money well spent. For publicly-funded R&D, adjudicating projects may be outside of the political paymaster’s competency. There is also a natural tendency for the researcher to, well, research! Have you ever seen a research report that didn’t conclude that more work—and therefore more funding—was necessary?

Transparency

Making any sensible analysis—let alone recommendations—of research funding is fraught with difficulty. Different countries have very different ways of organizing and funding research. R&D performed in the commercial world may or may not be included in the statistics. What is classified in company reports as ‘R&D’ may or may not really be research. Reporting may be driven by considerations of taxation rather than transparency!

Halcyon days

I don’t know whether these pontifications come from observation of a changing world or whether they are the fruit of a lifetime of taxpaying. My own recollection of university days is of an elite few who ‘stayed on’ to do research as a kind of staging post before moving on to industry. This view of research was confirmed in Steve Lohr’s book GO TO (see our review on page 9 of this issue). Lohr describes MIT’s Artificial Intelligence lab as being a place where a hands-off approach (little research on AI was actually done!) led to ‘academic freedom, technological optimism and an anti-establishment culture’.

Consortia of one?

The best R&D—the most spectacularly successful—probably derives from a ‘consortium’ of one. One thinks of the almost mythical successes of IT pioneers such as Vint Cerf, who dreamed up TCP/IP in his garage, and Tim Berners-Lee, who single-handedly invented HTML and the World-Wide Web.

Cresson

Such freewheeling R&D contrasts with my own limited experience of EU-funded research. Erstwhile EU R&D supremo Edith Cresson’s alleged funding of ‘research’ performed by her dentist could be considered a worst case of R&D ROI! But Cresson’s activity and the resignation of the Commission in 1999 may have served to mask a different issue—the quality of EU R&D.

Social engineering

EU-funded research has a hidden agenda of social engineering attached to the qualification process. More important than a good idea is having the right folks on board. Various more or less obscure criteria are applied to R&D grant applications. The most obvious condition is that grants are in general made to cross-country groupings rather than to single organizations. Various other ‘engineered’ criteria steer funds toward projects that are, or are not, near commercialization, that can show commercial potential and so on.

Hodgepodge

The end result of the complex regulations can be to create, not focused projects with clear objectives, but a hodgepodge of specification-driven verbiage. The lack of objectives can mean that the universities, research organizations and other consortium members go about their business more or less as usual, untroubled by any real obligation to cooperate. The funding cake can be carved up and shared out. The end result can be a herd of cats!

Consortia

Industry-driven consortia may or may not fall into this trap. The follow-up of direct investment in research is in inverse proportion to its proximity to the point of application. But the ‘consortia’ preferred by industry are generally groups of oil companies funding a single vendor development. I suspect that this is a more successful model than the multiple R&D organization consortia preferred by the EU.

Girassol

A good example of focused R&D is Total’s offshore Angolan Girassol field. This project is of a complexity and scope that is reminiscent of the Apollo mission and will be receiving the OTC’s Distinguished Achievement award next month. Production from Girassol, like other cutting-edge engineering projects, required a kind of ‘just in time’ R&D. Work on the riser was commissioned, researched and developed to a very tight schedule. No time for hanging around waiting for inspiration as it were.

Seismic

I suspect that much of the R&D that has disappeared from the radar screen is actually alive and well, but has been moved so near to production that we don’t recognize it for what it is. The amazing evolution of seismic acquisition and processing technology over the last couple of decades is paradoxical proof that R&D is still very much alive, even though the seismic sector itself has been in serious difficulty for a decade.

Oil ITJ - What are Schlumberger Information Solutions’ top-level objectives today?

Toma - To deliver on our vision of the smart field—the next paradigm shift for upstream productivity. Euan Baird’s 1996 vision was of delivering a 15 percentage point improvement in recovery. A global one percent increase in recovery equates to a year of production.

Oil ITJ - So how is the Smart Field (SF) going to achieve this?

Toma - SF links the field and the office more closely and facilitates concurrent analysis of field data and financial information—making the right decisions in a timely manner. In drilling, this might mean real-time collaboration with external stakeholders or with co-workers in the Visionarium. All this requires secure global, managed access in and out of corporate firewalls.

Oil ITJ - Where does Petrel fit in?

Toma - The key software component of the SF is the Living Model (LM). The LM holds the current best estimates of reservoir characteristics. The LM allows for real-time well path planning—no more ‘blind drilling’! The LM includes seamless access to enterprise knowledge, data and financial information—coupled to the Living Business Plan. Where does Petrel fit in? The LM is the heart of the SF and Petrel is the heart of the LM!

Oil ITJ - Schlumberger now has at least three ‘solutions’ for seismic interpretation alone - can we expect rationalization?

Toma - We can’t ask our clients to throw away everything they have got! We have attempted to encourage rationalization of our two seismic interpretation suites, IESX and Charisma - but the camps are entrenched!

Oil ITJ - But how do you differentiate the UNIX tools from Petrel?

Toma - GeoFrame focuses on the high-end seismic interpretation market, producing a high-uncertainty, coarse model suitable for refinement and further interpretation within Petrel.

Oil ITJ - Last year the Petrel folks were going head-on with Unix interpretation systems—how can you maintain this differentiation?

Toma - GeoFrame and Petrel are complementary. GeoFrame provides multi-user UNIX/Linux tools for high data volume interpretation. During and following reservoir delineation, the PC-based Petrel is more appropriate. Today’s 32-bit Windows-based PC is still limited to 2GB of memory.

Oil ITJ - If there is no product retirement, how do you separate Unix workflows from Petrel workflows?

Toma - Our software offering comprises three ‘pillars’. First is the GeoFrame-based enterprise solution. Next, Petrel’s ‘asset performance’ solution focuses on integration and working in depth. Finally, the simulation ‘pillar’ centers on ECLIPSE. We intend to share intellectual property across the three pillars.

Oil ITJ - What about geological modeling?

Toma - Mapping and geological tools will be available in both GeoFrame and Petrel. But Petrel is now our definitive 3D modeling tool. We made the acquisition to fill a hole in our product line and to gain market share. Petrel is N° 1 in 3D modeling and is showing incredible growth.

Oil ITJ - How does all this integrate?

Toma - OpenSpirit is the key to seamless software integration in SIS. Petrel was already deploying OpenSpirit to link with GeoFrame successfully. In fact OpenSpirit Corp. is doing well, standing on its own feet, and it posted a positive net result on sales growth in the first two months of 2003. No vendor today will deny the success of OpenSpirit.

Oil ITJ - What about enterprise IT?

Toma - Schlumberger Information Solutions wants to be the enterprise integrator of choice. This is a tremendous growth market for us. IM solutions are 20% software and 80% services. Leveraging third-party tools is also a strength for us—particularly through SchlumbergerSema.

Paradigm’s latest release of Probe is designed to ‘reduce the guesswork’ in AVO analysis. A new Rock Physics module provides deterministic relationships for converting seismic data to well log properties. Tri-attribute cross-plotting further broadens Probe’s data analysis and interpretation capabilities and enables the explorationist to analyze relationships between attributes. Results can be visualized in VoxelGeo.

Vanguard

Paradigm’s Vanguard reservoir property generator has also seen enhancements to its ‘state-of-the-art’ inversion technology. Vanguard 3.3 expands inversion functionality with a new, multi-attribute, Neural Network inversion procedure for the prediction of well log data of any type at all seismic trace locations. A new Rock Physics module has been developed to expand Vanguard’s reservoir characterization capability. Paradigm is opening a new Visionarium in Houston next month. The installation sports an 18 ft. screen by Mechdyne and edge-blended Christie Mirage 4000 projectors.

Veritas unit Hampson Russell Software (HRS) has announced a new major software release of its seismic attribute and inversion suite. The software, the sixth ‘Complete Edition’ (CE6/R1), bundles HRS’ existing products to ‘increase technical value and enhance usability’. New features include anisotropic modeling, full three-term analysis within the AVO package and colored seismic inversion in the STRATA module.
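The release notes do not say which three-term formulation HRS uses. One common three-term form is Shuey’s approximation, R(θ) ≈ A + B sin²θ + C (tan²θ − sin²θ), where A is the intercept, B the gradient and C the curvature term. The Python sketch below is an illustration under that assumption, not HRS’s implementation: it fits A, B and C to reflectivities picked at several incidence angles by ordinary least squares. The function names and the synthetic values are hypothetical.

```python
import math

def shuey_terms(theta_deg):
    """Shuey basis terms (1, sin^2, tan^2 - sin^2) for an incidence angle in degrees."""
    t = math.radians(theta_deg)
    s2 = math.sin(t) ** 2
    return [1.0, s2, math.tan(t) ** 2 - s2]

def solve3(m, v):
    """Solve the 3x3 system m x = v by Gaussian elimination with partial pivoting."""
    a = [row[:] + [v[i]] for i, row in enumerate(m)]  # augmented matrix
    n = 3
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[piv] = a[piv], a[col]
        for r in range(col + 1, n):
            f = a[r][col] / a[col][col]
            for c in range(col, n + 1):
                a[r][c] -= f * a[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        x[r] = (a[r][n] - sum(a[r][c] * x[c] for c in range(r + 1, n))) / a[r][r]
    return x

def fit_three_term_avo(angles_deg, reflectivity):
    """Least-squares estimate of (A, B, C) via the normal equations G^T G x = G^T r."""
    rows = [shuey_terms(a) for a in angles_deg]
    gtg = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    gtr = [sum(r[i] * y for r, y in zip(rows, reflectivity)) for i in range(3)]
    return solve3(gtg, gtr)

if __name__ == "__main__":
    # Synthetic check: build reflectivities from known A, B, C and recover them.
    angles = list(range(0, 45, 5))
    true_abc = (0.05, -0.2, 0.1)
    refl = [sum(c * t for c, t in zip(true_abc, shuey_terms(a))) for a in angles]
    print([round(x, 4) for x in fit_three_term_avo(angles, refl)])
```

The curvature term only becomes significant at larger angles (tan²θ − sin²θ is tiny near normal incidence), which is why a third term needs far-offset data to be well constrained.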

Coffin

HRS EAME general manager John Coffin said, “This release adds new features and draws on the latest research to keep the Hampson Russell suite at the forefront of seismic reservoir characterization.” CE6/R1 now provides direct access to geophysical data with support for bricked and compressed Landmark formats. A direct link to GeoFrame well data is now provided and new OpenSpirit links provide direct connectivity to seismic data loaded in Charisma, IESX and Landmark from both the UNIX and PC platforms.

Midland Valley Exploration (MVE) is anticipating the arrival of low-cost 64-bit computing with the announcement of a new product line, ‘NMove’. New microprocessors from both Intel and AMD will bring 64-bit computing to the masses. 64-bit computing removes one of the last barriers to desktop computing: the limitation of addressable memory. Today’s Intel-based architectures are limited to 4 GB of memory. Tomorrow, with Intel’s Itanium chip or AMD’s Opteron, 64-bit computing will allow for a theoretical maximum address space of 18 billion gigabytes!
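The memory figures follow from powers of two: a 32-bit pointer can address 2^32 bytes (4 GiB) and a 64-bit pointer 2^64 bytes, about 18.4 billion gigabytes if a gigabyte is taken as 10^9 bytes. The short Python check below reproduces that arithmetic. The 2 GB limit Toma cites for 32-bit Windows corresponds to the default split of the 4 GB virtual address space into equal user and kernel halves; the code assumes that split rather than measuring it.

```python
def address_space_bytes(pointer_bits: int) -> int:
    """Number of distinct byte addresses reachable by a flat pointer of this width."""
    return 2 ** pointer_bits

GIB = 2 ** 30   # binary gigabyte (gibibyte)
GB = 10 ** 9    # decimal gigabyte

b32 = address_space_bytes(32)
b64 = address_space_bytes(64)

# Assumes the default 32-bit Windows layout: half the address space for user code.
print(f"32-bit: {b32 // GIB} GiB total, {b32 // 2 // GIB} GiB user space with a 2/2 split")
print(f"64-bit: {b64 / GB / 1e9:.1f} billion GB (decimal)")
print(f"64-bit: {b64 // GIB / 1e9:.1f} billion GiB (binary)")
```

The decimal and binary results differ by about 7%, which is why quoted figures for 64-bit address space vary between roughly 17 and 18 billion gigabytes.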

NMove

The Linux operating system has already been ported to 64 bits by Red Hat and SuSE, and a 64-bit edition of Windows XP is due ‘real soon now.’ MVE has announced a new 64-bit version of its structural modeling package, ‘NMove’.

Smallshire

MVE developer Rob Smallshire said, “The NMove application will be ready for 64-bit Windows XP and 64-bit Linux on both AMD and Intel platforms from day one. The race is on to deliver 64-bit applications, which will bring down the costs of using large data sets and will impact risk by offering better data and better modeling provided by full 3D applications.”

Norwegian service company Petrodata is to provide hosted application software to the Norwegian Oil Industry Association OLF. OLF manages data trading on the Norwegian continental shelf and for DISKOS members with the PetroBank Master Data Store trade client.

Gustavson

Following a successful trial of a hosted version of the Trade module, OLF Trade operator Anne-Synnøve Gustavson said, “We're very satisfied with the service Petrodata has given us through the pilot. The ASP solution works perfectly for us.” Petrodata delivers the Trade client through the Petrodata Workspace. In a separate deal, Norwegian oil startup OER has awarded Petrodata a contract to manage its documents including incoming mail (physical and electronic), registration of index-data, scanning and distribution, and physical archiving services.

Elie Barr has been named president and COO of Paradigm Geophysical. Eldad Weiss remains as CEO.

Valerie Scadeng has been appointed country manager of TGS group’s newly opened UK office.

Houston-based Seitel Inc. has been removed from the New York Stock Exchange’s ‘Big Board’.

JW Sewall has released ‘QTools’, a software toolkit for geospatial data migration QA/QC.

Petroleum Place Energy Advisors has appointed Chris Simon as VP, Transaction Services. Simon was previously with Grey Horse Energy Partners.

Houston-based E&P independent Anglo-Suisse will be using Magic Earth’s GeoProbe to evaluate its GOM acreage.

Gaz de France will be using ESRI’s ArcIMS in an upgraded version of its GIS-based CRM system, GEOSIC.

C&C Reservoirs has released a new version of its geological analogs system, Digital Analogs 3.0.

eLynx Technologies is to supply its ‘report-by-exception’ internet-based compressor monitoring system to Optigas.

Immersive VR vendor Fakespace Systems is working with Xi Graphics on a new 3D driver for Linux.

GeoFields has released Facility Explorer 4.0, a decision-support database for pipeline-related information.

Michael Baker Corp. unit Baker Energy has signed a ‘multi-million’ dollar contract with BP America, Inc. for the provision of operations and maintenance services for its Mad Dog and Holstein oil and gas production facilities in the deepwater Gulf of Mexico. Under the agreement, Baker will develop operating procedures and implement a computerized maintenance management system for these two SPAR-design floating production facilities. Baker provides planning, assessment and optimization of existing and future operations activities.

Operations Dashboard

Baker Energy’s Operational Dashboard is a web-based asset management and tracking application for optimizing offshore oil and gas operations. The Operational Dashboard is used by BP and others to interface with Baker’s Accountability and Incident Management (AIMS) database. The Dashboard integrates disparate operations data sources in the fields of safety, compliance, maintenance management, people on board, emergency management etc. BakerItrac is a vessel and helicopter logistics application that provides web-based route planning and analysis tools for the hundreds of platforms that Baker operates in the Gulf. Both ITrack and the Dashboard are used in Baker Energy’s own field operations.

Asset management specialist MRO Software has joined the Organization for the Advancement of Structured Information Standards (OASIS), a not-for-profit e-business standards organization. According to MRO, the move underscores its commitment to the development and use of standards-based solutions for asset portfolio management.

Web services

OASIS produces standards for security, Web services, XML conformance, business transactions, electronic publishing, topic maps and interoperability within and between marketplaces. OASIS has more than 600 corporate and individual members in 100 countries. OASIS and the United Nations jointly sponsor ebXML, a global framework for e-business data exchange. Current OASIS initiatives include UDDI, CGM Open, LegalXML and PKI. Leading OASIS backers are IBM, PeopleSoft and Microsoft.

Battle

MRO Software VP Jim Battle said, “We recognize the importance of delivering standards-based solutions that reduce the total cost of application ownership while providing interoperability in our customers’ application environments, without sacrificing competitiveness and business agility.” OASIS president Patrick Gannon added, “MRO Software has demonstrated its commitment to open standards for heterogeneous Web services environments by its active involvement as a member of OASIS.” MRO’s flagship product, Maximo, is used by BP, ExxonMobil, ChevronTexaco and others to manage oil country physical assets.

Maurer Technology International (MTI) has just released its new ADAP bundle of software tools for drilling engineers. ADAP combines 16 of MTI’s programs into an ‘affordable and user-friendly’ package for Windows-based PCs. The programs were originally developed as part of various joint industry projects initiated by the Drilling Engineering Association (DEA). The DEA projects addressed R&D in the fields of horizontal drilling, casing wear, underbalanced drilling and coiled tubing technology.

Galaxy database

All sixteen programs share data with MTI’s Galaxy drilling engineering database, eliminating the need to re-key data for use in other programs. A data-transfer module has also been developed to connect ADAP modules to drilling databases such as Landmark Graphics’ DIMS. More information from www.maurertechnology.com.

ESRI founder, president and Geographical Information Systems (GIS) visionary Jack Dangermond gave the keynote address to the 2003 ESRI Petroleum User Group. According to Dangermond, GIS use is growing, driven by a ‘science-based approach to decision making and problem solving in industry’. Influences as diverse as a rising oil price, infrastructure vulnerability, pipeline regulations and IT standards are forcing widespread adoption of GIS. In the oil industry, Dangermond cited Statoil (ESRI user for 10 years), Petrobras (Brazilian E&P National Data Store), BP (Baku-Tbilisi-Ceyhan pipeline), Shell’s use of 3D Analyst for subsurface and reservoir visualization, Tobin’s coupling of disparate GIS and tabular data, Landmark’s auto-generated contours and others.

Arc 9.x

Looking forward to ESRI’s 9.x release, Dangermond revealed that this would focus on Web Services, distributed GIS, process modeling, cartographic databasing, time-variant GIS and 3D visualization. With ArcObjects, all tools will be available at ‘object level’. Dangermond anticipates a standards-based environment allowing for loosely-coupled integration with enterprise GIS. As an example, a flight line may come from one data server, and weather from a different server, perhaps with different refresh rates. While the current Arc 8.x release focuses on ‘making maps’, Arc 9.x will let developers ‘build applications using services’. ESRI will offer support for web services in three ways: Java (IBM/Sun), .NET (Microsoft) and ‘generic’, open source environments. Likewise, ESRI’s Java support comes in three flavors. MapObjects for Java is written in ‘pure’ cross-platform Java and is compatible with BEA WebLogic, IBM WebSphere and Open Source JBoss.

Innovations

Another innovation in Arc 9.x is support for ‘long transactions’, i.e. the ability to disconnect part of the GIS dataset, take it into the field for editing, and repatriate the changes in a consistent and conflict-free manner. Very large raster datasets can now be stored in the Geodatabase, and ESRI has been working with BAE and ERDAS on feature extraction. The advent of massive amounts of LIDAR data has led to extensions for terrain mapping with Triangulated Irregular Networks (TIN). Raster datasets will benefit from the new scriptable geo-processing tools, which can compute parameters such as a ‘remoteness index’ or perform image processing à la ER-Mapper. Temporal modeling provides time-variant representations for SCADA and control rooms.
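ESRI did not describe the mechanics behind long transactions, so the following is only a minimal sketch of the general check-out/check-in idea, under assumptions of our own: each feature carries a version number, a field session copies a subset of features, and check-in applies the field edits only if none of the master copies has changed since check-out (optimistic concurrency). The `Feature`, `MasterStore` and `EditSession` classes are hypothetical and bear no relation to the ArcSDE API.

```python
from dataclasses import dataclass, field

@dataclass
class Feature:
    fid: int
    attrs: dict
    version: int = 0

@dataclass
class EditSession:
    """Hypothetical disconnected copy of part of the dataset, taken into the field."""
    copies: dict
    edited: set = field(default_factory=set)

    def edit(self, fid, **changes):
        self.copies[fid].attrs.update(changes)
        self.edited.add(fid)

@dataclass
class MasterStore:
    """Hypothetical central geodatabase: feature id -> Feature."""
    features: dict = field(default_factory=dict)

    def check_out(self, fids):
        # Copy the requested features, remembering the version each was taken at.
        return EditSession({f: Feature(f, dict(self.features[f].attrs), self.features[f].version)
                            for f in fids})

    def check_in(self, session):
        """Apply all edits if no edited feature changed meanwhile; else return the conflicts."""
        conflicts = sorted(f for f in session.edited
                           if self.features[f].version != session.copies[f].version)
        if conflicts:
            return conflicts  # nothing applied, so the master stays consistent
        for f in session.edited:
            master = self.features[f]
            master.attrs = dict(session.copies[f].attrs)
            master.version += 1
        return []
```

Usage: `session = store.check_out([1])`, then `session.edit(1, status="surveyed")` in the field, and `store.check_in(session)` on return. If another session checked in a change to feature 1 in the meantime, the returned list names the conflicting features and nothing is applied.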

ArcGlobe

Dangermond noted that GIS is moving into the enterprise and that the future for ESRI lies in standards-based GIS. Web Services are at the heart of ESRI’s future offering, which promises greatly increased functionality. Dangermond’s presentation was followed by a dramatic highlight: a demonstration of ESRI’s ArcGlobe, a ‘whizz-bang’ application designed to offer stylish demonstrations of 3D GIS data in the boardroom. Starting from a view from space, you can display various themes in the usual ESRI fashion: satellite imagery, roads, cloud cover etc. A geographical region can be selected from a gazetteer, targeted and zoomed in on. But the innovation of ArcGlobe is that the viewpoint can then seamlessly change from a bird’s-eye view to a fly-through of a digital terrain model. A great toy!

Plans

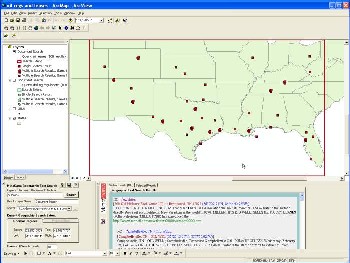

ESRI’s Clint Brown believes that the command line interface remains important to the GIS professional. So are standards and Open Systems. ESRI now offers support for the ISO SQL/MM multimedia (text, spatial and image SQL) standard and the OpenGIS Simple Features model. Brown observed that database BLOB support is now good enough, and fast enough, for image incorporation. A demo of ArcMap 8.3 showed how anomalies detected in pipeline pig surveys could be correlated with the grade of steel used in the pipe. ‘Error rules’ show where boundary conditions (state or license contiguity, for instance) are violated, allowing the user to zoom in and edit. A ‘persistent state model’ in ArcSDE allows for ‘long transactions’: you can unplug a piece of the database for editing, then check it back in. A new network topology module allows spatial information in the Geodatabase to be viewed in both map and schematic forms.

Network image courtesy ESRI.

Network topologies such as pipelines can be visualized in the schematic, with click-through to tabular data on features such as pipes and valves. John Caulkin showed how raster maps of reservoir properties can be integrated intelligently with GIS and used for geoprocessing.
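The ArcMap demonstration correlated pig-survey anomalies with pipe steel grade. One simple way to make that kind of correlation, assuming both datasets are referenced by distance along the line (chainage, a linear referencing convention), is to look up the pipe segment containing each anomaly and tally anomalies per grade. The Python sketch below is illustrative only; the segment layout, grades and sample positions are hypothetical and not taken from the demo.

```python
from bisect import bisect_right
from collections import Counter

# Hypothetical pipe segments as (start_m, end_m, steel_grade), sorted by start
# and non-overlapping; positions are chainage (distance along the line) in metres.
SEGMENTS = [
    (0.0, 1200.0, "X52"),
    (1200.0, 2500.0, "X60"),
    (2500.0, 4000.0, "X52"),
]

def grade_at(chainage_m, segments=SEGMENTS):
    """Return the steel grade of the segment containing chainage_m, or None if off the line."""
    starts = [start for start, _, _ in segments]
    i = bisect_right(starts, chainage_m) - 1  # last segment starting at or before the point
    if i >= 0 and chainage_m < segments[i][1]:
        return segments[i][2]
    return None

def anomalies_by_grade(anomaly_chainages, segments=SEGMENTS):
    """Count pig-survey anomalies per steel grade, ignoring points off the line."""
    grades = (grade_at(c, segments) for c in anomaly_chainages)
    return Counter(g for g in grades if g is not None)

def anomaly_density_per_km(anomaly_chainages, segments=SEGMENTS):
    """Normalize counts by the total pipe length of each grade."""
    counts = anomalies_by_grade(anomaly_chainages, segments)
    length_km = Counter()
    for start, end, grade in segments:
        length_km[grade] += (end - start) / 1000.0
    return {grade: counts[grade] / km for grade, km in length_km.items()}
```

Normalizing by length matters: a grade laid over more of the line will collect more anomalies even if it performs no worse.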

Migration

Occidental’s John Lineham gave a detailed cookbook of what needs to be checked and worked on during an ArcView to Arc8 migration. There is a lot to do, maybe too much. Themes and symbology should be saved as AVL files and read in after migration. Avenue scripts do not port. The Avenue conversion tool brings over 80% of the code, but hand tooling with Visual Basic is then required. Many old Avenue scripts probably contain functionality that is built into Arc8, so check before rewriting code.

CAD-GIS Integration

Bryan Stoltenberg (Blue Sky Development) believes that Computer Aided Design (CAD) data is “trapped in its own methodology and environment.” Likewise GIS is “a world unto itself.” But in pipeline engineering, the two are forced to cohabit despite different philosophies concerning accuracy, coordinate systems etc. Stoltenberg proposes a platform-independent data representation, moving data definitions to a common, open format. Data elements thus defined can be linked to their original source via a unique identifier (UID). A projection manager maintains references between platforms, and a common rendering system addresses objects resident in AutoCAD, MicroStation and ESRI.

Enterprise GIS

Karl Fleischmann (ConocoPhillips Alaska) is a strong advocate of managing GIS data where it belongs—in the database. “Get out of shapefiles and into the data store—spatial is just ‘data’”. Spatial data should be centralized in Oracle, managed with automated processes and accessed in place from ‘shrink-wrap’ tools. ConocoPhillips’ Alaska Technical Database (ATD) manages technical and petro-technical data (including 900GB of image data) using ‘very robust’ security. The ATD uses an ArcSDE data store along with the production data sets. Unix cron jobs build spatial features (such as well deviation surveys) on the fly while ‘interlocking processes’ assure data integrity – for instance by recomputing formation tops.
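The presentation did not say how the cron jobs turn deviation surveys into well-path features. A widely used method for that step is minimum curvature, which converts measured depth (MD), inclination and azimuth stations into north, east and true vertical depth (TVD) offsets by assuming a circular arc between stations. The Python sketch below implements that standard calculation under assumptions of our own (angles in degrees, depths in one consistent unit, offsets relative to the first station); it is not ConocoPhillips’ code.

```python
import math

def minimum_curvature(stations):
    """Convert (md, inclination_deg, azimuth_deg) stations to (north, east, tvd) points.

    Offsets are relative to the first station; a real loader would add the
    surface location and the TVD of the first station.
    """
    path = [(0.0, 0.0, 0.0)]
    for (md1, inc1, az1), (md2, inc2, az2) in zip(stations, stations[1:]):
        i1, a1, i2, a2 = map(math.radians, (inc1, az1, inc2, az2))
        dmd = md2 - md1
        # Dogleg: angle between the borehole directions at the two stations.
        cos_dl = math.cos(i2 - i1) - math.sin(i1) * math.sin(i2) * (1 - math.cos(a2 - a1))
        dogleg = math.acos(max(-1.0, min(1.0, cos_dl)))
        # Ratio factor bends the averaged-tangent estimate onto a circular arc.
        rf = 1.0 if dogleg < 1e-9 else (2.0 / dogleg) * math.tan(dogleg / 2.0)
        n, e, v = path[-1]
        path.append((
            n + dmd / 2 * (math.sin(i1) * math.cos(a1) + math.sin(i2) * math.cos(a2)) * rf,
            e + dmd / 2 * (math.sin(i1) * math.sin(a1) + math.sin(i2) * math.sin(a2)) * rf,
            v + dmd / 2 * (math.cos(i1) + math.cos(i2)) * rf,
        ))
    return path
```

As a sanity check, a build from vertical to horizontal along a quarter circle of radius 1000 (MD = 1000·π/2, azimuth 0) lands 1000 north and 1000 deep, exactly as the circular-arc assumption requires.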

Exprodat

Exprodat’s Gareth Smith believes that GIS is the equivalent of the Moulinex food processor for the upstream. Exprodat has developed a ‘cookbook’ strategy for GIS deployment. The aim should be to provide a simple interface ‘for the masses’ first. This should offer ‘compelling’ content, leveraging high value corporate data and trusted external sources, and should provide linkage to interpretation systems. Next, the ‘back office’ support infrastructure can be established. Exprodat advocates using flat files and native raster imagery to avoid the cost and complexity of SDE. Problems were experienced with the ArcIMS generic Java viewer, so Exprodat developed its own ‘enhanced HTML’ viewer.

IHS Energy

Tor Nielsen gave an insightful presentation on IHS Energy’s Common Architecture Project, a new IT architecture based on J2EE, Web Services and ESRI GIS technologies. IHS Energy’s new web architecture will be a common source for graphs, reports, queries, maps, entitlements and authentication. The first web/GIS application, EDIN-GIS, the GIS version of the IHS Energy Data Information Navigator, has just been released. This ArcIMS HTML viewer (not the Java implementation) is deployed with JavaScript for client-side functionality. IHS Energy is working with Shell to provide 24x7 access around the world to its datasets (“no more CDs!”). A bespoke layer has been created to Shell’s specifications showing Shell’s partnerships and interests. Nielsen observed that cross-dataset query implies consistent or known units of measure (UOM) and nomenclature. “There are many matching issues – there is no easy answer”. Security across multiple clients’ firewall policies has proved problematic.
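Nielsen’s point about units of measure is easy to illustrate: before depths from two datasets can be compared, they must be expressed in one unit. The Python sketch below normalizes hypothetical well records carrying a unit tag to metres and flags wells whose sources still disagree. The record layout, unit codes, well names and 0.5 m tolerance are assumptions for illustration; only the conversion factor (1 international foot = 0.3048 m exactly) is a fixed standard value. Reconciling nomenclature, the other half of the problem, is not attempted.

```python
# Conversion factors to metres; "ft" is the international foot.
TO_METRES = {"m": 1.0, "ft": 0.3048}

def to_metres(value, unit):
    """Convert a length to metres, rejecting unknown unit codes instead of guessing."""
    try:
        return value * TO_METRES[unit]
    except KeyError:
        raise ValueError(f"unknown unit of measure: {unit!r}") from None

def merge_total_depths(*datasets):
    """Combine {well_id: (depth, unit)} dicts into {well_id: [depths in metres]}."""
    merged = {}
    for data in datasets:
        for well, (depth, unit) in data.items():
            merged.setdefault(well, []).append(to_metres(depth, unit))
    return merged

def inconsistent_wells(merged, tol_m=0.5):
    """Wells whose normalized depths from different sources differ by more than tol_m."""
    return sorted(w for w, depths in merged.items() if max(depths) - min(depths) > tol_m)
```

For example, 10000 ft from one vendor and 3048 m from another agree once normalized, while a depth tagged "ft" in one source but "m" in another shows up as an inconsistency rather than silently corrupting a query.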

Open Source

On the economic front, IHS Energy deems Linux to be ‘very interesting’, although IHS ‘will stay with Sun for the next couple of years’. The price difference is, however, ‘too big to ignore’. Another sortie into Open Source territory involves trialing MySQL, the Open Source database management system. Oracle is ‘very expensive’ for multiple offices and data centers due to its CPU-based licensing. ESRI is porting major pieces of code to Linux/MySQL. Finally, Nielsen gave a thumbs-up to the Apache Jakarta Tomcat and JBoss components, which ‘can take you a fair way along the road.’

Landmark

According to Robert Warford, Landmark sank around $1 million into ArcView extensions, notably for OpenExplorer. Then “along came Arc8!” Landmark is a big Unix/Java shop and selected MapObjects, which offers “impressive lightweight, robust, quickly built applications. The network becomes the access protocol”. MapObjects is deployed in the top tier of the Surf & Connect Web edition. ArcIMS has brought seismic data visualization to the desktop and will be extended to other data types. MapObjects makes Surf & Connect platform independent, running on Windows, Linux and UNIX. With MapObjects, maps become scale sensitive (more detail is revealed on zoom) and gridded data from OpenWorks can be displayed. Shell’s Bryan Prather demoed the Surf & Connect Web edition, displaying offshore blocks from Shell’s New Orleans server and wellbore trajectories from Houston.
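Scale-sensitive display of the kind Warford described is commonly driven by per-layer visibility ranges: a layer is drawn only when the current map scale falls inside its range. The Python sketch below shows that logic in isolation. The layer names and thresholds are hypothetical, the min/max naming follows a common GIS convention that we assume here, and MapObjects’ own API is not shown.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Layer:
    name: str
    # Scales are denominators of 1:N, so a larger N means zoomed further out.
    min_scale: Optional[float] = None  # hidden when zoomed out beyond 1:min_scale
    max_scale: Optional[float] = None  # hidden when zoomed in beyond 1:max_scale

    def visible_at(self, scale_denominator: float) -> bool:
        if self.min_scale is not None and scale_denominator > self.min_scale:
            return False
        if self.max_scale is not None and scale_denominator < self.max_scale:
            return False
        return True

# Hypothetical layer stack: more detail appears as the user zooms in.
LAYERS = [
    Layer("lease blocks"),
    Layer("well spots", min_scale=2_000_000),
    Layer("well labels", min_scale=250_000),
    Layer("gridded horizon", min_scale=500_000, max_scale=10_000),
]

def visible_layers(scale_denominator, layers=LAYERS):
    """Names of the layers to draw at the given map scale (1:scale_denominator)."""
    return [layer.name for layer in layers if layer.visible_at(scale_denominator)]
```

At 1:5,000,000 only the lease blocks are drawn; zooming in to 1:100,000 adds well spots, labels and the gridded surface, which is then dropped again below 1:10,000 where it would be too coarse to be useful.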

Marathon

Joe Kostecka (Marathon) and Darcy Vaughn (PetroWeb) provided an update on Marathon’s dual level portal deployment. Marathon uses several ‘second level’ vendor portals from Paradigm, Tobin and Landmark. All these are accessible through the top-level PetroWeb portal. GIS is a common interface to all data. Vaughn described how PetroWeb provides access to distributed data stores and how proprietary stuff like land positions remains within Marathon’s firewall. PetroWeb uses a ‘firewall friendly’ thin client browser offering easy deployment and satisfying corporate IT security. PetroWeb tried the ‘thick client’ (Java MapObjects) before abandoning it. ‘There is little which can’t be done on a thin client’. ArcIMS is the publishing solution for all GIS. This blends multi-source data at the desktop from around 40 data vendors that have arrangements with PetroWeb. ESRI has given a ‘big kick-start’ to spatial data provision. Shape files are a thing of the past—data is now served from the spatial database.

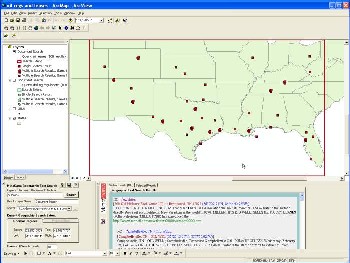

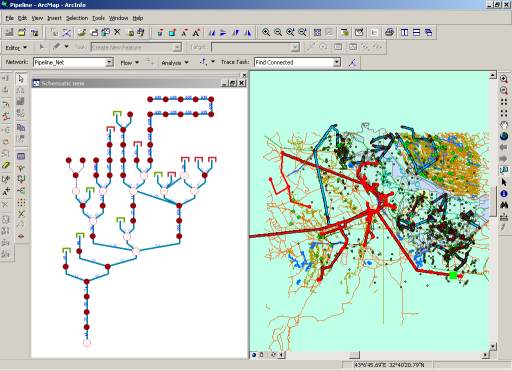

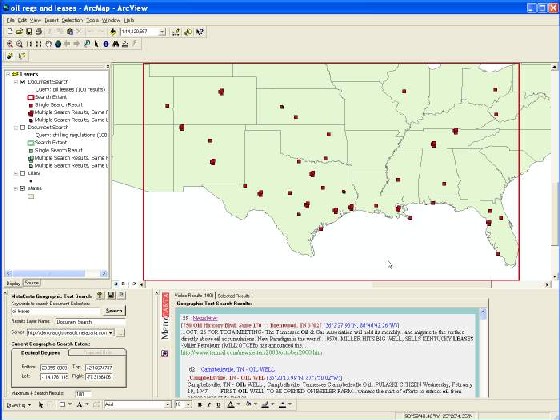

MetaCarta

GIS/Text Search - image courtesy MetaCarta.

MetaCarta’s GIS-constrained text searching technology (see below) gets our virtual ‘star of the show’ award. MetaCarta couples GIS and text by integrating a geographical place-name lexicon with a text search engine. Office and HTML documents are indexed by MetaCarta’s Geographical Text Search engine such that all place names recognized in free-text documents are spatially located. This allows interesting to-and-fro searching between text and spatially delineated areas.
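The coupling described here can be illustrated with a toy gazetteer: scan each document for known place names, attach their coordinates, then answer a map-to-text query such as ‘which documents mention somewhere inside this box?’. The Python sketch below is not MetaCarta’s engine. The three-entry gazetteer uses approximate city coordinates, the exact-name matching ignores the ambiguity resolution a real geoparser must do, and the box test assumes the box does not cross the 180° meridian.

```python
import re

# Toy gazetteer: place name -> (latitude, longitude), approximate city-centre values.
GAZETTEER = {
    "Aberdeen": (57.15, -2.11),
    "Stavanger": (58.97, 5.73),
    "Houston": (29.76, -95.37),
}

# One regular expression matching any gazetteer name as a whole word.
_PATTERN = re.compile(r"\b(" + "|".join(map(re.escape, GAZETTEER)) + r")\b")

def geotag(text):
    """Text-to-map: {place: (lat, lon)} for every gazetteer name found in the text."""
    return {name: GAZETTEER[name] for name in _PATTERN.findall(text)}

def index_documents(docs):
    """Build {doc_id: {place: (lat, lon)}} from a dict of doc_id -> text."""
    return {doc_id: geotag(text) for doc_id, text in docs.items()}

def documents_in_box(index, south, west, north, east):
    """Map-to-text: ids of documents mentioning a place inside the bounding box."""
    return sorted(
        doc_id for doc_id, places in index.items()
        if any(south <= lat <= north and west <= lon <= east for lat, lon in places.values())
    )
```

Drawing a box roughly around the North Sea (55 to 62° N, 5° W to 10° E) would return memos mentioning Aberdeen or Stavanger but not one that only mentions Houston.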

Geocoding

We spotted an interesting geocoding application from Paradigm (pdigm.com). Paradigm’s community awareness service uses pipeline centerline data to locate people living within a specified distance of the line. This can save considerable postal costs over bulk mailings to the postal carrier route or to the whole zip code. Another example of innovative GIS use came from PBSJ, whose corridor constraints analysis helps environmental workers permit oil and gas pipelines. R7 Solutions has just released GeoRoom, a front end to data in disparate datastores such as Finder and P2000. The software offers the casual data room user a single entry point to spatial data, avoiding multiple interfaces. Amerada Hess is a user. GeoRoom uses Microsoft SharePoint Services and has hooks into FileNet (Hess’ document management system).
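Finding people ‘within a specified distance’ of a pipeline comes down to a point-to-polyline distance test. The Python sketch below assumes coordinates already projected to metres (for example a UTM zone) so that planar distance is meaningful. The centerline, address ids and threshold are hypothetical, and a production system would use a spatial index rather than testing every segment.

```python
import math

def point_segment_distance(p, a, b):
    """Planar distance from point p to segment ab, all (x, y) tuples in metres."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    seg_len2 = dx * dx + dy * dy
    if seg_len2 == 0.0:  # degenerate segment: distance to its single point
        return math.hypot(px - ax, py - ay)
    # Project p onto the segment's line, clamping to the segment's end points.
    t = max(0.0, min(1.0, ((px - ax) * dx + (py - ay) * dy) / seg_len2))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))

def distance_to_centerline(p, centerline):
    """Shortest distance from p to a polyline given as a list of vertices."""
    return min(point_segment_distance(p, a, b) for a, b in zip(centerline, centerline[1:]))

def addresses_within(addresses, centerline, max_m):
    """Ids of addresses lying within max_m metres of the pipeline centerline."""
    return sorted(aid for aid, xy in addresses.items()
                  if distance_to_centerline(xy, centerline) <= max_m)
```

The clamping step is what makes this a segment distance rather than a line distance: a house beyond the end of a straight run is measured to the nearest vertex, not to an imaginary extension of the pipe.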

Tobin

Tobin’s Vendor Data Index allows users to search data from multiple vendors from a single map interface and to overlay and compare it with in-house data. Information on data located through the WebViewer can be listed as header information or viewed as a spatial representation. Data types include seismic, well logs, production and other Tobin data.

MJ Harden

MJ Harden has developed a new field data collection tool for pipeline engineers and is working with ESRI on pipeline-specific schematics. ArcPad is now embedded in MJ Harden applications.

This article has been abstracted from a 24-page illustrated report produced as part of The Data Room’s Technology Watch service. For more information email info@oilit.com.

Steve Lohr’s book ‘GO TO—Software Superheroes’ is a treasure trove of anecdotes and insight into programming from the dawn of time (i.e. circa 1950) to the internet age. Today we take for granted the fact that by dragging and dropping a few controls, or by cobbling together a few lines of code, we can make machines do wondrous things. Back in the 1950s things were very different.

FORTRAN

It took true software superheroes both to design the language and to write the first FORTRAN compilers. At the time, most machine code hackers doubted that a machine could generate code to match their carefully hand-crafted programs. There was universal surprise when John Backus’ ‘high-level language’ actually ran nearly as fast as hand-written machine code. Incidentally, IBM’s FORTRAN was openly distributed without charge.

IBM 360

IBM was pretty much in the programming driving seat through the 1960s and was first to experience trouble as machines and compilers grew in complexity. The IBM 360 was a $500 million ‘software morass’ before it emerged as ‘one of the business success stories of the postwar era’.

UNIX

The IBM 360 narrative thread leads to the next superhero, Ken Thompson, who introduced a distinctly sixties ethos. UNIX was developed in part as a reaction to IBM’s ‘authoritarian technocracy despised by so many students.’ Thompson studied the 360 manual (while driving down the freeway from Berkeley!) and realized that much could be done to simplify the developer’s task. One big breakthrough came when Doug McIlroy implemented UNIX ‘pipes’, designed to ‘connect programs like garden hose’.

BASIC

GO TO gets behind the scenes of compiler development—for instance, Tom Kurtz and John Kemeny of Dartmouth College were inspired by C.P. Snow’s seminal work “The Two Cultures”. This convinced them of the need for programming to be part of the liberal arts curriculum—thus was BASIC born.

Office Automation

The tale of Microsoft’s rise to fame and fortune begins not with Bill Gates but with Charles Simonyi, a Hungarian hacker who cut his programming teeth on a Russian Ural II computer. After fleeing Hungary, Simonyi gravitated, like many others, to the IT Mecca that was Xerox’s Palo Alto Research Center (PARC), where he worked on embryonic WYSIWYG editors. A peek at Dan Bricklin’s VisiCalc convinced Simonyi of the potential of what we now know as Office Automation. Subsequent meetings with Bill Gates made the rest ‘history’.

Successes

GO TO includes accounts of the births of Java, C++, Tim Berners-Lee’s HTML and the World Wide Web. GO TO offers real insight into what makes a computer language succeed, from BASIC’s unlikely success to worthy failures such as ALGOL. Good design is not enough; languages must perform and satisfy real-world developers. Conversely, a poor implementation can become a world beater given the right combination of timing and marketing! GO TO is full of forerunners of today’s technical and commercial debates.

Wilkes

All in all, GO TO manages to be simultaneously erudite and entertaining. Read it if you are interested in how we got where we are today, and how little is really new under the IT sun. Talking of which, Cambridge University IT pioneer Maurice Wilkes observed that “it was not so easy to get a program right first time and that a good part of my life was going to be spent finding errors in my own programs”. Thus was the gentle art of debugging born, back in 1949!

GO TO—Software Superheroes, Steve Lohr/Perseus Books 2002. ISBN 1 86197 243 1.

Since we last reported on the Public Petroleum Data Model Association’s (PPDM) ongoing Spatial Enabling project there have been some changes. We understand that the close-coupling of the PPDM database with ESRI’s Geodatabase has been abandoned in favor of a more vendor-independent approach.

Linear referencing

3D spatial data for objects such as deviated wells and seismic lines is stored in the Spatial II project using the concept of linear referencing. This allows a single spatial geometry set to be created for a well, and any attribute can then be accurately positioned in space through its measured depth.
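Linear referencing can be illustrated with a small Python sketch, assuming a hypothetical well path stored as survey stations of (measured depth, x, y, TVD). Attributes such as formation picks carry only a measured depth, and their position is derived from the single well geometry. Straight-line interpolation between stations is our own simplification, not something taken from the PPDM specification; real well paths are usually computed with methods such as minimum curvature.

```python
from bisect import bisect_right

# Hypothetical deviated-well geometry: (measured depth, x, y, tvd), MD increasing.
stations = [
    (0.0,    0.0,   0.0,    0.0),
    (1000.0, 0.0,   0.0, 1000.0),
    (2000.0, 600.0, 0.0, 1800.0),
]

def locate(md, stations):
    """Return (x, y, tvd) at measured depth md by linear interpolation between stations."""
    mds = [s[0] for s in stations]
    if not mds[0] <= md <= mds[-1]:
        raise ValueError(f"MD {md} outside well path")
    i = bisect_right(mds, md) - 1          # index of the station at or above md
    if i == len(stations) - 1:             # exactly at the last station
        return tuple(stations[-1][1:])
    (md0, *p0), (md1, *p1) = stations[i], stations[i + 1]
    f = (md - md0) / (md1 - md0)           # fraction of the way along this interval
    return tuple(a + f * (b - a) for a, b in zip(p0, p1))

# Attributes are stored only as MD; position comes from the shared well geometry.
picks = {"Top Reservoir": 1500.0, "Base Reservoir": 1750.0}
for name, md in picks.items():
    print(name, locate(md, stations))
# Top Reservoir (300.0, 0.0, 1400.0)
# Base Reservoir (450.0, 0.0, 1600.0)
```

If the well path is later resurveyed, only `stations` changes; every depth-referenced attribute is repositioned automatically.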

Geodatabase?

Oil IT Journal understands that PPDM took a close look at the Geodatabase and found it wanting in two key areas—its inability to leverage compound keys (widely deployed in the PPDM model) and its limited support for reference values. PPDM spatial data is thus decoupled from the particular GIS technology deployed.

International Petrodata

One enthusiastic user of the PPDM Spatial techniques is Brad Dick of International Petrodata (IPD). IPD has successfully spatially enabled its well, pipeline and other databases using PPDM’s methodology. Dick observed, “Providing data to our clients from a PPDM database using ESRI’s Spatial Data Engine (SDE) allows access to our data in a standard data model from the GIS tools our clients know”.

Schlumberger Information Solutions (SIS) and UK-based Foster Findlay Associates (FFA) have integrated seismic image processing technology into SIS’s GeoViz seismic visualization tool. FFA’s SEA 3D (Seismic Engineering Application) extracts structural and stratigraphic characteristics from seismic data volumes. SEA 3D is based on FFA’s image processing and analysis (IPA) technology.

GeoViz API

FFA has leveraged the new GeoViz application programming interface (API) to process seismic data volumes loaded into GeoViz. The results can be visualized in a 3D view. SEA 3D offers quick-look investigation of sedimentary environments, trends and geometries at both field and basin scales. GeoViz offers seismic data volume roaming and cursor visualization à la Magic Earth. SIS claims to have over 2500 GeoViz users throughout the world.

Knowledge Systems Inc. (KSI) recently announced sales to three major oil companies. KSI is to supply its Drillworks/Predict (D/P) geopressure analysis tool to Shell for integration into the global Shell Software Suite. D/P geopressure analysis can be performed before or during drilling and is used to predict geopressures and improve drilling operations. The deal includes ongoing training for Shell’s drilling engineers.

Statoil

In a separate deal, Statoil reported take-up of KSI’s software. Statoil drilling engineer Jan Skagen said, “KSI’s GeoStress technology will be used to compute rock strength and stress conditions, perform wellbore stability analysis, and to fine-tune mud weights and casing depths.” Reporting on its past use of KSI tools, Pemex engineer Jorge Mancilla said, “Pemex uses state-of-the-art technology for well planning and has been a Drillworks client for three years. We use the software to estimate pore pressures and fracture gradients from seismics.”
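For readers unfamiliar with pore pressure prediction from seismic velocities, one widely published approach is Eaton’s ratio method, sketched below in Python. We do not know whether Drillworks or GeoStress implement it this way; the gradients and velocities in the example are invented, and the exponent of 3 is the value commonly quoted for velocity (sonic) data.

```python
def eaton_pore_pressure_gradient(obg, normal_gradient, v_obs, v_normal, exponent=3.0):
    """Eaton-style pore pressure gradient:
    Pp = OBG - (OBG - Pn) * (v_obs / v_normal) ** exponent

    obg             -- overburden gradient (psi/ft)
    normal_gradient -- normal (hydrostatic) pore pressure gradient (psi/ft)
    v_obs           -- observed interval velocity, e.g. from seismic (ft/s)
    v_normal        -- velocity on the normal compaction trend at the same depth (ft/s)
    """
    # Velocities slower than the normal trend (ratio < 1) indicate undercompaction,
    # so the predicted pore pressure rises above hydrostatic.
    return obg - (obg - normal_gradient) * (v_obs / v_normal) ** exponent

def psi_per_ft_to_ppg(gradient):
    """Convert a pressure gradient in psi/ft to an equivalent mud weight in lb/gal."""
    return gradient / 0.052

# Hypothetical interval: seismic velocity 10% slower than the normal trend.
pp = eaton_pore_pressure_gradient(obg=1.0, normal_gradient=0.465,
                                  v_obs=9000.0, v_normal=10000.0)
print(f"{pp:.3f} psi/ft = {psi_per_ft_to_ppg(pp):.1f} ppg")  # 0.610 psi/ft = 11.7 ppg
```

The equivalent mud weight is the kind of figure that feeds directly into the mud weight and casing depth decisions mentioned above.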

Texas Computer Works (TCW) is to offer a new production evaluation application, ProdEval, via the Internet. ProdEval will be available from the PetrisWINDS NOW! application services provider (ASP) platform. ProdEval combines production management with economics for cash flow analysis. ProdEval imports production data through TCW’s own data collection system, SCADA, direct entry, public data sources or internal corporate data stores. ProdEval can be used to forecast future production rates and reserve life and can export financial data to accounting systems.
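Production forecasting and reserve life are classically tied together through decline curve analysis. The following Python sketch uses Arps’ exponential decline, q(t) = qi * exp(-D t), purely as an illustration; we do not know which decline models ProdEval offers, and the initial rate, decline and economic limit below are hypothetical.

```python
import math

DAYS_PER_YEAR = 365.0

def forecast_rates(qi, decline, years):
    """Rate (bbl/d) at the start of each year: q(t) = qi * exp(-D * t), D a nominal annual decline."""
    return [qi * math.exp(-decline * t) for t in range(years + 1)]

def reserve_life(qi, decline, q_limit):
    """Years until the rate falls to the economic limit q_limit."""
    return math.log(qi / q_limit) / decline

def remaining_reserves(qi, decline, q_limit):
    """Volume (bbl) produced while the rate declines from qi to q_limit.

    Integrating qi * exp(-D t) up to the economic-limit time gives (qi - q_limit) / D.
    Rates are in bbl/d and D is per year, so multiply by days per year.
    """
    return (qi - q_limit) * DAYS_PER_YEAR / decline

qi, D, q_limit = 500.0, 0.20, 50.0
print([round(q) for q in forecast_rates(qi, D, 3)])  # [500, 409, 335, 274]
print(f"life {reserve_life(qi, D, q_limit):.1f} yr, "
      f"reserves {remaining_reserves(qi, D, q_limit):,.0f} bbl")  # life 11.5 yr, reserves 821,250 bbl
```

Multiplying such a forecast by price and cost assumptions gives the cash flow analysis that a tool combining production management with economics would report.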

Eversen

TCW president Harold Eversen said, “Efficient production information management separates the more profitable companies from the rest. Without a good system in place, real opportunities to increase stockholder value are lost.”

PetrisWINDS features vendor-neutral, on-demand software to meet the different business needs of a user organization. ASP services can be provided via the Internet or as an in-house managed solution behind a company’s firewall.

De Groot-Bril Earth Sciences has just released a major new version of its d-Tect seismic interpretation tool. d-Tect v1.5 features enhanced visualization capabilities, including support for SGI systems. d-Tect leverages Statoil’s object detection engine to deliver chimney cubes, fault cubes, median-dip-filtered cubes and other data volumes.

Ark CLS

d-Tect accesses data in OpenWorks and GeoFrame via Ark CLS’ IdealLink. The latest release includes volume rendering, random lines, improved color and opacity editing and other enhancements. More from www.dgb.nl.

Last month BP made significant updates to its corporate website, including new interactive data views. BP provides online transcriptions of its presentations to analysts and is now offering surfers the chance to customize their interface to financial and HSE data in a new push for greater transparency.

Investis

New customizable interactive charting from UK-based investor relations specialist Investis leverages Microsoft Active Server Pages and signed Java applets to serve figures on BP’s financial, environmental and social performance. Data can be analyzed directly online, ‘eliminating the need for spreadsheets and chart wizards’. The interactive analyst tool also lets users track BP’s share price and access the regulatory filing service. One wonders where all this will lead: when, for instance, will companies offer online access to daily production data?

Tecplot Reservoir Simulation (RS) extends the functionality of Tecplot, Amtec’s flagship plotting software. Tecplot RS can load and display data from Schlumberger’s Eclipse and FrontSim or ChevronTexaco’s Chears simulator. Multiple variables for any entity (such as a well, injector, or field), or multiple entities for any variable can be plotted. Multiple plots can be made on a single page.

Streamlines

Other pre-built displays include completion profile plots (variables versus completion), 3-D color-flooded grid plots with wells, bubble maps and streamlines. Data from multiple data sets can be compared, and generic Tecplot functionality is available for data exploration by probing, slicing, blanking and creating iso-surfaces. Tecplot RS plots may be animated, automated, exported to the Web or printed to publication quality. Tecplot operations can be automated by integrated macros that may be run interactively or in batch mode.

Landmark has just renewed its worldwide technology agreement with BP. The new three-year agreement includes a range of Landmark’s integrated geological, geophysical, reservoir simulation, drilling, well engineering and project data management software. Landmark will also continue to provide technical support, training and consulting services.

Peacock

BP VP Steve Peacock said, “Landmark’s integrated software forms a key part of our work processes across petrotechnical disciplines and all parts of the value chain.” BP and Landmark have been working closely together in developing advanced 3D and 4D visualization and interpretation, interpretive seismic processing, petrophysical analysis and dynamic reservoir modeling applications that enable improved drilling success and enhanced production from existing fields. Landmark president Andy Lane added, “This demonstrates the value that Landmark technology brings to BP’s business and the results obtained by combining BP’s E&P expertise with our integrated software.”

Fugro-unit Jason Geosystems has licensed its strangely-named “3DiQ” technology to ChevronTexaco. Jason’s 3D integrated Quantitative Reservoir Characterization software algorithms make up the core of Jason’s Geoscience Workbench. The deal comprises modeling and inversion tools and Jason’s EPlus analysis and visualization front-end. Recently-introduced Linux and parallel processing capabilities will be used to ‘expedite analysis in larger survey areas’.

Calgary-based electroBusiness has signed an agreement with service-sector grouping OFS Portal for the provision of a standards-based electronic transaction infrastructure. The deal will provide a framework for members of OFS Portal to transact electronically with trading partners. OFS Portal members will be able to exchange electronic documents with their clients using electroBusiness’ e-Business Utility platform. “We are excited to be working with OFS Portal to support their members’ electronic invoicing processes and to further promote the PIDX standards. This agreement represents a great opportunity for electroBusiness, and the member companies of OFS Portal,” said Cal Fairbanks, CEO of electroBusiness.

Ownership

The agreement further seeks to clarify ownership of transactional data, which is confirmed as the sole property of OFS Portal members and their clients. OFS Portal has been instrumental in the development of industry-wide electronic classification and transaction standards through the Petroleum Industry Data Exchange (PIDX) Committee of the American Petroleum Institute. The parties will continue to cooperate on developing and supporting industry-wide electronic data standards. Bill Le Sage, OFS Portal CEO, said, “This deal advances e-business for the members of OFS Portal and electroBusiness by streamlining the payment cycle, improving productivity and reducing costs”.

At the ESRI PUG, Schlumberger was showing off its latest GIS-based data access tool, ProSource. ProSource, a high-end, data-management-focused GIS tool, and its web-based PointSource version will be available for general release shortly. PointSource leverages ESRI’s ArcIMS distributed GIS, which has been extensively tested in SIS’ IndigoPool acquisition and divestment portal. ProSource ‘browses and manages information in multiple distributed repositories’. The ProSource interface can be customized to display multiple data types and to integrate users’ workflows. Information management professionals need to find, create, edit and manage data in these disparate information sources quickly and easily.

Integration Engine

ProSource leverages new technology developed by SIS, the Schlumberger Integration Engine (SIE), which provides a common integration framework supporting common data access and metadata definitions for multi-domain, multi-datastore access and management. SIE deploys web services-based user management for single sign-on, security and entitlement. Abstraction layers provide configurable integration with various enabling technologies, web servers, application servers and portal technologies. The SIE promises a ‘straightforward way to configure access to information in any data store, relational or non-relational’.
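The idea of a common access layer over heterogeneous stores can be sketched generically in Python. None of the names below (`WellStore`, `SqlWellStore`, `DocWellStore`, `find_well`) come from SIE, whose interfaces we have not seen; the sketch simply maps one relational backend (an in-memory SQLite table) and one non-relational backend (documents keyed by well identifier) onto a single metadata definition, with the list of configured stores standing in for configuration.

```python
import sqlite3
from typing import Optional, Protocol

class WellStore(Protocol):
    """Hypothetical common access contract that every backend adapter satisfies."""
    def get_well(self, uwi: str) -> Optional[dict]: ...

class SqlWellStore:
    """Relational backend: wells live in a SQL table."""
    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn

    def get_well(self, uwi: str) -> Optional[dict]:
        row = self.conn.execute(
            "SELECT uwi, name, td FROM well WHERE uwi = ?", (uwi,)).fetchone()
        return None if row is None else {"uwi": row[0], "name": row[1], "td": row[2]}

class DocWellStore:
    """Non-relational backend: documents keyed by UWI, with their own field names."""
    def __init__(self, docs: dict):
        self.docs = docs

    def get_well(self, uwi: str) -> Optional[dict]:
        doc = self.docs.get(uwi)
        # Map backend-specific field names onto the common metadata definition.
        return None if doc is None else {"uwi": uwi, "name": doc["wellName"], "td": doc["totalDepth"]}

def find_well(stores: list, uwi: str) -> Optional[dict]:
    """Query each configured store in turn; the caller never sees which backend answered."""
    for store in stores:
        well = store.get_well(uwi)
        if well is not None:
            return well
    return None

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE well (uwi TEXT PRIMARY KEY, name TEXT, td REAL)")
conn.execute("INSERT INTO well VALUES ('W-001', 'Alpha 1', 3200.0)")
stores = [SqlWellStore(conn),
          DocWellStore({"W-002": {"wellName": "Bravo 2", "totalDepth": 4100.0}})]
print(find_well(stores, "W-001"))  # {'uwi': 'W-001', 'name': 'Alpha 1', 'td': 3200.0}
print(find_well(stores, "W-002"))  # {'uwi': 'W-002', 'name': 'Bravo 2', 'td': 4100.0}
```

Adding another repository means writing one more adapter class; callers of `find_well` do not change, which is the point of placing an abstraction layer in front of disparate data stores.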

Calgary-based Petro-Soft reports the successful completion of a two-year project to replace ExxonMobil’s legacy production reporting system. Petro-Soft’s Phoenix reserves management system has now replaced systems employed by the corporation’s predecessor companies. The Oracle-based reserves management solution was used by ExxonMobil to compile and report its 2002 global reserves replacement figures. ExxonMobil’s requirements included internal and external reporting, global units of measure conversion, historical data migration and the ability to work on stand-alone PCs as well as on the corporation’s worldwide distributed database network.

Knill

Petro-Soft president Colin Knill credited the success of the project to ‘cooperative development’ saying, “Through our study of ExxonMobil’s exacting reserves management and reporting requirements, Phoenix has been designed as a flexible tool that meets global reserve tracking and reporting needs, and which can be easily customized to meet future needs”. After two years of development, Phoenix was rolled out in August 2002. The project included enhancements to the base system, testing, historical data migration and data loading. More from www.petro-soft.com.