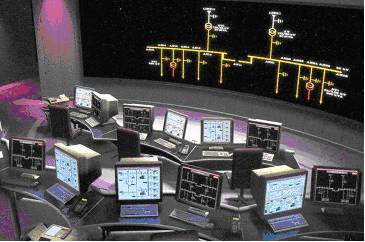

SCADA control room (courtesy Invensys)

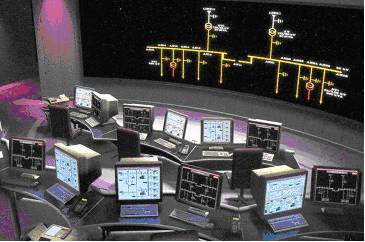

SCADA control room (courtesy Invensys)

2002 produced a mixed bag of results for upstream service companies. For the majors, Halliburton and Schlumberger, upstream IT financials are somewhat masked by reporting from the behemoths’ other activities. However, results from the smaller software houses and patchy reporting from the majors’ IT sectors suggest that software is a more robust business than construction or consulting.

Schlumberger

Schlumberger Oilfield Services revenue for 2002 was $9.3 billion, 5% down from 2001. There are no specifics on earnings from the Information Solutions unit, but the notes contain a couple of interesting historical tidbits: Schlumberger paid $18 million cash for Inside Reality, while the price paid for Petrel turns out to have been a modest $8 million cash plus $60 million in Schlumberger stock.

Halliburton

Energy Services Group revenue for 2002 was $6.8 billion, down 12% from 2001. Again, there are no hard numbers for the software sector, but Landmark’s yearly operating income increased over ‘100 percent compared to 2001’ and quarterly ‘over 150 percent sequentially on increased revenue, primarily in software-related sales’. Both the revenue and operating income results were the highest in any quarter in Landmark’s history.

Baker Hughes

Revenue for the year 2002 was $5,020 million, 2% down on 2001. Baker Hughes bought back and retired approximately 500,000 of its own shares during 4th qtr 2002.

Veritas

Veritas’ second quarter (ending 1/31/03) showed revenue stabilized at a ‘relatively healthy’ level considering the current market environment. During 2002, Veritas acquired Advanced Data Solutions for $8 million and bought back $9 million of its own stock.

Core Lab

Core Lab reported record quarterly revenues of over $100 million. Reservoir Management posted its most profitable quarter in over three years, the result of ‘refocusing on proprietary, integrated reservoir solutions’.

TGS-NOPEC

TGS-NOPEC posted net revenues for 2002 of $124 million, down 3% from 2001. The A2D subsidiary was declared ‘profitable for the year including the cost of goodwill amortization’.

Speaking to analysts earlier this month, PGS CEO Svein Rennemo described 2002 as a “challenging and turbulent year” with continued over-capacity in seismics and the termination of the attempted merger with Veritas. Rennemo noted an “unfavorable trading environment” with a 6% decline in seismic sq. km. and a 12% streamer count reduction.

Legal protection?

Rennemo stated that PGS was involved in ongoing discussions with its creditor banks and bondholders to “realign its debt burden to debt capacity”. Rennemo added that “legal protection may be necessary and effective for full creditor alignment.”

Suspended

Immediately following the conference call, PGS’ American Depositary Shares were suspended from the New York Stock Exchange. The shares have traded below the NYSE’s minimum security price over 30 consecutive trading days. In view of PGS’ earnings announcement, the Company has now also fallen below NYSE continued listing standards.

When we started out producing Oil IT Journal’s ancestral Petroleum Data Manager way back in 1996, the material that constituted the newsletter was basically anything we could lay our hands on. What you saw was what we got! Today, as the Journal gets better-known, we receive input from many different sources. We also now know where to look and who to talk to for news and views. Our workflow has evolved from monthly scraping of the barrel’s bottom to—well editing! Making a lot of calls as to what you want to read about, what’s hot and what’s not.

Overflow

Over the last couple of months you will have noticed how our overflow coverage is moving into our ‘Folks Offices & Orgs’ section. Sometimes I think that we could fill two issues a month but I suspect that for most readers, once a month is enough. It sure is for me!

SCADA

Deciding what goes in and what to leave out can be difficult. Our coverage started out in the exploration sector, but has evolved to encompass oil and gas e-business, corporate IT, GIS and pipelines. As the reservoir model is increasingly the focal point of the e-field, it seemed natural to check out the SCADA arena, to see how computerized data acquisition and control systems are being used to ‘drive’ assets harder and maximize value.

Refining?

But as we follow the SCADA thread—we end up in the refinery! Now I must admit that I have always considered refining to be off-limits to Oil IT Journal. All I know about refineries is that they look pretty at night and they smell bad. I used to think that while some domain boundaries might be blurred, at least refining is well demarcated and probably a good thing to avoid.

Off-topic?

So while deciding ‘what goes in and what goes out’ for this month’s edition, I initially had no difficulty in redirecting one press release to the bit bucket. The fact that BP had signed with Aspen Technologies for its simulation and optimizing suite was conveniently ‘off topic’.

Portfolio management

And yet, reading more closely, this software is not limited to refinery engineering, but supports ‘model-based decision making across upstream, refining and chemicals’. I thought that this was kind of noteworthy. After all, there is no reason to have one special kind of decision support software for upstream and another for refineries. Fluid flow in pipes involves the same kind of physics whether the pipes are vertical well-bores or lead to, within and from a refinery. So the Aspen/BP deal story made it in (see page 9).

COTS

Our big take-home from the IBC EU SCADA show was the growing significance of commercial off-the-shelf (COTS) hardware. SCADA control points are in the process of migrating from proprietary systems to internet-enabled COTS-based components. As we saw at last year’s SPE, wireless SCADA is a powerful new technology for tying-in outlying data sources. All you need is COTS, the internet and electricity: the ‘COTS, ping and power’ of our title.

Carnegie-Mellon’s Coke machine

Once all your SCADA control points (and your subsea geophones, down-hole meters and fiber optics) are accessible through their own IP address, suddenly IT takes on a different aspect. I discovered this for myself a while back when experimenting with Visual Basic and some OCX controls for internet access. Believe it or not, it is possible, with about three lines of Visual Basic, to discover the ‘status’ of the Coca-Cola machine in the IT faculty of Carnegie Mellon university! This functionality has been available since the mid seventies—making it perhaps the earliest use of ‘web services’. Data sources with their own IP addresses are set to revolutionize corporate IT. Engineers and programmers need no longer be concerned as to whether systems are ‘integrated’. If they are IP-based, they are accessible, particularly to another kind of COTS—component software, whether in the form of development tools or the good old internet browser.
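To give a feel for how little code such a status query needs, here is a minimal Python sketch (not the Visual Basic original) that sends a one-line text query to an IP-addressable device over TCP and reads back its reply. The function name `query_status`, the host name, the port and the query string are all assumptions made for illustration; they do not describe the real Carnegie Mellon service or any particular SCADA product.

```python
import socket


def query_status(host: str, port: int, query: str = "", timeout: float = 5.0) -> str:
    """Send a one-line text query to a device and return its complete reply."""
    with socket.create_connection((host, port), timeout=timeout) as conn:
        # Many simple text protocols expect the request to end with CRLF.
        conn.sendall(query.encode("ascii") + b"\r\n")
        chunks = []
        while True:
            data = conn.recv(4096)
            if not data:  # the device closed the connection: reply is complete
                break
            chunks.append(data)
    return b"".join(chunks).decode("ascii", errors="replace")


# Hypothetical usage; the host, port and query below are placeholders:
# print(query_status("device.example.com", 79, "coke"))
```

The same few lines would work against any device that answers a plain-text request on a known port, which is the point being made above about IP-based accessibility.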

Process

As a final witness in the demarcation debate I would like to call on IBM. IBM has always refrained from giving its different upstream practices a very high profile, preferring to classify oil and gas as part and parcel of the ‘process industry’. Now if you are a geophysicist or a driller such a holistic view of the business is very hard to achieve.

Process

But once you are in production, you are part of the process—downstream from the field, everything is tied together. Follow one pipe and it leads to the treatment unit, on to the refinery and thence to the consumer. Gas production and distribution systems are particularly hard to demarcate as town-dwelling consumers are linked directly to offshore production facilities.

Pandora’s box

The move from reservoir model to the e-field may open a Pandora’s box for upstream IT as it becomes a part of the ‘process’. Fortunately, legacy systems elsewhere in oil and gas look ready for a spring clean too—according to the newly released study from Cambridge Energy Research Associates—see page 12 of this issue. COTS, ping and power are poised to breathe new life into the silo-less processes of the future. And Oil IT Journal will keep on tracking these technologies—even if we do steer clear of refining!

INT director Jim Velasco presented the INT WebViewer family of products. The current WebViewer lineup includes components for viewing well log and seismic data. As implied by the title, these components have been designed to provide scalable, flexible and secure n-tier data browsers in the most timely, efficient, extensible and cost-effective way possible.

COTS

The WebViewer family is designed as a commercial off-the-shelf (COTS) solution leveraging a “just in time” application deployment model. Here the viewer application is delivered to each client from a central application/data server. INT’s Enterprise Viewer offers cross-platform client and server support, minimal configuration and deployment burden and JSP 1.2/Servlet 2.3 compliance. Velasco’s presentation concluded with a demonstration of INT’s Web LogViewer.

Trolltech Qt

INT’s experience as ‘widget’ (software component) provider to the main E&P vendors has shown that there are currently three main development environments for E&P applications: Java, C++/Qt and Microsoft’s .NET. INT supports all three, but is offering enhanced integration of its Carnac C++ Geotoolkit with Norwegian Trolltech’s Qt. Developers can now use the Qt-Builder rapid application development environment. INT is developing Qt-Builder-enabled widgets which support cross-platform Windows/Linux/UNIX deployment. Veritas has successfully deployed INT’s Qt widgets in its EXPOSE seismic viewer (see article).

Metropolis

Michael Glinsky described BHP Billiton’s use of Java-based distributed processing on Linux and data visualization of its ‘Metropolis’ simulated annealing algorithm for seismic inversion. The use of INT’s Java widgets allowed BHPB to move quickly from R&D prototype to production results, saving 25-30% of development costs with performance “rivaling C or Fortran”. BHPB uses a Seismic Unix ‘backbone’, INT Viewer, a Linux cluster with Load Sharing Facility (LSF) and an XML editor for parameter entry. Metropolis-based Bayesian update of the seismic model is the linchpin of the system. A layer-based prior model is refined consistently with the seismic response to forecast reservoir parameters.
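For readers unfamiliar with the technique, the following Python sketch shows the general idea of a Metropolis-based Bayesian update on a toy problem. It is not BHPB’s code and it is plain fixed-temperature Metropolis sampling rather than simulated annealing. The one-parameter ‘layer’ model, the linear forward model and every number below are assumptions invented for illustration.

```python
import math
import random


def metropolis(log_post, x0, step, n_samples, rng):
    """Random-walk Metropolis sampler for a single scalar parameter."""
    x, lp = x0, log_post(x0)
    samples = []
    for _ in range(n_samples):
        cand = x + rng.gauss(0.0, step)  # symmetric Gaussian proposal
        lp_cand = log_post(cand)
        # Accept with probability min(1, posterior(cand) / posterior(x)).
        if rng.random() < math.exp(min(0.0, lp_cand - lp)):
            x, lp = cand, lp_cand
        samples.append(x)
    return samples


def run_toy_inversion(seed=0):
    """Update a Gaussian prior on one layer property with one noisy datum."""
    prior_mean, prior_sd = 0.10, 0.05  # assumed prior on the layer property
    gain = 1.0                         # assumed linear forward model d = gain * m
    observed, noise_sd = 0.20, 0.02    # assumed 'seismic' datum and its noise

    def log_post(m):
        log_prior = -0.5 * ((m - prior_mean) / prior_sd) ** 2
        log_like = -0.5 * ((observed - gain * m) / noise_sd) ** 2
        return log_prior + log_like

    rng = random.Random(seed)
    samples = metropolis(log_post, prior_mean, 0.03, 20000, rng)
    kept = samples[2000:]  # discard burn-in
    sample_mean = sum(kept) / len(kept)

    # For this Gaussian toy case the posterior mean is known in closed form.
    precision = 1.0 / prior_sd ** 2 + gain ** 2 / noise_sd ** 2
    analytic_mean = (prior_mean / prior_sd ** 2
                     + gain * observed / noise_sd ** 2) / precision
    return sample_mean, analytic_mean


if __name__ == "__main__":
    print(run_toy_inversion())
```

In this toy case the sampled mean can be checked against the closed-form answer. A real inversion would have many layers and a nonlinear forward model, which is where a sampling approach of this kind becomes useful.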

.NET

INT project manager Roman Semenov described the ongoing port of GeoToolkit to Microsoft’s .NET architecture. .NET has proved to offer ‘excellent’ graphics performance and operating system independence. J/GeoToolkit’s 190,000 lines of code have translated into 150,000 lines of .NET, with over half ported without change. Development with Visual Studio for .NET was reported as fast and convenient: “We really enjoyed using the C# language”.

GIS Web Services

Bryan McKinley described how INT’s J/CarnacGIS map feature viewing and analysis framework can be combined with existing Java frameworks and INT components. INT’s OpenGIS-compliant data server improves GIS data homogenization from back-end tiers to clients. This technology generalizes feature collection querying abstractions with decoupled implementations to neutralize proprietary geospatial datastore differences.

Dulac

Jean-Claude Dulac of Earth Decision Sciences (EDS, formerly T-Surf) asked ‘Why Qt?’ EDS’ GoCad was written in C++ and Motif, but X-Windows emulation on Windows is ‘unsatisfactory’. Qt offers a rapid port route with access to source code, good support and provision of Windows widgets on Unix. Qt also offers “excellent class design, great documentation, Qt Script for Applications (QSA) and XML support”.

Veritas has leveraged INT’s GeoToolkit in its Vega seismic processing package and is now benefiting from GeoToolkit’s use of Trolltech’s Qt cross-platform GUI development tool. Veritas’ ‘Expose’ seismic data viewer has been developed to run on both Unix and Windows platforms to support Veritas’ sales personnel who require laptop access to data for demonstration to clients.

Howell

Veritas’ Manager of Interactive Software Development Gill Howell observed, “Switching to Qt involved a rewrite of all of Veritas’ Motif GUI code but little change was required for code developed with the GeoToolkit.”

Expose

Expose functionality includes zoom, pan, scale and color change of seismic data. Trace amplitudes can be measured and comparisons made between different sections. Expose is now undergoing testing in the Asia Pacific region. The system holds the equivalent of 100,000 kilometers of 2D data at 8-second record lengths. Veritas reports ‘outstanding’ graphical resolution and system performance. Expose can read data in Veritas’ native processing format, eliminating data conversion before visualization with off-the-shelf viewing tools.

Qt Designer

GeoToolkit display primitives are now integrated into Qt Designer, further simplifying the task of enhancing existing applications or writing new modules. Later, Expose will be applied to 3D data volumes and deployed in other regions.

OILspace is extending its OILwatch web-based content and communications platform to Pocket PC-based PDAs. OILwatch offers real-time news and pricing information from NYMEX, IPE, Platts, Dow Jones, Reuters and more. Users can also collaborate with colleagues in real-time from their desktop, laptop or while away from the office.

Strong demand

OILspace believes there is a strong demand for flexible, low cost access to commercial information while on the move. OILwatch, already a web-based system, was tailored to fit the unique screen sizes of mobile devices and to adapt quickly to support new oil content coming into the market.

PDA trial team

ChevronTexaco, ConocoPhillips, Hess Energy Trading, Kuwait Petroleum Company, Trafigura, Morgan Stanley and Scan Energy are all part of the current OILwatch PDA trial team. The trial gives market watchers a competitive edge by keeping them connected with the market.

Hellman

OILspace CEO Steve Hellman said, “OILwatch PDA is the latest of our software innovations specifically for oil professionals. This establishes OILspace well ahead of our competitors in terms of choice of content, diversity of delivery platforms and speed of implementation at the lowest possible cost.”

$ 195 per month

OILwatch was released in January 2002 and has since grown to a global customer base of 120 firms and over 650 users. The service starts at $195 per month.

Landmark Graphics Corp. has announced the release of its Corporate Data Archiver (CDA), a web-based data management tool for storing and protecting upstream digital assets. CDA provides rapid retrieval of critical data and knowledge, and reduces the need to rework projects or reacquire data due to lack or loss of information.

KM product line

CDA is the first release in Landmark’s new ‘Corporate Data and Knowledge Management’ product line. CDA stores definitive versions of data, information and knowledge, such as prospect evaluations or reservoir studies and is designed to visually record a project, automatically creating a snapshot of selected data. Snapshots include rich, contextual metadata about projects and eliminate the need to restore project data ‘just to see what’s there.’

Key word

A user-friendly interface allows users to locate archived information by entering a key word or phrase. CDA consolidates project data and documentation for output to Landmark’s PetroBank Master Data Store, where project archives can be securely managed, or saved to corporate IT disk and tape systems.

Conoco

Subsequent releases of CDA will support archiving with industry standard formats such as SEGY, LAS, WellLogML and third-party products. CDA leverages technology developed by PGS (the Slegge data store) and from Exprodat—which developed a Project Archiver for Conoco back in 2001 (Oil ITJ Vol 6 N°12).

INT and OpenSpirit are to work together to provide consulting solutions to the upstream oil and gas market, leveraging their previous experience on the OpenSpirit integration framework.

Mechdyne has installed the world’s first digital active 3D stereo rear-projected, curved-screen display for Landmark’s Dubai office. The system can display multiple data windows from different video sources on top of a background application.

ExxonMobil is planning to extend its worldwide license for Peloton’s WellView to include drilling data management and morning reporting.

John Wilson is the new president and CEO of Trade-Ranger and Cindy Hassler is VP marketing. Trade-Ranger is to open its European headquarters in Brussels in Q2 2003.

Bertrand du Castel will not be seeking re-election to the POSC Board this year. du Castel is now head of engineering at SchlumbergerSema’s smart card division.

A new website, peteng.com, offers petroleum engineers, geologists and technologists free calculators for ‘medium-complexity’ computations, graphics and data access.

Beijing Huayou Gas Corp. is to deploy MRO Software’s Maximo to manage its 1,098 kilometer gas pipeline operations.

CGG has opened a regional computing hub in Kuala Lumpur. The hub will be managed by CGG Asia Pacific MD Azmi Ismail.

Kader Sakani is general manager of Lynx Information Systems’ new Algeria office. Lynx Geodata offers exploration data scanning, seismic, well and map vectorization, tape copying and reformatting and GIS products.

Calgary-based LogTech has a new release of its well log application LOGfx. The software displays LAS and image log data simultaneously and offers color shading of digital logs for interpretation.

BG (ex British Gas) has selected Schlumberger’s Inside Reality VR system for its Interactive Visualization Center (iVC), located in Reading, UK.

Setrem

BG Petrotech business relationship manager Mark Setrem said, “Inside Reality (IR) is BG’s software of choice for the advanced workflows of our iVC. IR gives us the ability to plan our wells using seismic, geological and dynamic reservoir models interactively, in true virtual reality. The combination of the 3D-spatial and interactive well trajectory planning with IR represents a step-change in our workflow processes.”

Geosteering

IR is claimed to be the only commercial VR software for interactive well planning and real-time geosteering. Users navigate and interact with the data by walking, pointing and grabbing or by using a 3D data wand instead of keyboard and mouse.

Gras

IR’s Rutger Gras added, “BG can expect returns on investment from reduced decision making time, improved well performance and increased recovery”.

Venezuelan state oil company PDVSA is implementing Grid technology from IBM and Platform Computing to improve its reservoir simulation applications. IBM is working with PDVSA to ‘quantify the significant business benefits’ of the technology.

Augustin

PDVSA Technical Operations Manager Emilio Augustin said, “PDVSA is excited about this new technology and its potential across the enterprise.”

DataSynapse

IBM also announced new agreements with Grid middleware vendors Platform Computing and DataSynapse, both of which will play key roles in helping IBM deploy Grids in the enterprise. IBM Grid guru Tom Hawk said, “The commercial market for Grids is set to expand, particularly with the introduction of the Globus Toolkit 3.0, the first Open Grid Services Architecture (OGSA)-compliant Grid middleware.”

TotalFinaElf (soon to be re-baptized ‘Total’) has awarded a major telecommunications contract to France Telecom unit Equant. The ‘Contact’ secure IP-based virtual private network (VPN) will link 1,500 TFE sites in 75 countries with local bandwidth of up to 655 Mbps.

Chalon

TFE IT director Philippe Chalon said, “The network is the backbone of our information system and has to be robust, secure and capable of evolving with business needs. We are seeking to rationalize IT infrastructure, to distribute applications and corporate data and to share knowledge between domain experts. Equant’s IP VPN provides bandwidth on demand between our 1,500 sites throughout the world.” The network, which uses Multiprotocol Label Switching (MPLS), will be fully operational in 18 months.

€ 30 million

The network offers TFE secure internet and intranet access and data services including voice over IP. A report in Les Echos valued the contract in the range of € 30-60 million over a three-year period. France Telecom’s Olivier Voirin told Les Echos, “Data transmission costs halve every two years, but this is compensated for by ever-increasing data volumes.”

VisionMonitor Compliance Intelligence (VMCI) proactively tracks, logs, analyzes and forecasts environmental compliance operations. The system is VisionMonitor’s latest and most advanced environmental management information system (EMIS) solution and was developed in response to the increasingly onerous activity of managing and monitoring compliance with HSE regulation in the energy industry.

Mantor

VisionMonitor chairman Torgeir Mantor said, “The energy industry is still subject to heavy regulation. HSE and financial regulations continue to change, making it difficult for operators to stay current with the requirements of federal, state and local regulators. Many companies make extensive use of outside consultant time to assure compliance with these regulations.”

.NET

VMCI runs on Microsoft’s .NET and comprises modules for data gathering, event tracking, emissions monitoring, predictive modeling and compliance assurance. The web-based software offers portal views tailored to plant (Plant Vision) or corporate (Corporate Vision) workflows. More from www.visionmonitor.com.

Stuart Robinson reported back from the 4th National Data Repository meeting held in Stavanger last year. Robinson defines a National Data Repository (NDR) as a place “where data sets can be shared amongst partners, regulatory bodies and other interested parties”. Various rationales for NDR establishment have been invoked: cost reduction, national archive and the requirement to attract new entrants. Robinson asked if NDRs deliver business value, a debatable point. Strict business value in terms of cost savings may be hard to achieve, but may not be really necessary in view of the greater overall benefits. The one-size-fits-all approach definitely does not apply to an NDR. Different data release legislation, data ownership, culture and oil province maturity make for different objectives and approaches. Oil company users should realize that the NDR is here to stay and will benefit those who ‘get involved’.

The Pilot Data Initiative

CDA MD Malcolm Fleming described the UK Government’s Pilot Data Initiative which was established last year to develop a data access, storage and National Archive model for UK data. Participants include DTI, UKOOA, BGS, CDA, service companies and consultants. The project sets out to resolve ‘deficiencies’ in existing data legislation and management. In general, there is ‘confusion around ownership, rights, obligations and liabilities of license data’. The solution integrates existing repositories (DEAL, CDA) and proposes a new National Archive. The resulting ‘life-cycle’ model envisages holding data in a repository during the ‘active phase’ of its lifecycle. Upon license relinquishment the data moves into a National Archive, to be maintained by the BGS. This move relieves licensees of their obligations to keep data ‘in perpetuity.’ Concomitant with this program is an overall reduction in the release period from the current 5 to 4 years. A trial on data from Kerr-McGee’s Hutton field is currently underway.

Landmark

Jon Lewis regards IT as a lever for value creation. For Landmark, the hosting of data and application software represents a new business model as witnessed by Landmark’s new UK data portal ukcsdata.com. When operational, the portal will provide access to multiple commercial and other data sources and will be of particular benefit to new entrants by supplying comprehensive data through new leasing arrangements, lowering barriers to entry and broadening the market. Deployed in-house, hosting centers become ‘hubs’ or ‘MegaCenters’ in Shell’s terminology. The latest release of Landmark’s Surf and Connect leverages ESRI’s technology to simultaneously view Norwegian wells in DISKOS, UK CS wells out of CDA and in-house data in GeoFrame or OpenWorks. ENI now deploys a Landmark portal in-house at its Milan-based hosting center. This supports workers in Europe and Kazakhstan with some 140 application types. Lewis believes that companies the size of ENI and Shell are ‘a market unto themselves’—with the critical mass required for internal implementation.

Shell

Erik van Kuijk—head of Shell Expro’s Discovery data clean-up project—retraced the philosophy that Shell’s North Sea unit has developed around data management. The tenets of van Kuijk’s approach are the ‘KID’—Knowledge Information Data continuum and ‘entropy’—the notion that management involves the ‘cooling down’ from chaotic initial states of data and business processes. This approach has now been extended to ‘software portfolio entropy’—where van Kuijk notes that “the consistency and connectivity of every software portfolio deteriorate over time unless effort is spent”. Expro has rationalized its software portfolio into loosely coupled domains (subsurface, wells, production etc.). These are progressively being linked together into ‘active workflows’ which are constrained and coupled through the use of a consistent catalogue of data attributes. Catalogues are ‘hard-wired’ into the workflows so that users ‘don’t have to worry about the consistent terminology’. There is none the less prescription—people will be ‘forced to adopt and their behavior measured’.

Paras

Alan Smith outlined Paras’ new ‘ONCE’ concept of ‘one-time’ data management: new ways of working and consistent, correct data, all delivered economically and efficiently. The business driver behind ONCE is the aging workforce, which means that more is being asked of fewer people. Paras has applied the ONCE methodology in a project for Premier Oil. Here various domains have benefited from the ONCE rationalization. HR reporting for instance has been streamlined, and information is now available through a ‘self service’ web portal. Similar rationalization is in progress for production reporting, where ‘spreadsheet-based’ reporting has been eliminated.

ExxonMobil

Dawn De La Garza described how ExxonMobil is ‘learning to swim in a sea of data’. Nuggets of knowledge are being lost in an ocean of data. For ExxonMobil, the answer is to capture key interpretations and archive or delete unnecessary data. Standards are important too—De La Garza cited issues with different workstation formats, but sees help coming from projects such as POSC’s Practical Well Log initiative and WITSML for rig site data – a ‘great effort’. ExxonMobil’s Upstream Technical Computing organization was formed to deliver and support a standard technology system offering seamless integrated technical IT for all upstream professionals. ExxonMobil personnel can work on projects irrespective of their location. Procedures, people and technology have been standardized and central services are leveraged for data entry and loading. While there are no data management ‘silver bullets,’ standards can be the ‘silver lining’—facilitating partner, vendor and service company interaction.

Schlumberger

Today, multiple in-house and inconsistent stores cohabit with external data centers. In this heterogeneous world, Schlumberger’s Steve Hawtin recommends understanding ‘what works for you’ and defining long term goals. Schlumberger Information Solutions is ready to help out with consultancy services, documentation of workflows and planning. Key to the SIS offering is the Master Data Store, now redefined as ‘a collection of repositories holding approved data and connecting business processes’.

Web services

Shell’s Richard Mapleston described the particular security challenges of moving upstream data. Key requirements are for secure, shared access to documents and applications. Shell is experimenting with Web Services as providing secure automated information flow both internally and with external partners. In 2001 a pilot was initiated with the UK DTI and IBM. Last year Shell Expro implemented web services in its internal Discovery.com portal. For 2003, upstream web services will be implemented with SAP portals. Mapleston believes that security issues can be successfully managed by adopting and trusting common standards. Shell is working on ‘Pathfinder’ developments testing digital signatures. Consultant Niall Young sketched a hypothetical use of web services interaction between oil companies and government. A complete e-government implementation would however be complex and expensive to implement. A web service which ensured well header nomenclature consistency between operators, CDA and the DTI would be more realistic. Young believes that standard data validation such as the supply of DTI well numbers should be supplied via generic web services.

Exprodat

Gareth Smith reckons that around 80% of E&P data has a spatial component and E&P professionals have come to expect map views of their data. GIS implies a considerable overhead in spatial data set maintenance. Smith believes that ‘few companies realize the full business value of their spatial data and systems’. Exprodat therefore proposes a ‘cookbook’ GIS strategy comprising the provision of simple tools for the masses and a focus on ArcIMS implementation before the desktop. Content provision is important and should expose high value corporate data and trusted data sources such as DEAL and PetroView.

Instant Library

Paul Duller described disasters and their consequences before offering guidelines for prevention and recovery. Risk assessment maps the likelihood of disaster against severity. Risks are documented and prioritized. Information loss prevention relies on standards like BS 5454 for library storage. Duller stresses the practicalities of data preservation. Data should be stored on upper floors (not in the basement), suitable racking should be used that diverts falling water and assets should be kept away from water pipes and damp. While disasters are extraordinary events, they are ‘sufficiently frequent and similar to be amenable to planning and prevention’.
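The likelihood-versus-severity mapping lends itself to a very small worked example. The Python sketch below scores each risk as likelihood times severity on assumed 1 to 5 scales and sorts the register so that the highest scores come first. The scales, the multiplication rule, the tie-breaking order and the sample risks are all assumptions made for illustration, not Duller’s published method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    severity: int    # assumed scale: 1 (minor) to 5 (catastrophic)

    @property
    def score(self) -> int:
        # A common convention: position in the matrix as a single number.
        return self.likelihood * self.severity


def prioritize(risks):
    """Return risks ordered by score, then severity, then name."""
    return sorted(risks, key=lambda r: (-r.score, -r.severity, r.name))


# Hypothetical register entries for a tape and document store.
register = [
    Risk("Racking collapse", likelihood=1, severity=3),
    Risk("Basement flood", likelihood=2, severity=5),
    Risk("Burst water pipe above store", likelihood=3, severity=4),
]

for risk in prioritize(register):
    print(f"{risk.score:>2}  {risk.name}")
```

Documenting the register in this form makes the prioritization step repeatable when likelihood or severity estimates are revised.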

Flare Consultants

Paul Cleverley showed how information management (IM) can be integrated into the corporate risk control framework. The same consequence/likelihood mapping as proposed by Duller can be applied to information management. Cleverley describes IM risks as including poor learning, knowledge loss and information quality, poor accessibility and infrastructure failure. Flare’s work with Shell Expro on the Discovery data cleanup project has demonstrated the importance of a common vocabulary in tying together the different parts of the E&P workflow. Catalogue-defined terminology can be further leveraged by ‘process management’ to ensure that ‘key products are published to the right places’. Once E&P terminology has been ‘embedded’ in the system, graphical drill down across multi-domain data sets becomes possible. Virtual team working between regional offices has also been enabled and key remarks and audit trails are now published to the corporate ‘memory’.

This article has been abstracted from a 16 page illustrated report produced as part of The Data Room’s Technology Watch service. For more information email info@oilit.com.

Chris Morse (Honeywell) traced the history and differences between Distributed Control Systems (Honeywell’s specialty) and SCADA. Today it is possible to build hybrid DCS/SCADA systems and benefit from reduced hardware costs. In an integrated DCS/SCADA system all points, displays and alarms can be viewed anywhere. Information can be subscribed to and published to all users. Web technology is ‘taking the Control and Acquisition out of SCADA’. Morse showed data query of the Forties Pipeline System, an on-line historian holding pipeline data from the past two years. This provides desktop users with access to trend data and historical data of past engineering configurations. Such integration offers asset managers enhanced equipment monitoring, predictive maintenance, demand forecasting and leak detection. OLE for Process Control (OPC) is an important enabler. According to Morse, the biggest benefit of web technology is more, and more accurate, information.

LogicaCMG

Steven Mustard (LogicaCMG) believes that SCADA (in use for thirty years) should be integrated more tightly with corporate IT. Commercial off-the-shelf (COTS) hardware and software can be economically rolled into solutions, reducing costs and maintenance. Standards encourage competition between vendors, further driving down costs. Standard development tools can be used and acquired data can be distributed more widely across the enterprise. COTS components can also positively impact system availability, reliability and safety. A range of internet security tools (firewalls, encryption etc.) can be used to secure such integrated systems.

ABB

Folke Dahlfors (ABB) vaunted the merits of open systems, citing IEC 61968 from the International Electrotechnical Commission along with generic IT standards such as those of the W3C, and more vertical standards like OPC and the OMG CCAPI TF. ABB has developed a suite of power control IT building blocks, Aspect Objects, which leverage standards to link enterprise IT with SCADA.

Invensys

Francesco Cammarata (Invensys) notes that the SCADA arena is experiencing rapid change due to evolving technology, deregulation and new ways of working. The future lies in integrated systems which encompass field automation, advanced SCADA, CIS/CDR and distribution/optimization software. Cammarata argues that SCADA incumbents are best placed to manage these changes. Invensys offers a vertical solution from ‘plant to portal’, or from ‘data source to information store’. Invensys’ vision for 2005 is for ‘completely integrated’ e-business, ERP and automation business solutions. This is to be achieved through Invensys’ ArchestrA integration platform.

Ruhrgas

Peter Götzen (Ruhrgas AG) described the Ruhrgas Dispatching Application and Information System (DAISY), built by Berlin-based software house PSI. The PSI SCADA system captures data from different domains into an Oracle database. Messaging leverages the EDIGAS (GASDAT) standards as well as import through XML data files. The system uses gas grid simulation software SIMONE from LIWACOM and Simone Research. An in-house developed application handles transport and trading contracts.

“There is an interesting demographic shift from previous SCADA conferences in that a majority of attendees used to hail from the electrical engineering community. At the IBC EU SCADA conference, a show of hands revealed that half of those present were from IT. As the industry moves from silo-like systems with relatively little interconnection, to ubiquitously connected, web-based data availability, the emerging problem is one of data ownership.

Gospel truth?

Today, or in the near future, it will be possible for accountants to access flow data from a production asset. But what will such data mean? There is a danger that ‘data’ will be considered as ‘gospel truth’ and used inappropriately. The solution comes from new messaging systems which allow for data to be tagged with information about ownership and quality. In old SCADA and DCS systems data was sent as groups of binary bits whose meaning was only known to proprietary software and systems.

OLE for process control

Now information is tagged, using a variety of more or less proprietary formats. As yet there is no leading tag format, although the OLE for Process Control (OPC) Foundation is making a claim for such. All this is coming to a head as affordable primary level devices get smarter and cheaper. The industry has to an extent been ‘caught on the hop’ and is now waiting for the next step beyond FieldBus.”
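To make the idea of tagging data with ownership and quality concrete, here is a minimal sketch. The field names, the tag `asset1.flow`, the owner string and the values are all hypothetical. The three quality levels loosely echo the good/uncertain/bad status commonly carried with OPC items, but nothing here represents an actual OPC interface.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Quality(Enum):
    GOOD = "good"
    UNCERTAIN = "uncertain"
    BAD = "bad"


@dataclass(frozen=True)
class TaggedValue:
    """A measurement that carries its own context (hypothetical schema)."""
    tag: str          # point name, e.g. a flow meter
    value: float
    units: str
    owner: str        # who is responsible for the data
    quality: Quality
    timestamp: datetime


def usable_for_reporting(reading: TaggedValue) -> bool:
    # A downstream consumer (e.g. accounting) only trusts GOOD readings.
    return reading.quality is Quality.GOOD


# Invented readings for illustration.
stamp = datetime(2003, 3, 1, 12, 0, tzinfo=timezone.utc)
readings = [
    TaggedValue("asset1.flow", 1250.0, "bbl/d", "production-ops", Quality.GOOD, stamp),
    TaggedValue("asset1.flow", 0.0, "bbl/d", "production-ops", Quality.BAD, stamp),
    TaggedValue("asset1.flow", 1190.0, "bbl/d", "production-ops", Quality.UNCERTAIN, stamp),
]

trusted = [r for r in readings if usable_for_reporting(r)]
print(f"{len(trusted)} of {len(readings)} readings are fit for reporting")
```

Because ownership and quality travel with each value, a non-specialist consumer can filter out suspect readings rather than treating every number as ‘gospel truth’.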

eLynx Technologies is using its MeterLynx solution to automate St. Mary Land and Exploration Company’s pipeline delivery points. eLynx built St. Mary’s MeterLynx solution to allow for hourly polling of operational data and collection of electronic flow meter files. St. Mary can now view current flow rates, pressures, and other values over the internet along with stored historical production data.

Willson

St. Mary LLE VP Kevin Willson said, “There is obvious value to St. Mary to know real-time production numbers on our pipeline delivery points. eLynx offers a simple, reliable and cost-effective solution that gets us this critical data”.

Text message

St. Mary uses eLynx’s database alarm callout feature, which sends text messages, via mobile phone, to operations personnel when an alarm occurs. Other notification options are pager, phone and e-mail when a well is down. MeterLynx is able to do all of this using wireless communications to transmit data from the well to the eLynx Data Center. More from www.elynxtech.com.
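An alarm callout of the kind described above can be sketched as a limit check followed by a fan-out to each contact's preferred channel. This is an illustration only, not eLynx's implementation: the `Contact` and `Alarm` types, the low-pressure limit and the `send` callback are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Contact:
    name: str
    channel: str   # e.g. "sms", "pager", "email" -- assumed channel names
    address: str


@dataclass(frozen=True)
class Alarm:
    point: str
    value: float
    low_limit: float


def check_low_limit(point: str, value: float, low_limit: float) -> Optional[Alarm]:
    """Return an Alarm when the polled value falls below its limit."""
    if value < low_limit:
        return Alarm(point, value, low_limit)
    return None


def call_out(alarm: Alarm, contacts: List[Contact],
             send: Callable[[str, str, str], None]) -> int:
    """Notify every contact on their own channel; return the number notified."""
    message = f"ALARM {alarm.point}: {alarm.value} below limit {alarm.low_limit}"
    for contact in contacts:
        send(contact.channel, contact.address, message)
    return len(contacts)


# Stand-in transport that just records what would be sent.
outbox = []


def record(channel: str, address: str, message: str) -> None:
    outbox.append((channel, address, message))


# Invented contacts and readings for illustration.
contacts = [
    Contact("operator", "sms", "555-0100"),
    Contact("supervisor", "email", "supervisor@example.com"),
]

alarm = check_low_limit("delivery_point_7.pressure", 180.0, low_limit=200.0)
if alarm is not None:
    call_out(alarm, contacts, record)

for entry in outbox:
    print(entry)
```

Passing the transport in as a callback keeps the limit logic independent of whether the message ends up as a text, a page or an e-mail.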

Schlumberger has put its cementing, stimulation and coiled tubing field data handbook online. The new online version, the ‘i-Handbook’, is available for free download at www.slb.com/oilfield/ihandbook/.

35 years old

Schlumberger first published its Field Data Handbook 35 years ago. It has since become an industry standard for petroleum engineers - providing the key data engineers need to drill and complete a well.

Keese

Schlumberger Oilfield Services’ Roger Keese said, “Using the i-Handbook engineers are able to do in-field quality checks, basic materials formulations and quick cementing computations to expedite drilling the well. This advanced resource includes calculators for fast and consistent computations and an exhaustive tubulars database with dimensions and mechanical properties data.”

ZettaWorks has just announced a new wireless Universal Applications Messaging (UAM) practice designed to extend e-business to wireless devices. UAM is built on ZettaWorks’ expertise in integrating TIBCO applications, coupled with UnBound Technologies’ wireless business platform.

Pash

ZettaWorks COO Tom Pash said, “Wireless UAM revolutionizes business, because it allows the extension of business processes to the distributed enterprise.”

McMichael

According to UnBound CEO Chase McMichael, “Our studies indicate that instant message accounts for business purposes will increase more than 200 percent over the next four years.” More from unboundtech.com—notably a paper on ‘EnronOnTheGo’ - a ‘perfect example of a bidding interaction via the web’... err - up to a point!

BP has signed a five-year license agreement with Aspen Technology, Inc. to extend its use of the Aspen Engineering Suite (AES) for process simulation, design and performance optimization. The deal standardizes simulation technology across BP’s global upstream, refining and petrochemical businesses. The new agreement brings together AspenTech’s process modeling technology and recently acquired technologies from Hyprotech—used in BP for over a decade.

Flexibility

AspenTech’s engineering tools will give BP greater flexibility in executing major projects across the different business units—reducing internal development and engineering efforts and providing ‘significant economic benefits’ through increased efficiency of its projects.

Hutchinson

BP Group Engineering Manager Gordon Hutchinson said, “BP is a long-term user of both AspenTech and Hyprotech technologies, and this agreement enables us to consolidate on a single engineering solution.”

McQuillin

AspenTech president David McQuillin added, “The addition of Hyprotech’s products to our Engineering Suite provides engineering solutions that enable model-based decision making across upstream, refining and chemicals.”

Icarus

Under the new agreement, BP will have access to several additional features of AES, such as Aspen Icarus technology for economic evaluation, permitting the company to assess the economic viability of alternative process designs prior to capital commitment.

Houston-based Geodynamic Solutions, Inc. has just released Spatial Search Engine (SSE), a web-based search technology that helps users locate information through a map interface. SSE uses a proprietary GeoFilter to intelligently search for information on both the internet and internal corporate file systems. SSE includes an adapter for accessing information stored in Documentum; other document management systems will be added ‘real soon now’.

Office

SSE handles data in a variety of formats including Word, Excel, PowerPoint, PDF, and HTML. SSE also works with all ESRI-based mapping systems including ArcGIS, ArcIMS, and Petrolynx Web Maps. SSE’s open and extensible architecture allows integration with other search engines supporting XML/SOAP and with Enterprise Content Management (ECM) software. Support for MS-Index Server searches is planned.

Secure

SSE security includes built-in encryption and can be integrated with IIS/NT or LDAP authentication. Spatial Search Engine can be licensed on a corporate or user basis in either a hosted or intranet environment. More from www.geodynamic.com.

The Ministry of Energy and Mineral Resources of the Republic of Kazakhstan has awarded Landmark Graphics Corp. a contract for the design, development and implementation of a National E&P Data Bank (NDB). The core technology to be deployed in the Kazakh NDB is Landmark’s PetroBank networked multi-client data management system.

Uzhkenov

Kazakh energy ministry representative Bulat Uzhkenov said, “This agreement gives Kazakhstan an opportunity to create a quality-controlled and highly secure database for the management of its national asset of oil and gas data and to establish a government-industry National Data Bank operation similar to those in the UK, Norway and Brazil. The NDB will serve as a national archive and by its nature attract inward investment.”

Gibson

Halliburton Energy Services president and CEO John Gibson added, “Kazakhstan is rapidly becoming a major producer and exporter of hydrocarbons. The Ministry’s decision to leverage Landmark’s data management solutions and experience is an indication of the confidence in PetroBank data hosting and management.”

Shell has selected Halliburton unit Magic Earth’s GeoProbe to equip its global visualization centers and its geoscientists’ desktops. The agreement is said to be ‘one of the largest commitments to date by an oil and gas company for Magic Earth’s technology.’

Ching

Shell director of E&P technology Paul Ching said, “GeoProbe will play an important role in our visualization and interpretation work. The addition of GeoProbe to our subsurface toolbox will be a great benefit to Shell’s interpretation experts. GeoProbe, combined with other third party tools, and our own unique proprietary technology will ensure that Shell’s technology capability remains at the forefront of the industry.”

Zeitlin

Magic Earth president Mike Zeitlin added, “We look forward to Shell using GeoProbe to add vital new reserves to the world’s inventory while maximizing the value of their technology investment through significantly reduced cycle times and improved accuracy.”

Houston-based well planning and casing design software house T H Hill Associates is to offer its software via Petris’ ASP Service - PetrisWINDS NOW! Four new drilling engineering applications will now be available on a monthly subscription basis.

Altizer

Shawn Altizer, business development manager with T H Hill, explained, “WellOptimizer, CasingDesigner, Rig Toolbox and Drillstring Toolbox will be bundled into a single drilling engineering suite to allow a user access to all the applications with a single login. Our alliance with Petris provides both an innovative distribution channel for our software tools and the opportunity to reach target markets more effectively.”

Pritchett

Petris CEO Jim Pritchett added, “T H Hill’s applications greatly enhance the solution set we can provide our clients and complement our growing body of oil and gas software and services.”

Calgary-based International Datashare Corporation (IDC) announces the results of a Summary Judgment related to a lawsuit between its Riley Electric Log unit and John S. Herold Inc. A federal court in Houston declared that Herold may not reproduce or re-market the Riley logs it had acquired for its libraries since 1995. This result grants protection to Riley for its 3 million US well logs.

Stein

IDC president Norm Stein said, “This outcome gives us tremendous confidence that our collection of well logs is protected and that unauthorized copying will not be an accepted practice. Riley has served the US oil and gas industry for over 50 years.”

Energisite.com

Riley offers a number of log related products including hardcopy, raster and digital logs. These can be ordered through www.energisite.com. Riley also offers log management software and services to allow companies to manage their company log database.

Landmark Graphics Corp. has just released its DecisionSpace suite of ‘next-generation’ directional well planning software. The integrated suite, comprised of Asset-Planner, TracPlanner and PrecisionTarget, will allow asset teams to plan field development paths for multiple platforms, leveraging advanced well-path planning and workflow-based technology.

Lane

Landmark president Andy Lane said, “Our DecisionSpace well planning suite will reduce field development cycle times and allow for more accurate wellbore placement, increasing production.” DecisionSpace’s common visualization environment integrates with OpenWorks and 3D DrillView.

Lotsberg

Statoil has been using PrecisionTarget for well planning on its lead assets. Oddvar Lotsberg, senior engineer for Statoil said, “Statoil applies PrecisionTarget on all Heidrun field wells, for planning and real-time operations. The tool and associated work processes help realize the value of our new real-time operational center”.

Halliburton Energy Services has teamed with CapRock Services Corp. for management and expansion of its telecommunications infrastructure, HalLink. Halliburton’s satellite telecommunications network currently reaches more than 200 global work sites.

Gibson

HES president John Gibson said, “Our global network provides real time operations capability to our customers in support of our reservoir solutions strategy. CapRock is taking this high-bandwidth satellite telecommunications technology and service and will be expanding it to reach a wider commercial base.”

Shaper

CapRock CEO Peter Shaper added, “This relationship opens up extensive opportunities to share this network with other customers, providing them with a value unmatched in the industry.” HES will remain actively involved in the management of its real-time telecommunications network for its customers.

In our piece on the PPDM Association’s XML-based data exchange project last month, we accidentally ascribed the acronym ‘DAEX’ to the project. Worse still we misquoted Kelman’s Greg Hess as using it! Steve Hawtin, integration ‘champion’ with Schlumberger Information Solutions put us right, “DAEX is a Schlumberger trademark acquired from Oilfield Systems along with the DAEX software. DAEX actually predates XML, but the underlying design always included the concept of a readable ‘standard’ encoding for data. When the XML definition came out Oilfield Systems extended the support libraries to allow customers to use it. The latest release (DAEX 4.2) still accepts the ASCII and Binary encodings but most customers stick with the XML.”

UK-based SpectrumITech (the I.T. Division of Spectrum Energy and Information Technology Ltd.) and its local partner Saudi Geophysical have been awarded a data management contract by Saudi Aramco. The two-year contract was awarded to Saudi Geophysical, with technical and managerial expertise to be provided by Spectrum.

20 million documents

The contract encompasses the rationalization, indexing and electronic capture of up to 20 million documents sourced from a number of different Aramco locations. Documents vary in size and format. Those in poor condition are being optically enhanced prior to scanning. The captured images, together with their associated metadata, are being uploaded into Aramco’s 35,000 seat OpenText LiveLink repository.

Lucas

Spectrum’s proprietary data enhancement and capture software and associated procedures are now fully operational. Spectrum Operations Manager Jon Lucas said, “The award of this contract will allow Aramco to benefit from Spectrum’s considerable experience with similar large scale document rationalization and capture projects on behalf of a number of UK and European oil companies. We see this contract as a spring board for developing Spectrum’s business throughout the Gulf region”.

TGS-NOPEC and its wholly-owned subsidiary, A2D Technologies, are moving their data center to the Sprint E-Solutions co-location hosting facility in Denver. The move will bring improved security and redundancy and will centralize data and applications. TGS-NOPEC’s well log assets and internet applications will benefit from Sprint’s Tier 1 Internet backbone connectivity. The high-tech facility offers redundant power supply, automated environmental monitoring, intrusion and access control, and 24x7 staffing.

Log-Line Plus!

Initially, A2D’s Log-Line Plus! e-commerce portal for well log data will be hosted at the new facility but other data types from A2D and TGS-Nopec will be added at a later date. Bandwidth to and from the facility is 10 Mbps, but this can scale on demand.

Hicks

TGS-Nopec CTO David Hicks said, “LOG-LINE Plus! is the first step in our strategy to provide our customers with access to the data they need to be successful in the most secure environment available. We will continue to deploy additional data types through this enterprise and provide an integrated data mechanism for access and delivery of crucial data for the oil and gas industry”.

Linde

Sprint VP sales Peter von der Linde said, “Helping customers simplify their information management is what Sprint is all about. We’re helping A2D achieve its business objectives by making this business-critical information more available and reliable. Our E-Solutions Centers reflect Sprint’s ability to give customers a new level of value beyond collocation and hosting. Customers can now come to us at any stage of a business challenge and get complete customized or pre-configured internet-based solutions for their specific needs.”

A new study from Cambridge Energy Research Associates (CERA) reveals that ‘expanding the use of new-generation digital technologies can potentially increase world oil reserves by 125 billion barrels’ – an amount greater than Iraq’s current reserves.

Severns

CERA research director Bill Severns said, “Five key technologies—remote sensing, visualization, intelligent drilling and completions, automation and data integration—will be the core of a new, vastly improved set of tools that will enable energy companies to see reserves more clearly, plan optimal drilling and production strategies, and manage operations more efficiently.”

Braxton

The report “The Digital Oil Field of the Future: Enabling Next Generation Reservoir Performance” was undertaken in collaboration with more than thirty energy and technology companies, including lead sponsors Braxton (formerly Deloitte Consulting), Landmark Graphics Corporation, and Intel Corporation.

Cooper

Braxton oil and gas VP Dick Cooper added, “Achieving the vision of the digital oil field will require the alignment of strategy, structure, culture, systems, business processes and, perhaps most important, behavior.”

4D seismics

4D, or time-lapse, seismics was singled out in the study as a technology capable of improving incremental recovery by 3% to 7%. Other ‘significant’ tools include gravity surveying, electro-magnetic monitoring, permanent geophones and visualization technology.

SCADA

According to the study, another key technology is SCADA (supervisory control and data acquisition) which was singled out as an area in which this digital evolution is likely to occur. More from www.cera.com.